XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, as well as narrative analysis and top takeaways. Speakers’ opinions are their own.

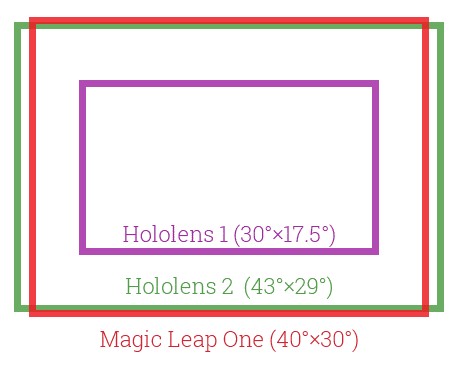

After the initial wave of coverage and launch-day excitement over Hololens 2, more information is emerging that goes deeper and answers looming questions. We haven’t worn one, but we have talked to developers who rave about the upgrade in field of view (FOV) and overall UX.

“Compared to the first version, it’s a bit lighter and smaller, but when you put it on, what you see is way bigger,” said The Verge’s Dieter Bohn, echoing the developer comments we’ve been hearing. His review is one of the two videos (embedded below) that we selected for this week’s XR Talks.

“Even though what Microsoft is showing is still pretty early as a prototype, and has a bunch of half-finished software, I think it’s a big leap forward at least from a tech perspective,” continued Bohn in his review. “The tech inside this thing is seriously impressive.”

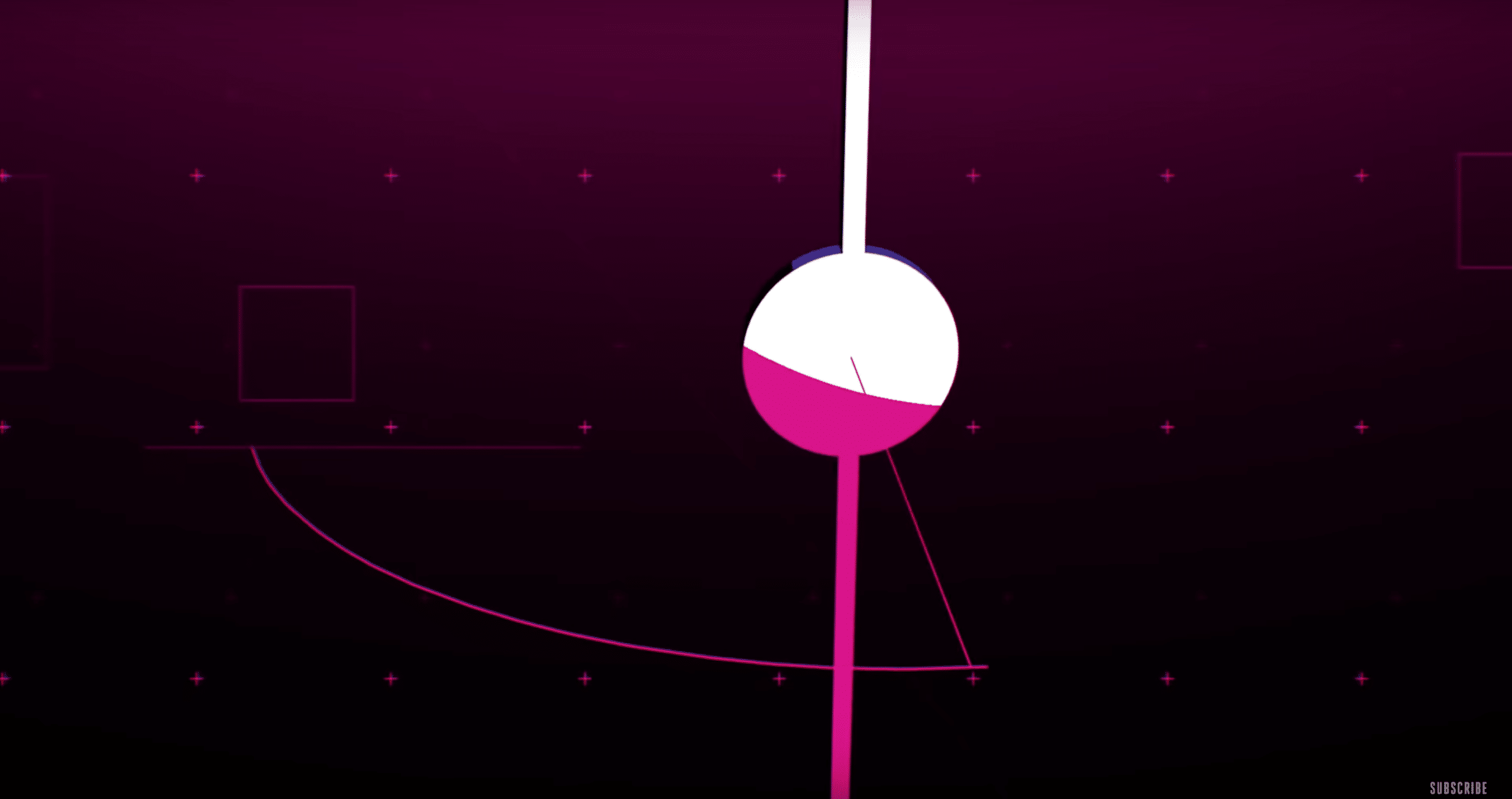

For example, what creates the wider FOV? Hololens 2 employs a waveguide system, but instead of reflecting light into your eyes, it projects images on the enclosure. This involves a MEMS system that redirects a laser with a small disc, the rapid oscillation of which defines the arc of the FOV.

That admittedly sounds confusing, but the video below visualizes it well. The system also requires a lot of speed, because deflecting laser images across a wider arc (thus attaining a wider FOV) sacrifices resolution. That is, unless the disc oscillates really fast, a sort of zoetrope effect.

“It works by having lasers hit mirrors that scan 54K times per second,” said Bohn, adding that each eye gets 2K resolution. “The result is that you get a wider field of view, the holograms don’t clip as much and they just feel more natural. They have a higher density, they just feel more real.”
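To put rough numbers on that trade-off, here is a minimal Python sketch. Only the 54K scans-per-second figure comes from Bohn’s quote. The 60 frames-per-second refresh rate, the idea that each scan draws one line, the 1,000-pixel width and the 30- and 50-degree fields of view are all hypothetical values chosen for illustration, not Microsoft specifications.

```python
def pixels_per_degree(pixels_across: int, fov_degrees: float) -> float:
    """Angular pixel density along one axis: pixels spread over the arc."""
    return pixels_across / fov_degrees


def lines_per_frame(scans_per_second: int, frames_per_second: int) -> float:
    """Scan lines available per frame, ASSUMING one line per mirror scan."""
    return scans_per_second / frames_per_second


# Hypothetical values for illustration only (not from Microsoft).
PIXELS_ACROSS = 1_000
NARROW_FOV_DEG = 30.0
WIDE_FOV_DEG = 50.0
ASSUMED_FPS = 60

# From Bohn's quote: mirrors "scan 54K times per second".
SCANS_PER_SECOND = 54_000

narrow_density = pixels_per_degree(PIXELS_ACROSS, NARROW_FOV_DEG)
wide_density = pixels_per_degree(PIXELS_ACROSS, WIDE_FOV_DEG)

# Same pixel count over a wider arc gives fewer pixels per degree.
print(f"Density at {NARROW_FOV_DEG} degrees: {narrow_density:.1f} px/deg")
print(f"Density at {WIDE_FOV_DEG} degrees: {wide_density:.1f} px/deg")

# A faster scanner buys back lines that can be spread across the wider arc.
print(f"Lines per frame: {lines_per_frame(SCANS_PER_SECOND, ASSUMED_FPS):.0f}")
```

The point of the sketch is simply that a fixed pixel budget thins out as the arc widens, so the scanner has to run faster to keep density up.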

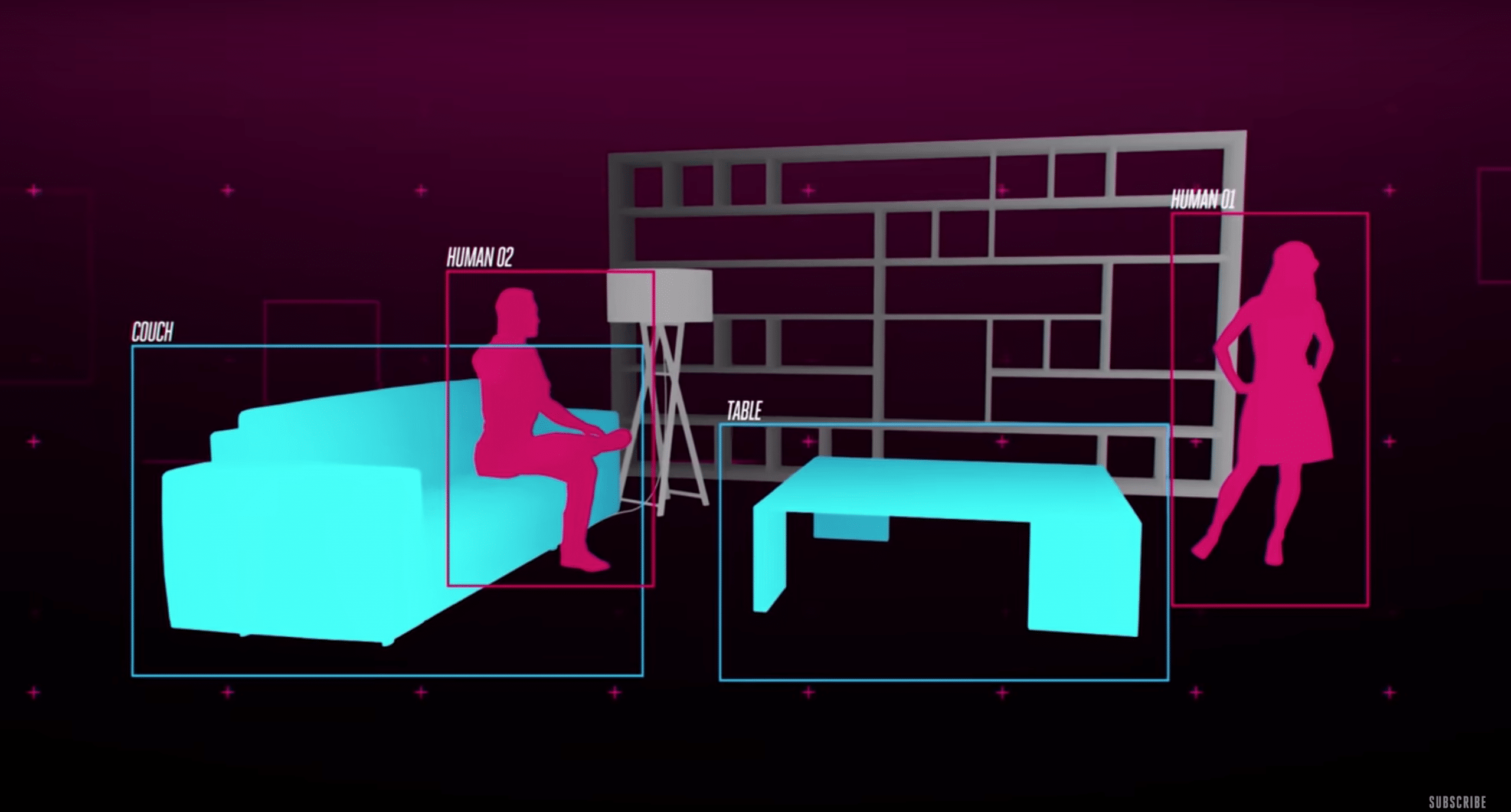

Moving from optics to interactions, Hololens 2 has a better understanding of its surroundings. This taps into some of the machine learning that’s delivered to the device from Azure (Microsoft’s enterprise cloud platform), and some of the software Microsoft is developing, such as Spatial Anchors.

“Hololens 1 was one big mesh,” Microsoft’s Alex Kipman told Bohn. “It was like dropping a blanket over the world. With Hololens 2, we go from spatial mapping to semantic understanding… what’s a couch? What’s human… What’s the difference between a window and a wall?”

Hand tracking has also improved greatly. Hololens 2 graduates from the limited set of gestural inputs (pinching, etc.) to a more intuitive set of controls to grab, spin and manipulate items, or what Microsoft calls “intuitive interactions.” Voice control has also improved with better sound localization.

“The first Hololens made you do these really awkward little pinching motions to select stuff,” said Bohn. “The new one can see how you actually articulate your fingers, so you can just naturally grab objects and resize them and move them around. If you see a button, you can just push it.”

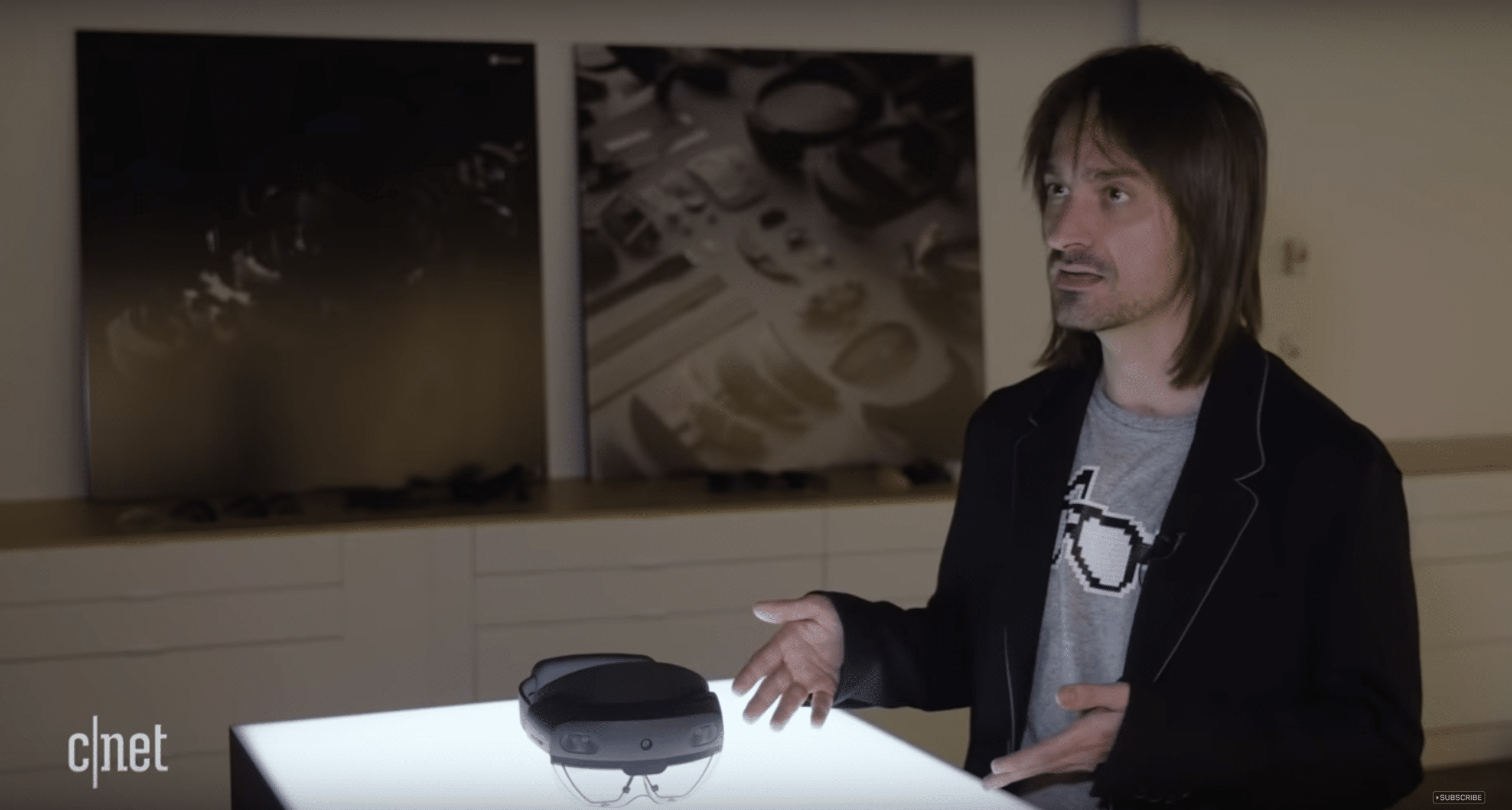

Speaking of hands, Hololens 2’s hand tracking eschews hand controllers like the one Magic Leap uses. Kipman isn’t opposed to hand controllers and treats user demand as the ultimate guide. In testing, controllers didn’t add much to the UX, though Microsoft does have the technology for them.

“We don’t have any dogma that you can’t have something on your hands,” he told CNET (video below). “In fact, in our VR headsets, we have [controllers] that work with the same sensor set that ships on a Hololens. It’s absolutely in our roadmap to think about holding things in the hand.”

As for other biometric inputs, eye tracking is another area where Hololens 2 has been improved. Kipman foresees representing our personalities in virtual environments by sensing not only eye movement but the expressive facial areas that sit under a head-worn device.

“Think of it as another human signal in our quest to fundamentally understand people in a natural and instinctive way,” said Kipman. “The motion of your eyes and this area of your face, there’s so much signal there for us to mine to create more immersive and more comfortable experiences.”

Lastly, who are the intended users for Hololens 2? Much like with Hololens 1 (but more explicitly this time), Microsoft is sticking to its DNA in targeting enterprise use cases. But Hololens 2 expands beyond the company’s traditional PC market by addressing the majority of workers who don’t use computers.

“If you think about seven billion people in the world, people like you and me — knowledge workers — are by far, the minority,” said Kipman. “The majority [are] fixing jet-propulsion engines, maybe they’re the people in retail space, maybe they’re the doctors that are operating on you.”

As for when and if this will ever be targeted to consumer markets, that will require a few more innovation cycles. Kipman acknowledges that consumer markets have higher standards, such as size, weight and stylistic considerations. And we’re not there yet with headworn AR.

“These devices need to become more comfortable. They need to become more immersive and ultimately have more value-to-price ratio before they become a consumer product,” said Kipman. “There’s a threshold in the roadmap when there’s enough immersion, enough comfort, enough out-of-box value when I’ll be happy to announce a consumer product. This is not it.”

See both videos below, embedded in the order they appear in the above narrative.


Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.

Header image credit: Microsoft