This article is the latest in AR Insider’s editorial contributor program. Authors’ opinions are their own. Find out more details, dynamics and submission guidelines here.

Who Will Own the Metaverse?

by Marko Balabanovic

It’s been called the AR Cloud by many, the Magicverse by Magic Leap, the Mirrorworld by Wired, the Cyberverse by Huawei, Planet-scale AR by Niantic and Spatial Computing by academics.

It’s a set of ideas straight out of science fiction, literally. “Cyberspace” was coined by SF author William Gibson. In his 1984 classic Neuromancer, characters entered a virtual reality world called “the matrix” (inspiration for the 1999 film of the same name by the Wachowskis). In Neal Stephenson’s Snow Crash of 1992, the internet has been superseded by the Metaverse, a multi-person shared virtual reality with both human-controlled avatars and system “daemons”. Augmented vision is commonplace in futuristic films, from Minority Report to Iron Man. When you’re “wearing” in Vernor Vinge’s 2006 book Rainbows End, contact lenses and computers woven into clothing serve up an assortment of competing realities and overlays. Teenagers craft and inhabit elaborate fantasy worlds, where shrubs have tentacles, modern libraries become ancient timbered halls, and bicycles are jackasses ridden by anime avatars.

Now, more than 30 years on from the earliest of these writings, companies are building the AR Cloud for real. Ori Inbar defines it as “a persistent 3D digital copy of the real world to enable sharing of AR experiences across multiple users and devices”.

Who will power this new internet? Who will profit? Will new tech giants emerge, or will the existing hegemony continue to rule?

This is a whirlwind tour of the potential layers and components of this new world, the companies competing in each, and the acquisitions and investments from the bigger players.

But first, what will the AR Cloud actually be used for? From the vantage of the early 90s and the first web pages, not even science fiction authors predicted Instagram influencers, a US president tweeting policy or 300 hours of video being uploaded to YouTube every minute – so we can only make some early guesses! Location-based games are already popular, with over a billion downloads of Pokemon Go in the three years since it launched, and over 20B kilometers walked playing the game. Its wizarding successor grossed $12M in its first month. AR is being applied in training and education, healthcare, heads-up wayfinding and navigation, tourism, retail, field service, real estate sales, design and architecture. These are currently simpler, single-person experiences, but all could evolve into AR Cloud applications, or an entirely new set of business models and experiences could emerge.

Data

Location is at the heart of the AR Cloud. Your experience is driven by where you are in the world, not just in terms of GPS coordinates but also your immediate surroundings, outdoor or indoor. What room are you in? What objects or other people are around you? What virtual avatars, creatures, information layers or interactive components are here?

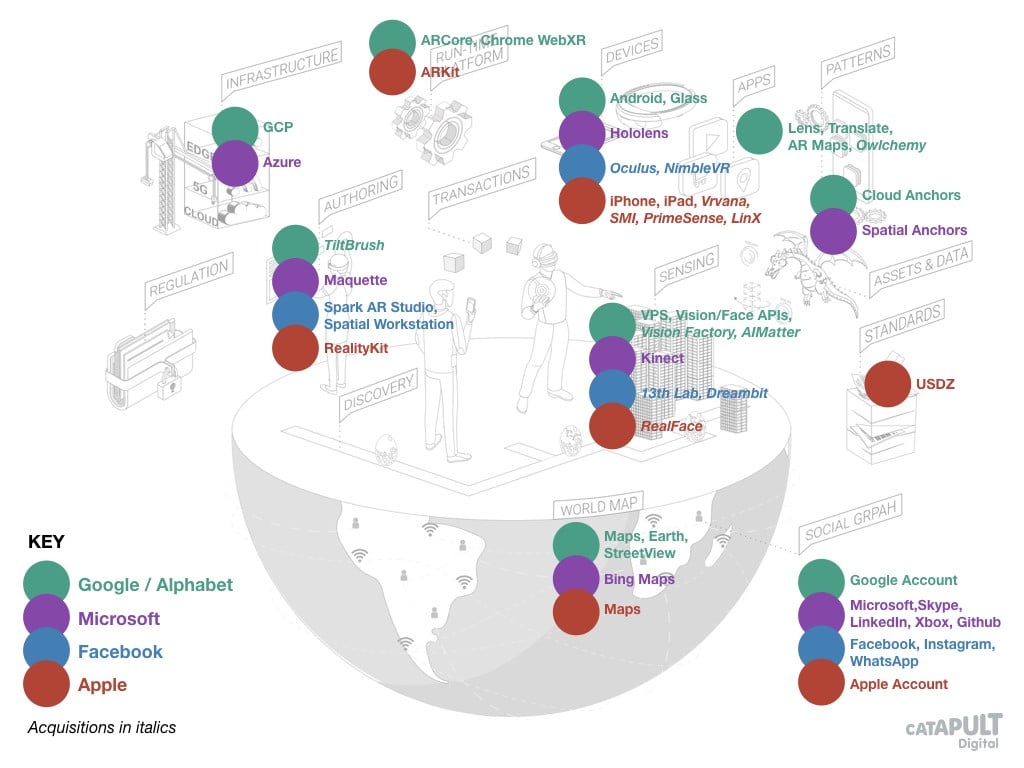

The World Map in this world therefore isn’t a 2D street map like we have with Google Maps or OpenStreetMap, nor is it a 3D map with terrain and building volumes. This is a hyper-local map containing the insides of rooms, street furniture, doorways, steps, surfaces and the connections between them: an insane level of detail! Insane, yet companies are mapping it already. The incumbents have an advantage with existing mapping such as Google Maps, Earth, Street View, Apple Maps and the Indoor Maps Program, and Bing Maps, but there are also significant geographic data players such as Foursquare and OpenStreetMap.

Accompanying this will be persistent, stateful geographic Assets and Data, some static, others interactive with behaviors of their own. They could be layers of information, such as instructions, directions, tourist information, information about objects in the location, or game characters, artworks or virtual scenes. They will form the Content of this new medium: media tethered to a physical location, available to be combined and experienced by people in the same area, in the same way that webpages today can combine assets from across the internet.
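As a rough illustration of what “media tethered to a physical location” might look like as data, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `AnchoredAsset` schema, the `AssetRegistry` class and its `publish` and `nearby` methods are hypothetical names, not any company’s actual API, and a real system would need centimeter-level positioning and spatial indexing rather than a GPS-style distance check.

```python
import math
from dataclasses import dataclass, field


@dataclass
class AnchoredAsset:
    """A piece of content tethered to a physical location (hypothetical schema)."""
    asset_id: str
    lat: float   # latitude in degrees
    lon: float   # longitude in degrees
    kind: str    # e.g. "tourist_info", "artwork", "game_character"
    state: dict = field(default_factory=dict)  # persistent, mutable state


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    r = 6_371_000  # mean Earth radius in meters
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


class AssetRegistry:
    """Toy in-memory registry of location-anchored assets (illustrative only)."""

    def __init__(self):
        self._assets = {}

    def publish(self, asset):
        # Re-publishing the same asset_id overwrites the earlier entry.
        self._assets[asset.asset_id] = asset

    def nearby(self, lat, lon, radius_m):
        """Return assets within radius_m of the given point, nearest first."""
        hits = [(haversine_m(lat, lon, a.lat, a.lon), a) for a in self._assets.values()]
        return [a for d, a in sorted(hits, key=lambda h: h[0]) if d <= radius_m]


registry = AssetRegistry()
registry.publish(AnchoredAsset("statue-info", 51.5007, -0.1246, "tourist_info"))
registry.publish(AnchoredAsset("dragon", 51.5008, -0.1247, "game_character", {"asleep": True}))
registry.publish(AnchoredAsset("far-away", 48.8584, 2.2945, "artwork"))

found = registry.nearby(51.5007, -0.1246, radius_m=50)
found_ids = [a.asset_id for a in found]
```

Two people standing in the same spot would run the same query and see the same assets, which is the sense in which the content is shared; the `state` field stands in for the interactive behaviors described above.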

Companies hoping to own aspects of AR Cloud data include Scape, building centimeter-level positioning, or ContextGrid, building an open registry for AR assets in locations. Both Azure Spatial Anchors and Google Cloud Anchors are leveraging existing strengths in mapping towards the AR Cloud.

Interlinked with all of this is a Social Graph, with the identities of people and the connections between them. Incumbents like Facebook, Google, Apple, Microsoft and Snap already own global social graphs, but multiplayer gaming behemoths like Sony, Activision Blizzard or EA could look to unseat them, as could 3D-specific ideas like Aura’s “avatar as a service”.

Stack

What kind of devices and software will we need to participate in this AR Cloud?

Starting with the Device itself: today we use smartphones, and tomorrow we may be using some kind of headset, glasses or audio-only wearable, with different kinds of control or head/gaze tracking. An Apple AR headset has been a mainstay of the rumor mills for several years, and we expect Facebook to be creating an AR Oculus device. In the meantime we already have iOS and Android smartphones and tablets, Microsoft HoloLens, Google Glass and many others such as Magic Leap, North, Vuzix, Vrvana (acquired by Apple), SMI (same), Snap Spectacles (via the acquisition of Vergence), Bose, Meta View, nReal, DreamGlass, Epson, Rokid (working with the UK’s WaveOptics), Daqri and RealWear. We’ve also seen smartphones with depth cameras, for instance from Lenovo and Asus with Google’s pre-ARCore Tango technology.

On the device we expect some kind of real-time Run-Time Platform to power AR Cloud applications. In one scenario this may be some kind of AR browser, equivalent to today’s web browsers, like WebXR running on Chrome. In another scenario, we may see game engines dominant, like Unity or Unreal. On iOS and Android we have ARKit and ARCore, and there are also long-standing AR platforms like Wikitude. Some recent acquisitions include AltspaceVR (Microsoft), and Escher (Niantic).

Sensing the world around you will be essential for AR Cloud applications, both for mapping and localization and for detecting objects, environments or other people. This is one of the key new capabilities required, and it builds upon advances in deep learning and computer vision (Benedict Evans writes well about the camera as an input method), as well as localization and mapping techniques developed for mobile robots, initially running on powerful smartphones. It is a hotly contested market, with an explosion of startups and acquisitions, aiming at one of two nascent markets with similar sensing needs: the AR Cloud and autonomous vehicles.

It is tricky to categorize the many overlapping innovators in this space. A prominent company is 6D.ai, and some other recent examples are:

Simultaneous Localisation and Mapping (SLAM): Visualix, Kudan, Jido, Augmented Pixels, Blue Vision Labs (acquired by Lyft), Dent Reality, Fantasmo, Sturfee, Insider Navigation, Immersal, Scape, Google’s Visual Positioning System, 13th Lab (acquired by Facebook), Infinity AR (acquired by Alibaba).

Vision processing (object or person detection, 3D occlusion, image manipulation and related capabilities): Flyby (acquired by Apple), Regaind, Dreambit (acquired by Facebook), RealFace (acquired by Apple), Matrix Mill (acquired by Niantic), Obvious Engineering (acquired by Snap), Cimagine (acquired by Snap).

There are also the underlying depth mapping systems such as Intel RealSense, Microsoft Kinect, Leap Motion, Occipital, Apple TrueDepth (with related acquisitions like PrimeSense and LinX), Nimble VR (acquired by Facebook), and Apple’s predicted move into ultra-wideband spatial sensing with the U1 chip announced as part of the iPhone 11 launch.

AR Cloud systems will connect to Infrastructure and networks, and when operating at scale will impose huge requirements in terms of bandwidth, latency and local processing (on devices themselves as well as the edge of the cloud). This is where the capabilities of future 5G networks will come into their own, including the mobile edge computing standards. But we’d also expect to see the existing edge providers such as Cloudflare, Fastly, Akamai, the big cloud providers like Amazon, Google, Microsoft and a host of open-source tools for automation, deployment, caching, federated learning, serverless computing and so on. The idea of hyperlocal maps and meshes will fit naturally into the distributed world of edge storage and computing. Specialized distributed platforms serving the AR Cloud may also emerge, such as youAR for publishing and deploying localized AR content or Improbable’s SpatialOS enabling multiplayer experiences.

The AR Cloud apps, layers and objects that exist within the stack we’ve just described could be served globally from a few closed central systems, using distributed infrastructure. Think massively multiplayer games like Fortnite running over AWS. Or one can imagine a world where anyone is free to develop and host AR Cloud content, using open standards and protocols, potentially just serving their own local areas.

Tools

The ecosystem around the AR Cloud has to include tools for Authoring: applications, behaviours, creation of assets and environments. We’d expect the big platforms like Unity, Unreal and Autodesk to continue their dominance and innovate with projects like EditorXR or MARS, but also to see more specific offerings emerge such as Torch, Sketchbox, Dotty AR for sharing models, Cognitive3D for analytics, Anything World to add voice interaction or Blippar’s Blippbuilder. The big tech players will entice developers with tools like RealityKit (Apple), Maquette (Microsoft), Spark AR Studio (Facebook), Spatial Workstation for immersive audio (Facebook via acquisition of Two Big Ears), Lens Studio (Snap) and Tilt Brush (Google). Facilities like Digital Catapult’s volumetric capture studio Dimension will enable the filming and creation of assets.

Once you’ve created content or an application, how will people discover it? Tools for Discovery will have to emerge, the equivalent of today’s app stores or search engines, but perhaps we are still too early for them.

Finally, what kinds of Transactions will happen in the AR Cloud? ARtillery Intelligence predicts $14B of consumer AR revenues by 2021, with only around $2B of that from hardware sales. The AR Cloud will enable the sale of apps or layers of content, marketplaces for assets or avatars, trading between users, ownership of real-world locations and of course advertising. Companies exploring this world include Arcona, Darabase, AR Grid.

Context

The AR Cloud will happen within a broader context. An obvious consideration is Regulation. How can we ensure people are safe, if they’re playing a game tempting them to visit locations in the real world? Are some publicly accessible locations inappropriate or offensive? (2016 saw complaints of Pokestops in graveyards, hospitals, churches and memorials.) Does my ownership of land or property or landmarks in the real world translate to the AR Cloud, and if so is an AR app liable if it encourages users to physically trespass, or even if it just adds virtual content? Are there new privacy considerations if everyone’s device has biometrics and cameras that are constantly scanning, or even running face recognition? The world’s legal systems may take time to catch up. At the moment there is just the start of public discourse, such as the “mirrorworld bill of rights”, the recent XR Privacy Summit, or this AR Privacy and Security explainer from W3C working groups.

Alongside will come Standards to help speed development and interoperation. We already have WebXR from W3C, OpenXR from Khronos, the USDZ file format from Apple and Pixar, 5G MEC from ETSI, ARML from OGC and ARAF from MPEG, but it is early days yet. There’s a problematic proliferation of formats for 3D models like glTF, FBX, OBJ and COLLADA. We are seeing industry groups like Open AR Cloud campaigning for interoperability.

And finally, we’ll have Patterns and conventions. How will we interact? Will we standardize gestures or specific affordances? Will there be regularly used icons or representations? What will be the social conventions, in both the real and virtual worlds? Matt Miesnieks has suggested parallels such as recording indicators (already implemented on Snap Spectacles) or audible clicks when taking photos (mandatory in some countries like Japan). Will we have the equivalent of robots.txt files, to control the scanning of your physical property, as opposed to controlling whether search engines can index your web site?

Putting it All Together

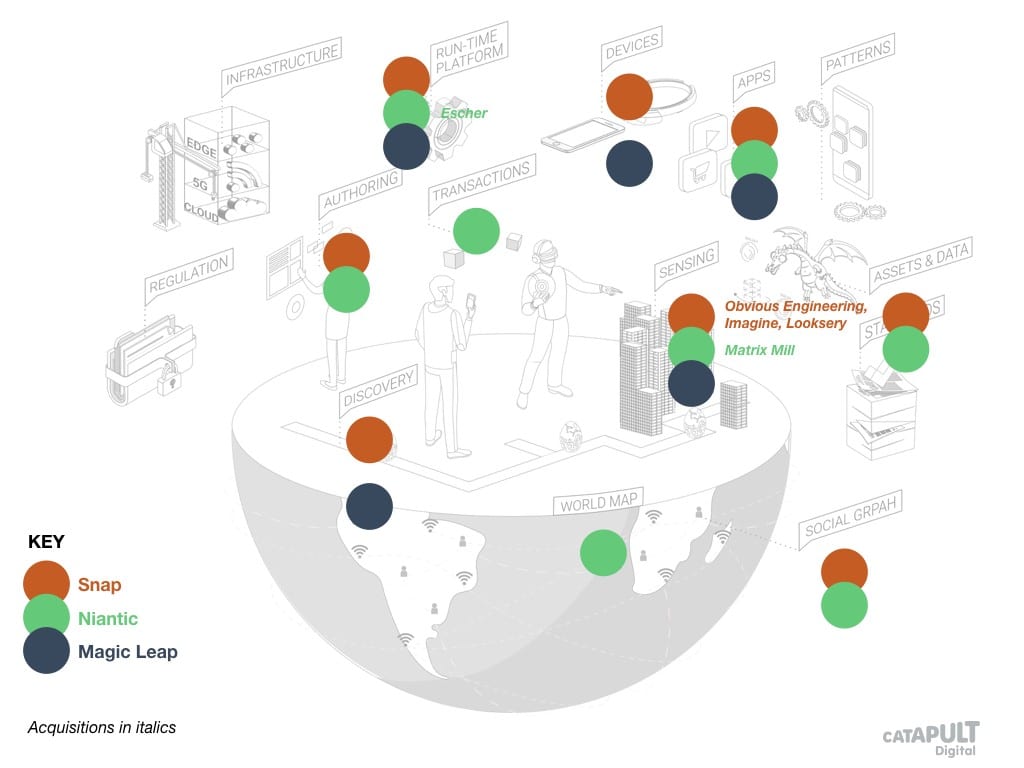

The organization that has pioneered the AR Cloud at the most impressive scale is Niantic, with Pokemon Go and more recently Wizards Unite, based on their Real-World Platform. Alongside Niantic, we’d consider Snap, whose Snapchat lenses have been a hugely successful deployment of AR technology across a broad audience (lenses are the biggest source of AR advertising revenue), and who are venturing further with “landmarkers” lenses over well-known landmarks. Then there are the upstarts, large and small, growing quickly across the territory. Magic Leap are the highest-profile, with both a device and a platform, and more recent entrants include Ubiquity6, AIReal and Placenote. We can visualize how Snap, Niantic and Magic Leap provide many of the AR Cloud ingredients across this landscape.

Among the tech giants, we can see that Google, Apple, Microsoft and Facebook all have products, services or acquisitions covering most of the ingredients we’ve identified, and are all well placed to move swiftly into an AR Cloud future and create new end-to-end offerings.

Summary

Rudimentary augmented reality has been possible on widely available smartphones since 2008, when the first Android device launched, with both GPS and a compass. It was my first involvement: at lastminute.com labs back then we launched one of the first apps. So what’s different now? Four things:

1. Today’s devices have great cameras and massive compute power

2. Companies are launching advanced algorithms for centimeter-level mapping and localization, even without specialized depth cameras

3. Modern deep learning allows us to efficiently detect and understand scenes, objects or people

4. Today’s cloud and networking infrastructure enables massively scaled, global multi-person experiences, and the move to 5G will provide a further boost

AR headsets and glasses are on their way but we can see much of the potential already with smartphones. It is time for the AR Cloud to move from science fiction into reality. It is no coincidence that science fiction author Neal Stephenson is now also Chief Futurist at Magic Leap. We are looking at what could be the next web, with an entirely new set of interactions, businesses, behaviors, platforms, social, legal and ethical norms. In this article, I’ve shown that Google, Facebook, Microsoft and Apple are already heavily invested as we move into this new world, and are continuing to make acquisitions and build services in a bid to dominate. Companies like Niantic, Magic Leap and Snap are looking to unseat them, and startups like 6D.ai to own crucial territory such as hyper-local 3D world maps. The crucial question is: will it develop in an open way, as the web did originally, or as a series of proprietary closed systems?

Marko Balabanovic is CTO at Digital Catapult and non-exec director at NHS Digital

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.