“Trendline” is AR Insider’s series that examines trends and events in spatial computing, and their strategic implications. For an indexed library of spatial computing insights, data, reports and multimedia, subscribe to ARtillery PRO.

A realization has begun to sink into the spatial computing sector. After a few years of excitement over the impending era of world-immersive AR, there’s a growing consensus that the technology is still years from bringing that dream to a pair of glasses that most people will wear.

This comes down to a classic design tradeoff. Visually immersive and contextually aware AR glasses like Magic Leap One and Hololens 2 require optics whose power consumption and heat dissipation necessitate bulky headgear, rather than anything you’d consider “eyewear.”

At the other end of the spectrum are the likes of North Focals, which lack immersion and contextual awareness but are stylistically viable (though North’s challenges and sale to Google should be noted). Between these endpoints is a sliding scale where we see glasses like nReal Light.

The Next Mobility

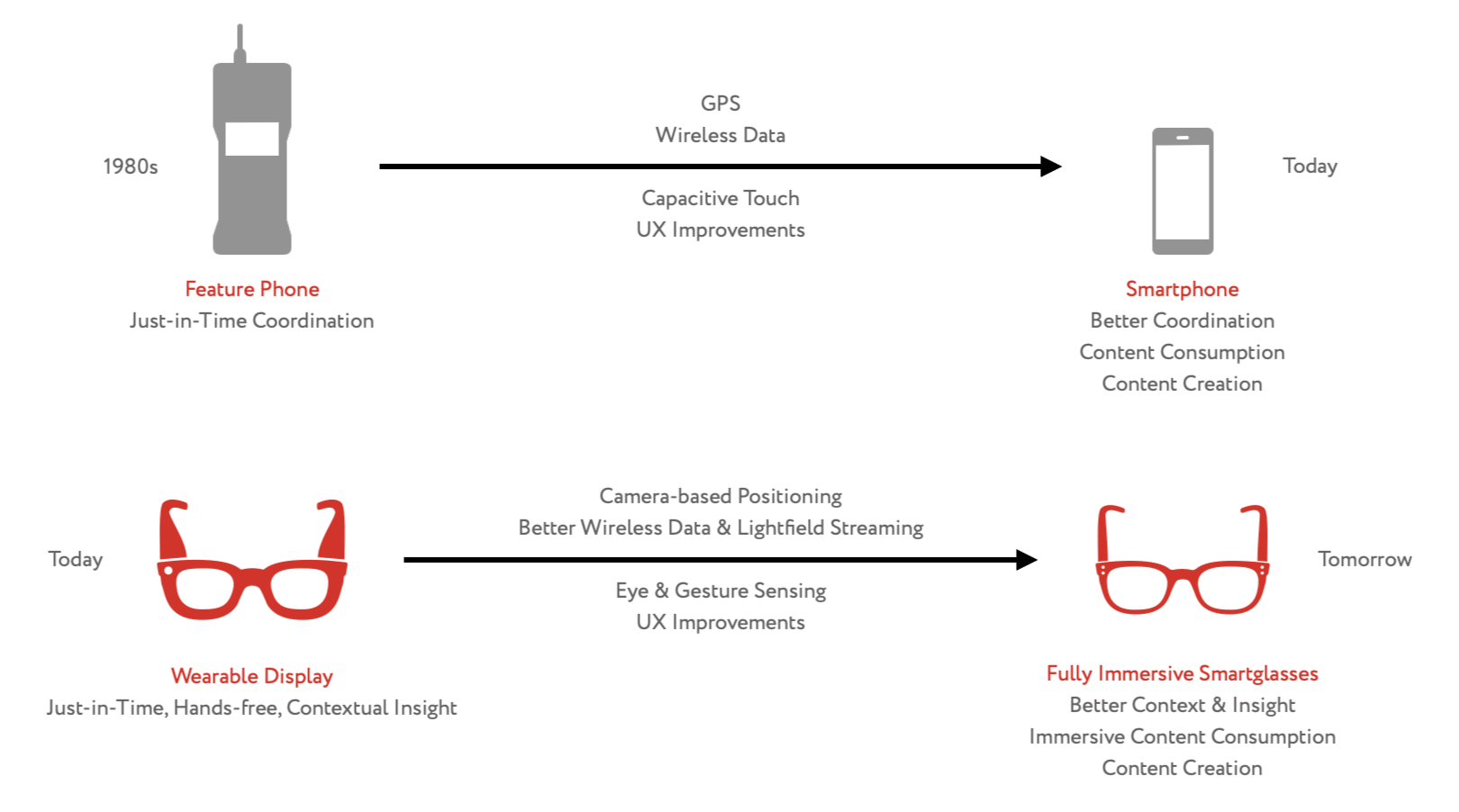

The North approach could be right for today’s AR glasses when product evolution is viewed in light of historical examples. Consider the original iPhone, which launched with relatively few features and apps, no GPS, a low-quality camera, and other gaps that were gradually filled.

Going back farther, smartphones didn’t start with the iPhone, as earlier iterations from RIM and Windows Mobile let you make calls and send e-mail on the go. And feature phones before them gained rapid ubiquity with the simple value proposition of letting you make calls anywhere.

Throughout this progression, there was one common point of value: mobility. Whether it’s calls, texts, email, web browsing, summoning Uber or swiping Tinder, mobility has endured as the core value proposition, albeit increasingly dressed in incremental value over time.

Applying that principle back to AR, could wearability be the next era’s mobility? And if so, should it be the V1 design target for AR glasses, which then evolve over time towards advanced AR functionality, rather than starting with advanced AR and sizing down over time towards wearability?

Signals in today’s market support this play. The latest Apple-glasses rumors suggest regular glasses that eschew a Magic Leap-style AR experience in favor of simpler optical enhancements, like helping you see better or Doug Thompson’s “Pop Principle.”

Design Target

These concepts have been bouncing around in industry discussions, events and ongoing rhetoric of late. But for us, they coalesced during a recent discussion with Ostendo VP Jason McDowall. Many of you may know McDowall as producer and host of the AR Show Podcast.**

He approaches this discussion in light of the novel display and optics that Ostendo develops. With the wearability principle in mind, Ostendo is betting that a sleeker form factor is what lets AR glasses emerge and scale in the near term, because it makes them viable for mainstream consumers.

On the enterprise side, McDowall believes AR glasses will verticalize to some degree. In other words, one monolithic Hololens that’s used across manufacturing, medicine and education, could give way to glasses that are streamlined and purpose-built for the nuances of each field.

Whether it’s consumer or enterprise, the point is that all-day wearable displays need to have UX endpoints well in mind, as they impact key decisions. In other words, technical approaches can deviate widely in the quest to manipulate photons to render imagery optimally to the human eye.

“We’re fundamentally providing a set of capabilities that unlocks the level of wearability that’s not yet been achievable,” said McDowall. “And it’s based on the way that we’re generating these photons, and conditioning and preparing them to be used within the context of AR glasses.”

Tech Stack

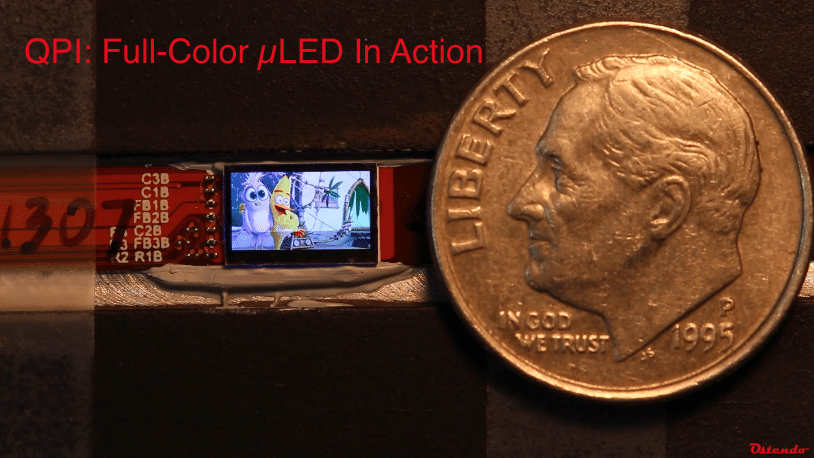

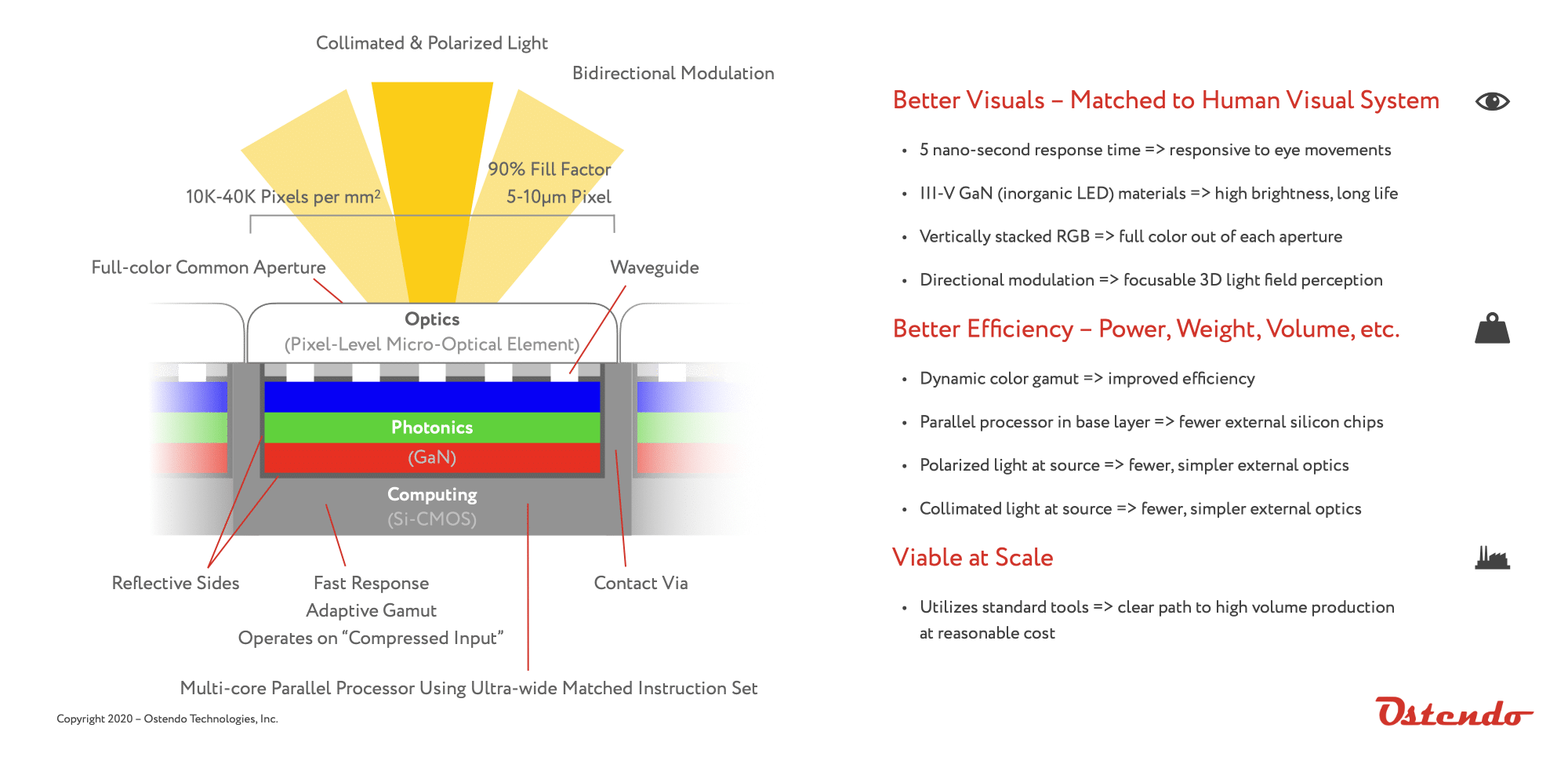

Diving one level deeper, one of Ostendo’s differentiators is LED micro-displays* whose panels vertically stack red, green and blue pixels, rather than place them side by side. This lets it fit more pixels onto a display and achieve full-color and higher pixel density with less volume.

“There’s a huge advantage to having them vertically stacked when making tiny pixels,” said McDowall. “Because the display is so close to your face, you need densely packed pixels to generate an image that matches human visuals. Vertically stacking also achieves more efficient integration with optics.”
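To put rough numbers on the stacking argument, here is a minimal back-of-the-envelope sketch. It assumes a simple stripe layout for the side-by-side case (three emitters across one full-color pixel) and uses the footnote's 10-micron figure as a hypothetical emitter width. Real side-by-side layouts vary, and none of these values are Ostendo specifications.

```python
MICRONS_PER_INCH = 25_400  # 1 inch = 25.4 mm = 25,400 microns


def pixels_per_inch(pixel_pitch_um: float) -> float:
    """Convert a full-color pixel pitch in microns to pixels per inch."""
    return MICRONS_PER_INCH / pixel_pitch_um


def full_color_pitch_um(emitter_width_um: float, stacked: bool) -> float:
    """Pitch of one full-color pixel along one axis.

    Assumption (ours, for illustration): stacked R, G and B share a single
    emitter footprint, while side-by-side places three emitters in a row.
    """
    return emitter_width_um if stacked else 3 * emitter_width_um


# Hypothetical emitter width, borrowed from the "<= 10 micron" footnote.
emitter_width_um = 10.0

stacked_ppi = pixels_per_inch(full_color_pitch_um(emitter_width_um, stacked=True))
side_by_side_ppi = pixels_per_inch(full_color_pitch_um(emitter_width_um, stacked=False))

print(f"stacked:      {stacked_ppi:.0f} PPI")
print(f"side by side: {side_by_side_ppi:.0f} PPI")
print(f"linear density gain: {stacked_ppi / side_by_side_ppi:.1f}x")
```

Under these assumptions, stacking triples linear pixel density for the same emitter size. The actual gain depends on the subpixel arrangement it is compared against.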

Speaking of efficient integration, Ostendo places a graphics processing unit (GPU) on the base plane of the display panel. This achieves efficiencies that aren’t seen when a GPU sits further upstream to process graphics and pass the result downstream to be channeled into a display.

The upstream GPU still exists here, but the additional computing layer on the display allows it to be responsive to eye movement. This lets the display operate on compressed input, rather than driving all pixels at full color all the time, which is a waste (read: more heat & hardware bulk).

This is analogous to a system-on-a-chip architecture that achieves greater efficiencies in your smartphone by integrating the GPU, CPU, modem, etc. Ostendo similarly combines the functions needed to process full-color light into a single chip to match the capabilities of the human visual system.

“One key to crafting a truly wearable display is recognizing that the goal is to couple information ultimately to our brain,” said Ostendo founder & CEO, Dr. Hussein El-Ghoroury. “To do this, we have to match the movements and capabilities of the human visual system, and only put visual information where it’s useful.”
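One common way to "only put visual information where it's useful" is gaze-contingent (foveated) rendering, where full detail is reserved for the small region the eye is looking at. The article doesn't confirm that this is Ostendo's method, so treat the sketch below as an illustration of the general idea. The resolution, frame rate, fovea share and periphery scale are all hypothetical values chosen for easy arithmetic.

```python
def pixel_data_rate(width: int, height: int, bits_per_pixel: int, fps: int) -> float:
    """Bits per second needed to drive every pixel at full color."""
    return width * height * bits_per_pixel * fps


def foveated_data_rate(
    width: int,
    height: int,
    bits_per_pixel: int,
    fps: int,
    fovea_fraction: float,
    periphery_scale: float,
) -> float:
    """Bits per second when only the gaze region gets full detail.

    fovea_fraction:  share of the panel area sent at full detail.
    periphery_scale: share of pixel data kept for the rest of the panel
                     (0.25 means one quarter of the original pixel count).
    """
    total_pixels = width * height
    fovea_pixels = total_pixels * fovea_fraction
    periphery_pixels = total_pixels * (1 - fovea_fraction) * periphery_scale
    return (fovea_pixels + periphery_pixels) * bits_per_pixel * fps


# Hypothetical panel: 2000 x 2000 pixels, 24-bit color, 60 frames per second.
full_rate = pixel_data_rate(2000, 2000, 24, 60)
gaze_rate = foveated_data_rate(2000, 2000, 24, 60, fovea_fraction=0.1, periphery_scale=0.25)

print(f"full drive: {full_rate / 1e9:.2f} Gbit/s")
print(f"foveated:   {gaze_rate / 1e9:.2f} Gbit/s")
print(f"share of full-drive data: {gaze_rate / full_rate:.1%}")
```

With these made-up inputs, the display handles about a third of the full-drive data. That reduction is the kind of saving that translates into less heat and less hardware bulk.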

Smarter Glasses

All of this is in the company’s DNA, given El-Ghoroury’s background — everything from military-grade satellite comms, to consumer cellular tech, to being CTO of IBM Microelectronics. From that, he’s developed a keen eye for mobility’s evolutionary path towards wearability.

Speaking of evolution, Ostendo has its eye on AR’s long-term future, in addition to the critical elements needed to achieve near-term wearability. McDowall identifies three pillars that uphold AR’s viability as the next personal computing paradigm: just-in-time, hands-free, and contextual insight.

The hands-free part we’ve covered in the wearability principle. Contextual insight is the “defining lever of value,” says McDowall, and involves situationally aware content that can be visual, audible or haptic. And the just-in-time part is about having it delivered intelligently when it’s needed.

McDowall and company are confident we’ll get there, and have that future baked into the road map. The next few years could even see important milestones towards the holy grail: a device that’s both wearable and fully immersive beyond what Hololens or Magic Leap deliver today.

But running that marathon requires revenue to reinvest (the anti-Magic Leap), hence wearability as a near-term design priority. Here, irony lies in the term “smart glasses” itself. Do we first need smarter glasses… as in slightly smarter, tech-fueled versions of today’s corrective eyewear?

“A good way to get consumer wearable displays into the mainstream is to start with the functionality of glasses,” said McDowall, “and make them a little bit better… Glasses+”

For more technical detail, see McDowall’s presentation below, derived from his talk at AWE; and see today’s article by Charlie Fink, further examining Ostendo’s approach.

*inorganic LED micro-displays with ≤ 10 micron pixel pitch.

**AR Insider has a content distribution partnership with the AR Show, but no money changes hands for that partnership nor the production of this article. See AR Insider’s Disclosure & Ethics Policy here.