When looking at the technologies along the spatial spectrum, from AR to VR, one promising endpoint is holograms: three-dimensional projections that don’t require a headset. The classic example is Princess Leia’s hologram in the opening scenes of Star Wars: Episode IV.

Though that occurs fictionally in a galaxy far, far away, there are glimmers of hope 😉 today, most notably Looking Glass Factory. For those unfamiliar, the company develops lightfield displays: small boxes that project 3D images, which appear to come to life and respond to hand gestures.

After launching its smaller and more accessible Looking Glass Portrait last month, the company this week announced a new content creation engine. It lets users generate holographic content from existing digital photos, a move that will unlock a large supply of content for the device.

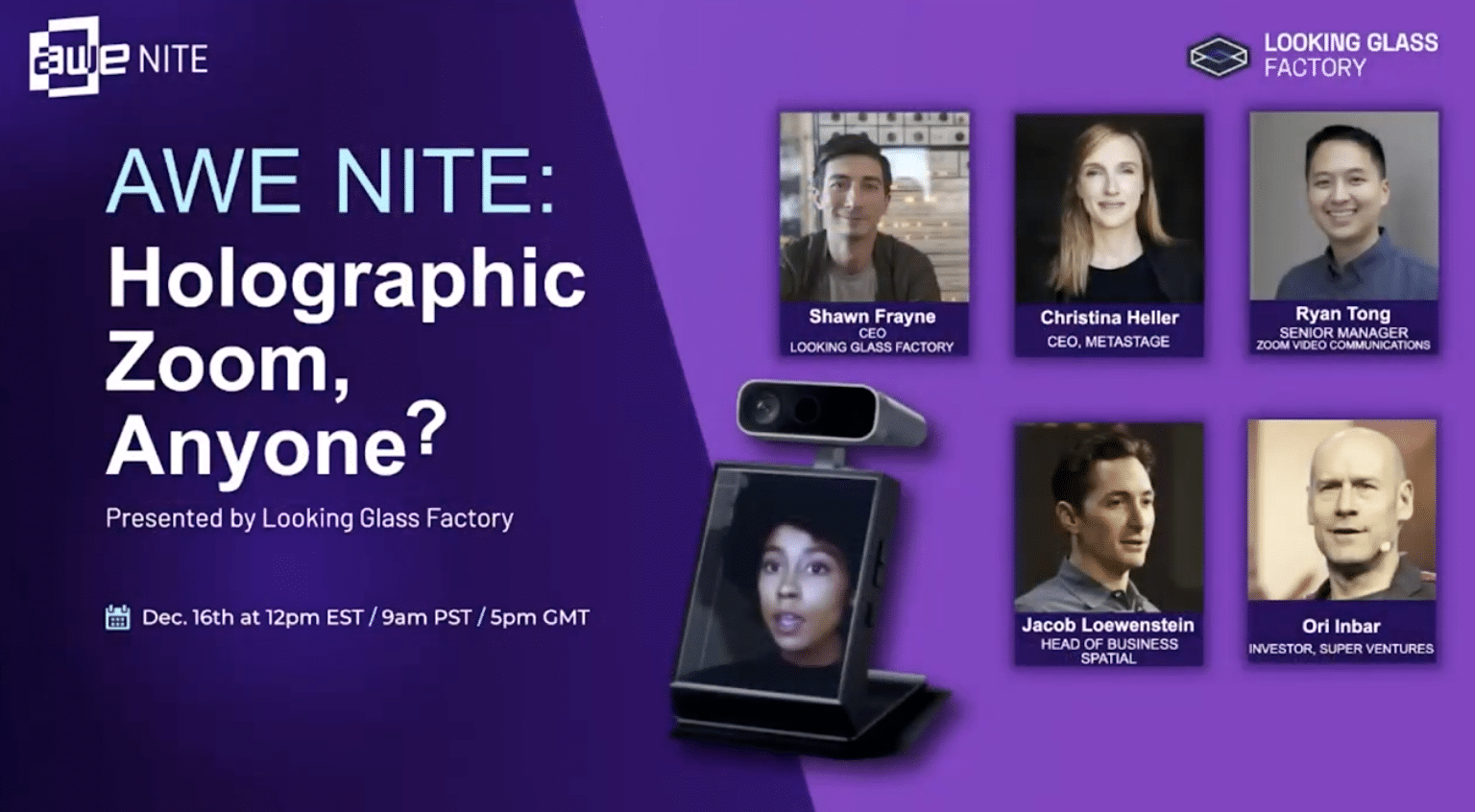

Aside from the cutting-edge holography that Looking Glass develops (more on that in a bit), we’re seeing innovation around 3D virtual interaction, which of course resonates during global lockdowns. This was the topic of a recent AWE Nite NYC event (video and takeaways below).

An Ecosystem is Born

One thing evident about spatial computing, and its eventual manifestations in holography, is that it requires an ecosystem and a value chain. As in several content-based sectors, that stack includes platforms, creation tools, delivery platforms, app layers and, of course, hardware.

Taking this batch of speakers as an example, Spatial represents the app layer with a tool that enables synchronous 3D meetings and interactions. Zoom is similarly at the app layer, though it’s expanding into more of a platform. Looking Glass is a vertically integrated hardware play.

Metastage represents the content creation segment of that stack with a capture studio for 3D assets and live-action characters. Even within that segment, there are different use cases and budget ranges, with Metastage representing the high end of professional motion capture.

Metastage CEO Christina Heller believes this area will be democratized to some degree, but that there will always be a need for the high end. This is analogous to how Zoom has democratized telepresence while professionals still require high-end gear and practices for remote interviews.

Coexisting Technologies

This theme of coexisting technologies applies elsewhere. Spatial’s Jacob Loewenstein and Zoom’s Ryan Tong acknowledge that situational variables call for Zoom’s dead-simple UX in some cases and Spatial’s deeper interaction in others. The two may also converge over time.

For example, a core Spatial design principle is to maximize its addressable market by accommodating a wide range of users and hardware. So it has walked a fine line with a user experience that is both graphically rich and accessible to a wide range of people and devices.

The coexistence principle likewise applies to hardware. Looking Glass Factory’s Shawn Frayne sees a future that involves AR and VR headsets, but not as ubiquitous all-day devices. That world leaves room for the hardware road map he’s developing, which will be more display-based.

He points to a Steve Jobs quote that likens various computing approaches to the variety of transportation formats. Sometimes you need a truck, sometimes you need a car, and other times you need a motorcycle. Each serves a different purpose, as will spatial computing endpoints.

Chicken & Egg

Going deeper on Looking Glass, the company announced a content creation engine this week as noted. Known as HoloPlay Studio, it will convert users’ existing 2D photos into holographic content that can be viewed on Looking Glass devices, including the Kickstarter-propelled Portrait.

This one-two punch makes Looking Glass more accessible and usable. The cheaper and smaller Portrait covers accessibility, while more content covers usability. Content, of course, is one side of the classic chicken-and-egg dilemma that challenges new technologies such as VR.

Before HoloPlay Studio, Looking Glass was compatible with pictures taken with Apple’s depth cameras, including Portrait Mode photos. Because Apple creates depth maps for these shots, Looking Glass can reverse-engineer them in order to render dimensionality.
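The article doesn't detail how Looking Glass turns a depth map into dimensionality. As a rough illustration only, the general technique of depth-image-based rendering can be sketched: each pixel is shifted sideways in proportion to its depth, producing slightly different viewpoints of the same photo, which is the kind of multi-view set a lightfield display shows. The function names, the 0-to-1 depth convention (1.0 = nearest) and the `offset` parameter are hypothetical assumptions, not Looking Glass's or Apple's actual pipeline or API.

```python
def synthesize_view(image, depth, offset):
    """Forward-warp one virtual viewpoint from an image plus its depth map.

    Hypothetical conventions: image and depth are 2D lists of equal shape,
    depth values lie in [0, 1] with 1.0 meaning nearest to the camera, and
    offset is the horizontal pixel shift applied to the nearest surfaces.
    """
    height, width = len(image), len(image[0])
    out = [[None] * width for _ in range(height)]
    zbuf = [[-1.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            d = depth[y][x]
            # Nearer pixels shift farther; that difference is the parallax cue.
            nx = x + round(offset * d)
            if 0 <= nx < width and d > zbuf[y][nx]:
                zbuf[y][nx] = d  # when pixels collide, keep the nearer surface
                out[y][nx] = image[y][x]
        # Fill disocclusion holes with the nearest filled pixel to the left
        # (or the source pixel if the hole is at the start of the row).
        last = None
        for x in range(width):
            if out[y][x] is None:
                out[y][x] = last if last is not None else image[y][x]
            else:
                last = out[y][x]
    return out


def synthesize_views(image, depth, num_views=5, max_offset=2):
    """Render a fan of views with offsets evenly spaced in [-max_offset, max_offset]."""
    if num_views == 1:
        return [synthesize_view(image, depth, 0)]
    step = 2 * max_offset / (num_views - 1)
    return [synthesize_view(image, depth, -max_offset + i * step)
            for i in range(num_views)]


if __name__ == "__main__":
    img = [[10, 20, 30, 40]]
    dep = [[0.0, 1.0, 0.0, 0.0]]  # the second pixel is "near", the rest "far"
    for view in synthesize_views(img, dep, num_views=3, max_offset=1):
        print(view)
```

The z-buffer check keeps the nearest surface when two pixels land in the same spot, and the left-neighbor fill is the simplest possible way to patch the gaps that open up behind near objects; production systems typically use smarter inpainting and, for ordinary photos without a depth camera, must estimate the depth map first.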

That mission advances with HoloPlay Studio, which extends similar functionality to any photo. Looking forward, capabilities will be further unlocked as LiDAR phases into commodity mobile hardware. This primes Looking Glass to gain value as the underlying hardware evolves.

In fact, Looking Glass’s trajectory makes it a possible acquisition target for Apple. It’s headed towards live-streaming 3D video capture and display, which is a more photorealistic version of what Apple does today with Memoji. And lightfield displays could be on Apple’s road map.

We’ll be watching closely for clues that point in that direction. Meanwhile, check out the full AWE Nite session below, which goes deeper with each of the compelling speakers referenced above.