The biggest advancement in AR in 2020 was arguably Apple’s iPhone LiDAR camera. Though it’s only available on the iPhone 12 Pro and Pro Max, LiDAR will trickle down to the rest of the iPhone lineup in the coming years, which has big implications for AR’s future.

LiDAR has mostly been touted by Apple as enhancing iPhone photography — a big competitive differentiator and marketing focus for smartphone players these days. But the spatial computing world has its eyes on other outcomes, as LiDAR unlocks more robust and user-friendly AR.

This was the topic of a recent AWE Nite NYC event. Once again, AR thought leader and community builder Ori Inbar assembled an astute lineup, this time to examine LiDAR dynamics and strategic implications. It’s also this week’s featured XR talk (video and takeaways below).

Early Mover

One of the first companies to take advantage of LiDAR iPhones was, not surprisingly, Snap. In fact, it was featured in Apple’s keynote to unveil the new camera. In short, LiDAR elevates capabilities in Snap’s Lens Studio, unlocking a new creative range for AR developers.

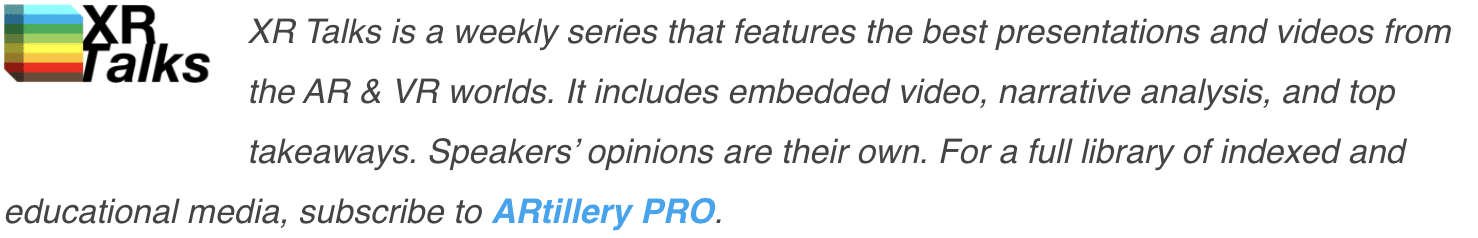

Specifically, Snap’s Qi Pan believes that LiDAR upgrades both underlying capability and user-friendliness. The former involves better object tracking, which translates to graphics that interact with real-world items in more realistic ways. It can especially boost these capabilities in low light.

That will engender several new use cases for Snapchat lenses. One, it means more indoor activations, such as augmenting your office or bedroom. Two, it brings lenses more to the rear-facing camera to augment the world, in addition to selfie lenses — a path Snap was already on.

As for user-friendliness, LiDAR can not only perform more accurate spatial mapping, but it can do so much faster than standard RGB cameras. To activate AR experiences, users don’t have to wave their phones around — a more approachable UX that could appeal to a wider user base.

Likely Candidate

Another likely candidate to benefit from LiDAR is Niantic. The company was already well-positioned given its 6D.ai acquisition — a company that engineered advanced spatial mapping capability in standard RGB cameras. So what additional benefits does LiDAR bring to Niantic?

According to go-to-market lead Meghan Hughes, it elevates the entire software stack. This includes being able to spatially map in low-light conditions, and better object recognition for “semantic understanding” (knowing that a couch is a couch and a chair is a chair).

This will advance the things that Niantic was already working on, including crowdsourcing spatial maps from its highly engaged user base. This involves Pokémon Go and Ingress players mapping public spaces while they’re playing, thus contributing to Niantic’s Real World Platform AR cloud.

LiDAR now equips them with a tool to get better scans, and with greater ease. Speaking of which, like Pan, Hughes believes that LiDAR’s benefits manifest in both capabilities and user-friendliness. The latter could attract more people to AR in general, which is good for the sector.

XYZ

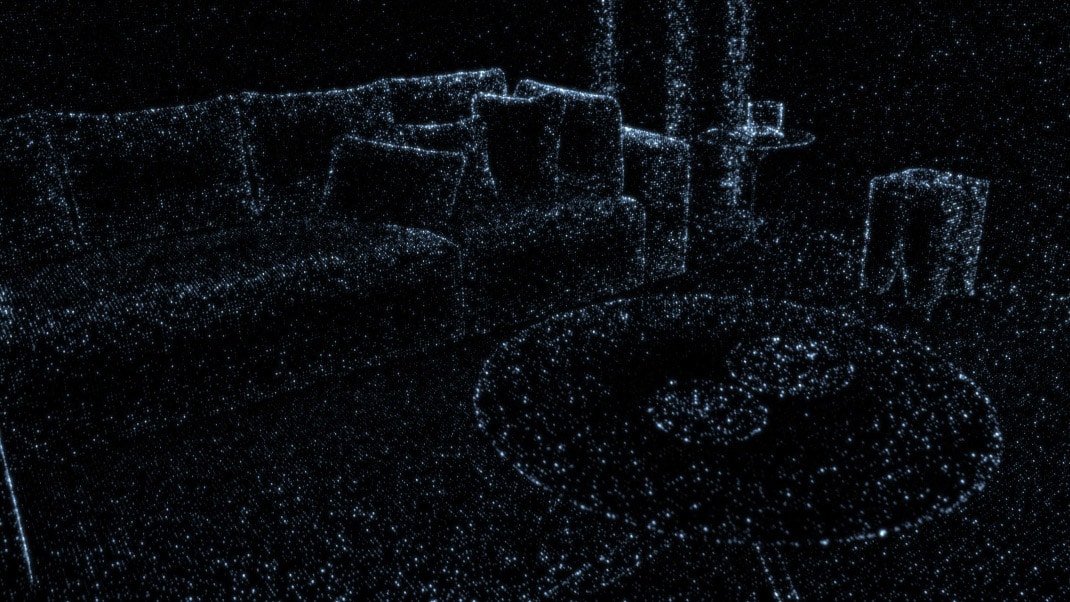

Yet another likely candidate for LiDAR’s benefits is Occipital. For those unfamiliar, Occipital is a longtime leader in mobile optical sensors. Co-founded by Jeffrey Powers, its Canvas app allows users to get high-definition scans of their interior spaces using tablets and smartphones.

Like Snapchat, Canvas was spotlighted in Apple’s LiDAR keynote. And like everyone above, the app has a lot to gain from LiDAR. This will work on all levels mentioned — more precision in spatial mapping, user-friendliness, and speed. All of these factors apply to Canvas’ UX.

For example, room scans that took 15 minutes with other depth cameras now take 1 minute with LiDAR (plus an additional minute of processing). Because it’s best experienced visually, you can see Powers’ quick demo at this timestamped portion of the event, and in the image below.

Powers also asserts that Apple’s LiDAR has great Z-axis (depth) resolution but not great X- and Y-axis resolution. But when combined with the iPhone RGB camera, possibilities arise to increase scan resolution in potentially game-changing ways. So lots of software development is still to come.
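To make the idea of pairing coarse depth with a sharper RGB image more concrete, here is a minimal one-dimensional sketch of joint bilateral upsampling, one well-known way to do it. This is an illustrative assumption, not a description of what Occipital or Apple actually do; the function name, parameters, and sample values are all hypothetical.

```python
import math

def joint_bilateral_upsample(depth_lo, guide_hi, scale, sigma_s=1.0, sigma_r=0.1):
    """Upsample a coarse 1-D depth row using a high-res intensity row as a guide.

    depth_lo : coarse depth samples (hypothetical LiDAR row)
    guide_hi : high-res intensities in [0, 1] (hypothetical RGB luminance row)
    scale    : integer ratio between high-res and low-res sampling
    """
    out = []
    for p, g_p in enumerate(guide_hi):
        p_lo = p / scale  # position of this pixel in low-res coordinates
        num = den = 0.0
        for q, d_q in enumerate(depth_lo):
            # Guide intensity at the high-res location of coarse sample q
            g_q = guide_hi[min(q * scale, len(guide_hi) - 1)]
            # Spatial weight: nearby coarse samples count more
            w_s = math.exp(-((p_lo - q) ** 2) / (2 * sigma_s ** 2))
            # Range weight: samples that look alike in the guide count more
            w_r = math.exp(-((g_p - g_q) ** 2) / (2 * sigma_r ** 2))
            num += w_s * w_r * d_q
            den += w_s * w_r
        # Fall back to the nearest coarse sample if all weights underflow
        nearest = depth_lo[min(int(p_lo), len(depth_lo) - 1)]
        out.append(num / den if den > 0 else nearest)
    return out

# A wall at 1 m next to a wall at 3 m; the guide image shows a sharp edge.
depth_lo = [1.0, 1.0, 3.0, 3.0]
guide_hi = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0]
depth_hi = joint_bilateral_upsample(depth_lo, guide_hi, scale=2)
```

In this toy case the upsampled depth stays close to 1 m up to the edge in the guide image and jumps to about 3 m right after it, where plain interpolation would have blurred the boundary.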

Let’s Get Physical

Lastly, LiDAR’s true potential could be in the capabilities it unlocks for developers. It’s a tool in the toolbox that will be combined with other tools and spun in creative ways by the developer community (as Pan asserted earlier). Patched Reality’s Patrick O’Shaughnessey is living proof.

The Babble Rabbit creator has been working with LiDAR on a prototype game that demonstrates the range of experiences that LiDAR could unlock. Known as Epic Marble Run, it lets users set up physical-world start and end points, which represent paths for a virtual marble to travel.

Integrated with Unity’s physics engine, the marble follows the laws of physics, such as accelerating downward at 9.8 m/s². Players can create courses in which the marble bounces down stairs. They can also position everyday objects to channel the marble to its destination, or engineer “trick shots.”
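For a rough sense of what that physics involves: gravity near Earth’s surface is an acceleration of about 9.8 m/s², so a falling marble gains 9.8 m/s of speed every second. The sketch below is a simplified stand-in, not Unity’s actual solver. It drops a marble from rest and bounces it on a flat floor, and the function name, restitution value, and time step are assumptions chosen for illustration.

```python
G = 9.8  # gravitational acceleration near Earth's surface, in m/s^2

def simulate_drop(height_m, restitution=0.6, dt=0.001, max_bounces=3):
    """Drop a marble from rest and return (time_s, impact_speed_m_s) per bounce.

    restitution is the assumed fraction of speed kept after each bounce.
    """
    y, v, t = height_m, 0.0, 0.0
    impacts = []
    while len(impacts) < max_bounces:
        v -= G * dt   # semi-implicit Euler: update velocity first...
        y += v * dt   # ...then position, using the new velocity
        t += dt
        if y <= 0.0 and v < 0.0:      # marble reached the floor moving down
            impacts.append((t, -v))
            y = 0.0
            v = -v * restitution      # bounce back up with reduced speed
    return impacts

impacts = simulate_drop(1.0)  # drop from 1 m
```

From a height of 1 m, the first impact lands at roughly 0.45 seconds and 4.4 m/s, which agrees with the closed-form results t = sqrt(2h/g) and v = sqrt(2gh). Each later bounce hits more slowly because of the assumed restitution.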

One takeaway from all of the above is that LiDAR can be used in tandem with other technologies, machine learning among them, to produce experiences that haven’t been imagined yet. Put another way… things just got a lot more interesting in AR.

See the full event below.