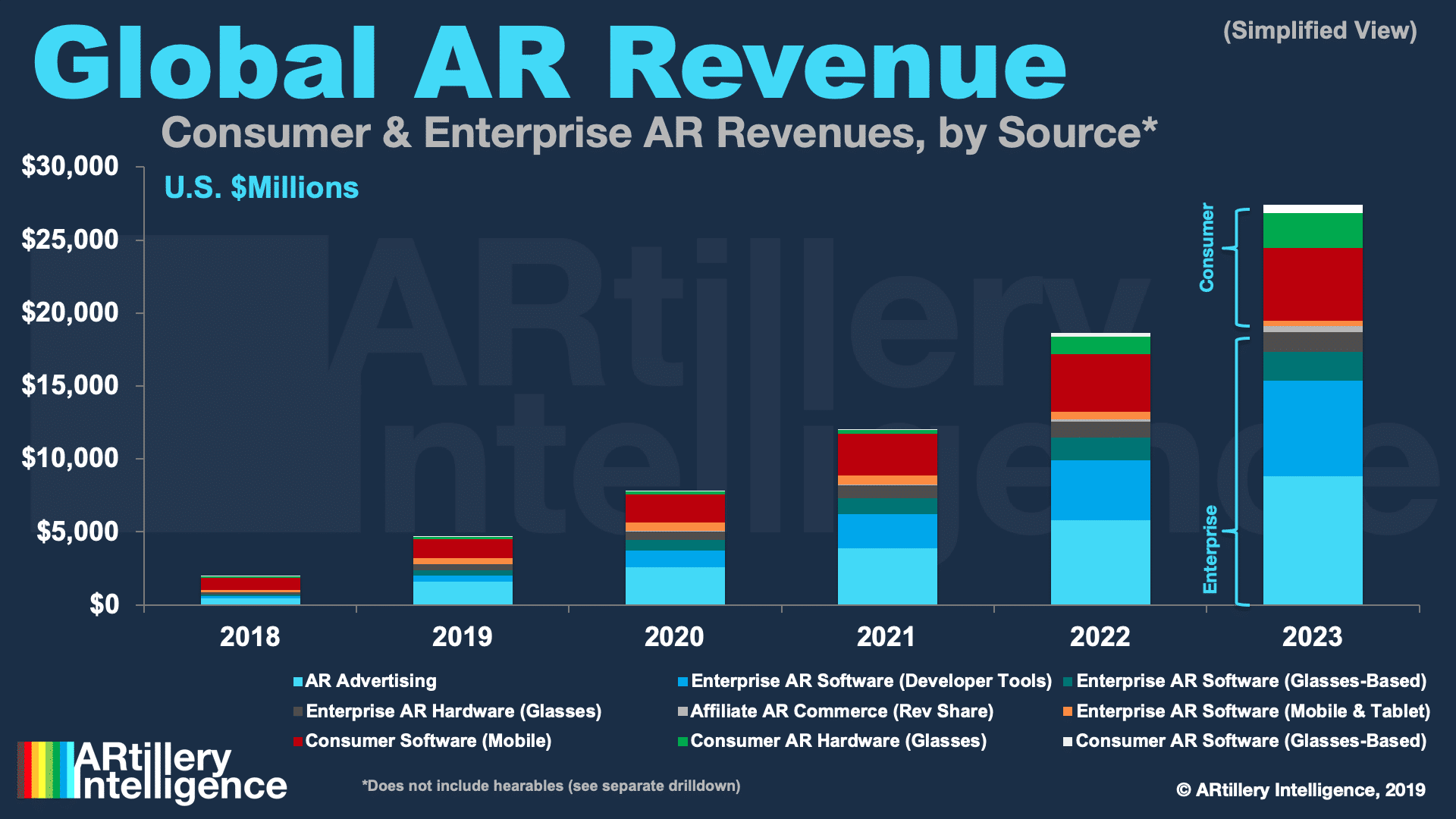

This post is adapted from ARtillery Intelligence’s report, Spatial Computing: 2019 Lessons, 2020 Outlook, and includes some of its data and takeaways. More can be previewed here, and you can subscribe for the full report.

At this stage of spatial computing’s lifecycle, it’s becoming clear that patience is a virtue. After the boom-and-bust cycle of 2016 and 2017, the last two years were more about measured optimism in the face of the sobering realities of industry shakeout and retraction.

As we roll into 2020, that leaves the question: where are we now? Optimism is still present, but AR and VR players continue to be tested as high-flying prospects like ODG, Meta and Daqri dissolve. These events are resetting expectations on the timing and scale of revenue outcomes.

But there are also confidence signals. 2019 was more of a “table-setting” year for our spatial future, and momentum is building. Brand spending on sponsored mobile AR lenses is a bright spot, as are rumors of Apple AR glasses. And Oculus Quest is a beacon of hope on the VR side.

AR’s Scaffolding

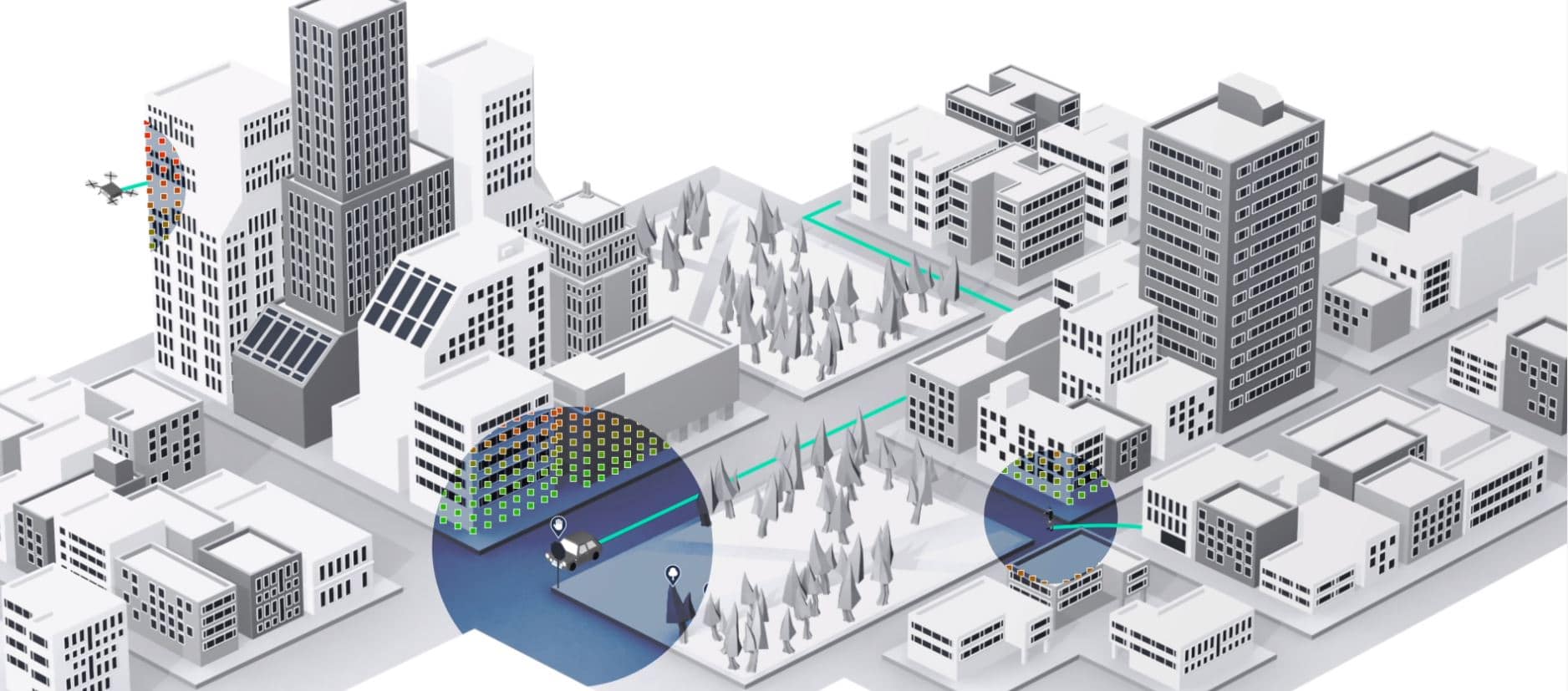

To achieve the vision we all have for persistent AR overlays that show up at the right time, there are technical gaps that still need to be filled. One framework in particular is under construction and will be a key enabler of that vision. We’re of course referring to the AR cloud.

This is the missing piece for the vision we all have for AR that “just works.” As an invisible data layer that coats the physical world, it will empower AR devices to place graphics in the right spots. Also known as the “Mirrorworld,” “Magicverse,” and “Internet of Places,” it’s AR’s linchpin.

But the challenge is building it, given that it must happen at the scale of the inhabitable earth. This has led to approaches like crowdsourcing (6d.ai), visual databases for object recognition (Google and Apple), satellite imagery (Sturfee), 2D image compositions (Scape) and others (YouAR).

In addition to drones and cars, this involves humans scanning the physical world with smartphones to build spatial maps. These maps then become the foundation for data that can feed AR devices on the fly, enabling them to overlay graphics with positional and contextual accuracy.

The crowdsourced approach is interesting in that it scales. Its downside is its reliance on humans to participate in an activity that may not be familiar. Panning your phone around to scan physical spaces is a deliberate behavior that has to be conditioned. It’s not culturally a thing yet.

Training Wheels

6D.ai is tackling this with SDKs that let developers use its spatial mapping in return for sharing back the data their users capture, à la Waze. Apps like Babble Rabbit have found creative, gamified ways to get users to pan their phones around a space.

Ubiquity6 is likewise addressing this challenge. Its display.land lets users 3D-scan their physical spaces to share with friends. This isn’t AR, just a 3D model, but AR comes into the picture with display.land studio, which adds AR elements to those models and to their real-world counterparts.

The point is to introduce a new behavior that piggybacks on users’ conceptual understanding of an old one. In other words, one of today’s most popular mobile activities is capturing photos and videos, then sharing them with a social graph. Display.land taps into that sense of familiarity.

This could advance user acclimation to the relatively unnatural act of scanning physical spaces, thereby accelerating the AR cloud’s construction. This “training wheels” approach, which piggybacks on things users already know, will be a key theme in getting them into AR activities.

Another way AR will get over the adoption hump is through utilities that offer tangible value to consumers, in addition to the social and entertainment use cases that have ruled so far. These include visual search (e.g. Google Lens), navigation (e.g. Live View) and other utilities that develop natively for AR.

Stay tuned for more excerpts and ongoing commentary on the Internet of Places, and check out the full report here.

For deeper XR data and intelligence, join ARtillery PRO and subscribe to the free AR Insider Weekly newsletter.

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.

Header Image Credit: Apple