Google Lens is getting easier to use. As the tool and its machine learning brains continue to develop, it’s being dispatched to more access points. First available on Pixel 2, then Google Photos on iPhones, it’s now on Google’s core iOS app.

So we decided it was time for a more thorough test drive. This isn’t a full-blown product review, but we’ve developed some observations on its UX, utility and strategic implications for Google. They stem from using the tool for a few days and testing it on a variety of physical objects (images below).

Lowering Friction

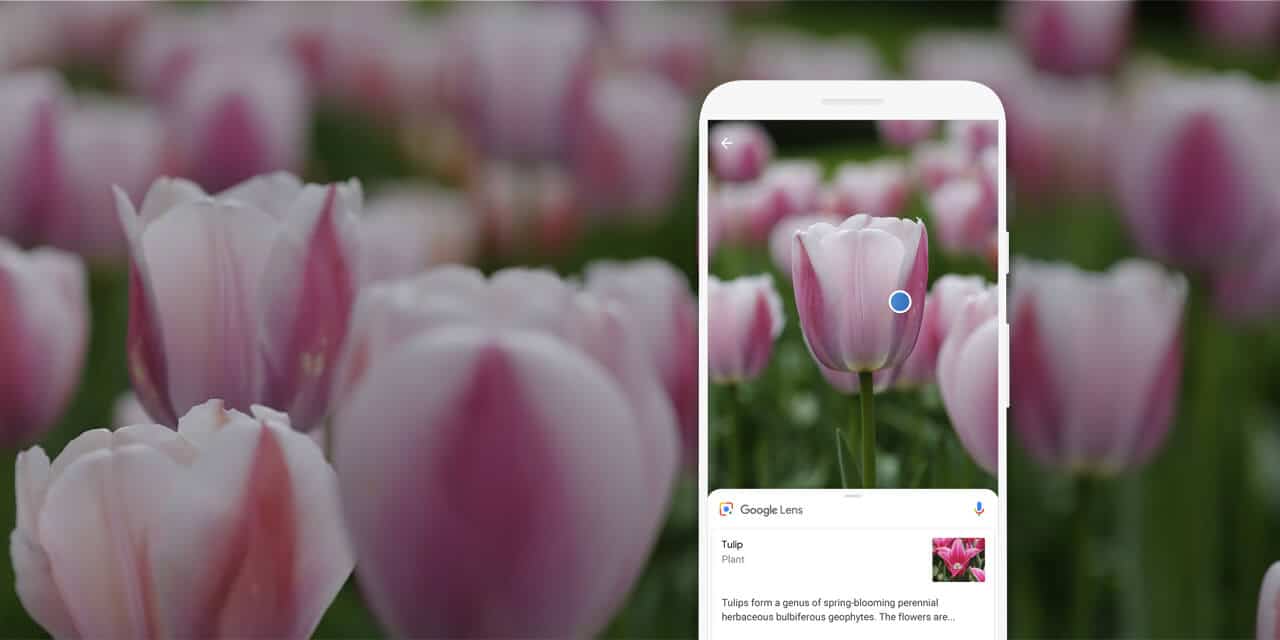

First, what is Google Lens? Sort of a cousin of AR, it identifies items you point your phone at. It isn’t the sexiest AR modality, but its broad utility could end up as AR’s most prevalent “all day” use case. And Google, armed with its knowledge graph, is the right company to build it.

The first thing that’s different about this update is that it’s activated right in the search box of Google’s main iOS app. Unlike the previous Google Photos integration on iOS (which made you take a picture first), this Lens version lets you just tap on objects in the camera’s field of view.

This makes it a lot easier to use and more dynamic: you can walk around with the phone and identify items on the fly. Its machine learning and image recognition go to work pretty quickly, tapping into Google’s vast knowledge graph to identify items as they come into view.

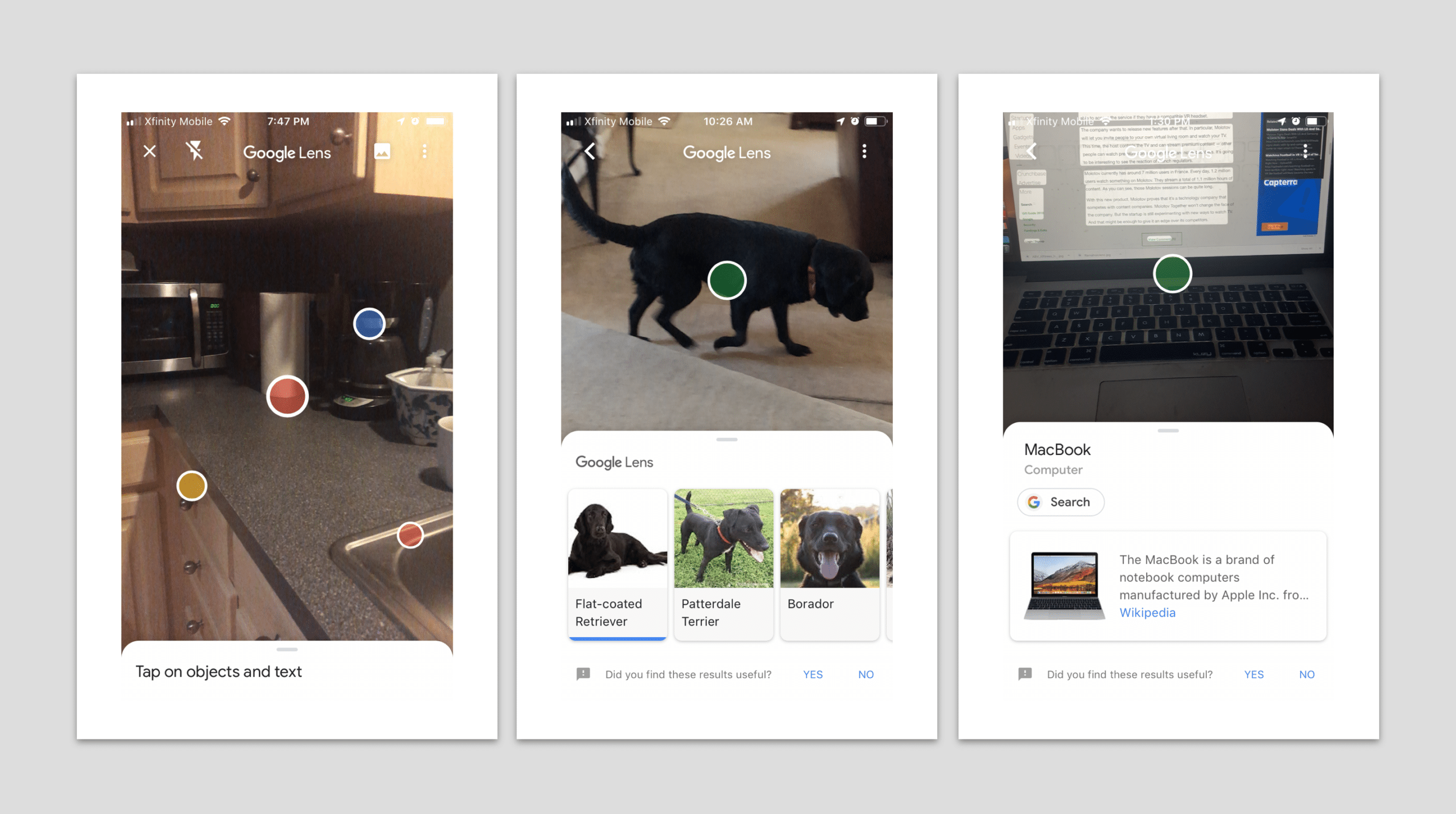

Most of this is hidden until you actively decide what to identify. Object search prompts take the form of Google-colored dots that, when tapped, launch a card from the bottom of the screen. This employs Google’s familiar Material Design-oriented cards, as seen in apps like Assistant.

Organize the World’s Information

We tested Lens on a range of household objects like laptops, packaged goods and dogs, all of which it identified (mostly) accurately. This range is what will make Google shine in visual search compared with, say, Amazon, which will nail products but not general-interest searches.

Google is rightly holding users’ hands by promoting select categories and use cases, like dogs & flowers (fun) and street signs & print menu items (useful). Backed by its vast knowledge graph, these vertical specialties and use cases will develop and condition user behavior over time.

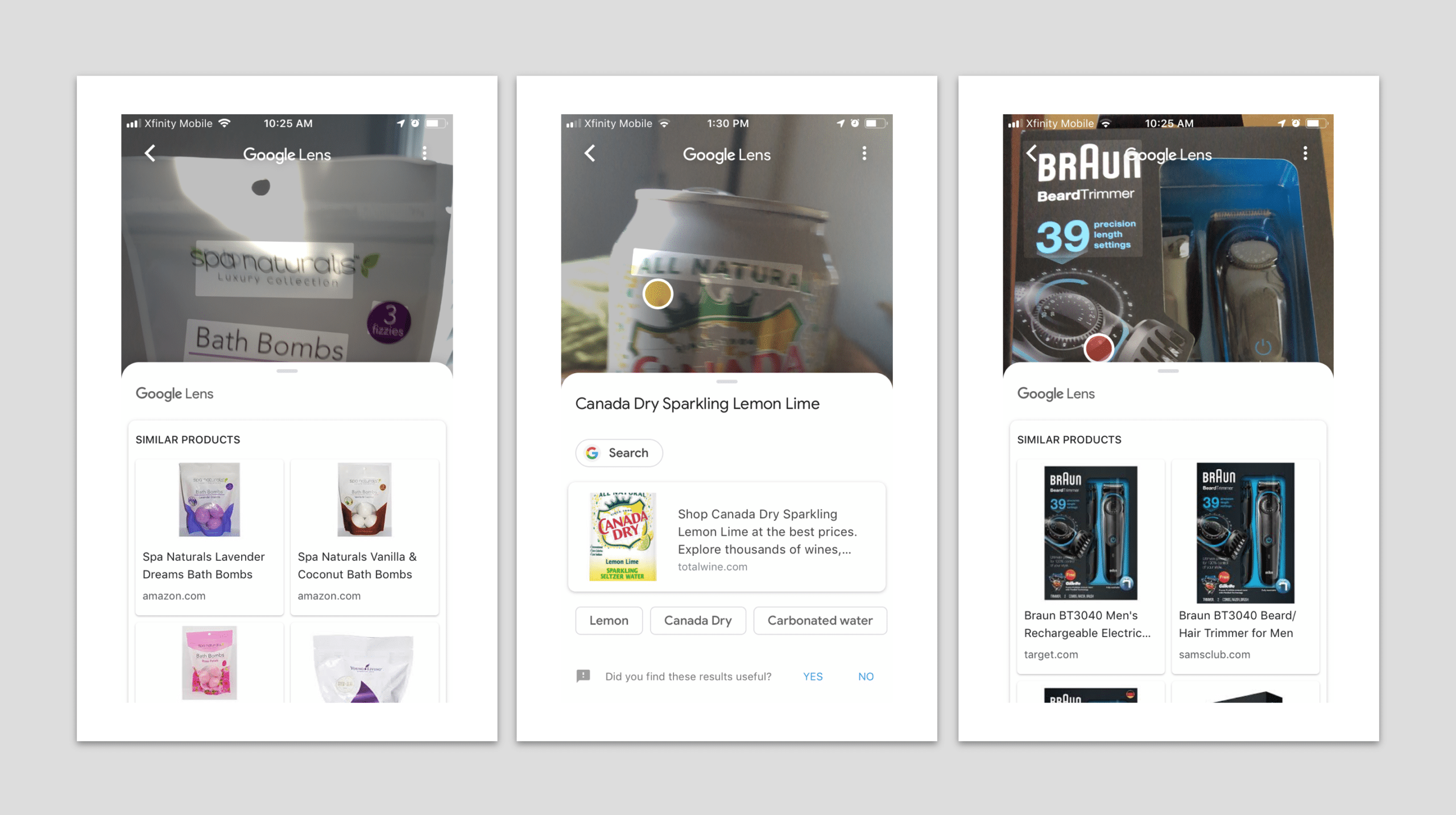

For now, we found it works best in “structured” visual queries. Think: packaged goods where it can pick out a logo, as opposed to generic items like a pillow. Products also represent the most reliable images (professionally shot, clean) in the database from which it matches objects.

Luckily for Google, these are also the types of visual searches that have the most monetization potential. Just like search, Lens will be a sort of free utility for things like general interest queries, but the real business case will be for the smaller share of commercial-intent searches.

Business Case

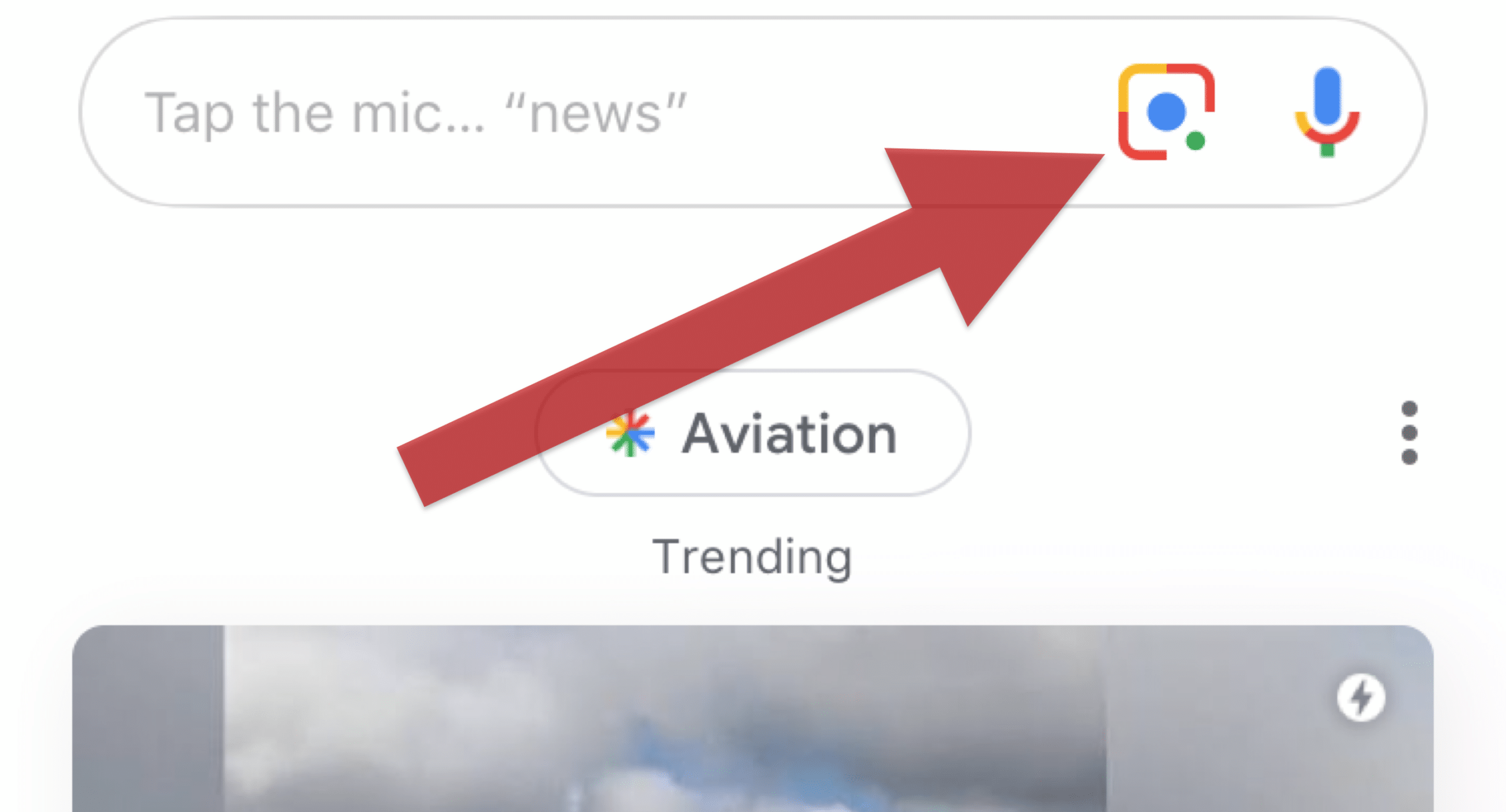

It’s also interesting to note that the Google Lens activation button sits right next to the voice search button in the search bar. This is symbolic of their common goal. Like voice, visual search’s job is to create more search modalities and user touch points (read: more query volume).

As background, Google has had a longstanding goal of boosting query volume and search performance since the smartphone’s introduction. App-heavy mobile usage and declining cost-per-click from mobile searches are negative forces that Google has to counterbalance in other ways.

The other key goal for Lens (along with retail-oriented VPS) is bringing Google to the last mile of the consumer journey. Given the object proximity of visual search, it plants Google closer to the transaction, and thus to the ad attribution data that’s mostly missing in online-to-offline commerce.

As even further background, 92 percent of the $3.7 trillion in U.S. retail commerce is spent in physical stores. Mobile interaction (such as search) increasingly influences that behavior, to the tune of $1 trillion in spending. This is where AR and visual search will live, and Google knows it.

Priorities

Google will continue investing heavily in AR for the above reasons. It’s off to a good start with a training-wheels approach to educate users on Lens, and to plant it in easy access points. Bringing it to the main Google iOS app is a big step in that direction. We’ll see many more in 2019.

Another question we’ve heard is, why is Google making Lens available on iOS? The answer is the same reason it wants Maps and Search on iOS — it’s the best possible coverage to drive its core search business. If the priority was selling hardware — like Apple — it would be a different story.

Panning back, Lens represents mundane utilities that will emerge as AR value drivers. Though gaming and social hold valuable spots, history indicates that killer apps (think: Uber, Waze, messaging) combine frequency, connectivity and utility. Lens is primed to check those boxes.


Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor has it received payment for its production.