This post is adapted from ARtillry’s latest Intelligence Briefing, AR Business Models: The Top of the Food Chain, Part I. It includes some of the report’s data and takeaways. You can preview more here and subscribe for the full report.

One key question when examining tech giants’ motivations for, and influence on, XR is how they will monetize it. Asking that question of Google yields several answers – some explicit and some extrapolated from the moves it’s made. In either case, there are key lessons.

A key theme in our latest report is that Google’s AR initiatives support its core business model. For example, its visual positioning service (VPS) ties to its core mapping and search functions. Visual search similarly aligns with Google’s core mission to answer users’ search “queries.”

In other words, Google sees AR as a way to boost search query volume and quality – its biggest source of revenue. AR is inherently a form of search, but instead of typing or tapping queries the traditional way, the search input is your phone’s camera and search “terms” are physical objects.

“Think of the things that are core to Google like search and maps,” said Google’s Aaron Luber at ARIA. “These are things we’re monetizing today that we see added ways we can use [AR]… All the ways we monetize today will be ways that we think about monetizing with AR in the future.”

As background, Google has had a longstanding goal, dating to the smartphone’s introduction, of boosting query volume and search performance. App-heavy mobile usage and declining cost-per-click from mobile searches are negative forces that Google must counterbalance in other ways.

That includes things like voice search. Now, as we enter a visually immersive computing era, Google wants to position the increasingly popular smartphone camera as a search input too. Visual search and VPS are more ways to ensure its positioning in the next era of monetizable search.

“A lot of the future of search is going to be about pictures instead of keywords,” Pinterest CEO Ben Silbermann said recently. His claim underscores another key factor that indicates visual search’s potential appeal: millennials. The buying-empowered generation has a high affinity for the camera.

Visual Search: The Internet of Places

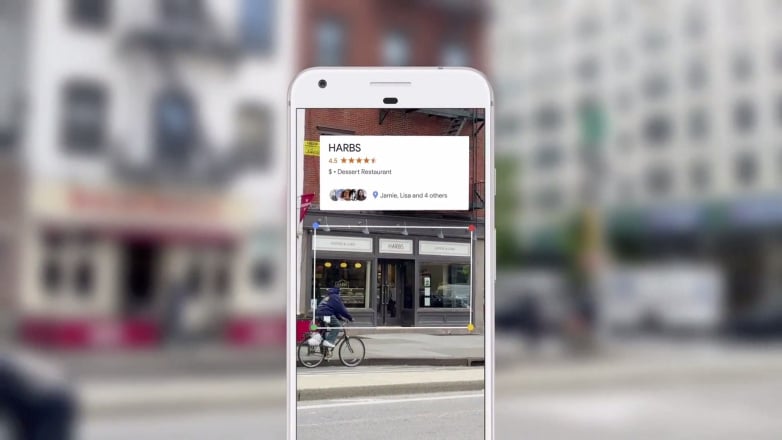

Represented best by Google Lens, visual search lets users point their phones at items to retrieve information, or potentially transact. Its use cases and product categories will materialize over time (think: electronics and apparel) but could end up being almost as broad as search itself.

For example, point your phone at a store or restaurant to get business details overlaid graphically. Point your phone at a pair of shoes you see on the street to find out prices, reviews and purchase info. All of these use cases will apply Google’s vast image database and knowledge graph.

One feature of Google Lens is “Style Match,” which searches for items similar to apparel users point their cameras at. That has clear shopping and commerce tie-ins, and the next step will be to integrate visual searches into transactional functionality through Google Shopping.

Google Lens will also use computer vision and machine learning to ingest and process text. For example, it will scan restaurant menus to search for the ingredients in a dish. It will do the same for street signs and other use cases that develop in logical (and eventually monetizable) ways.

This can be considered an extension of Google’s mission statement to “organize the world’s information.” But instead of a search index and typed queries, AR delivers information about an item on that item. Instead of a web index, this works towards a sort of “internet of places” (IOP).

“The camera is not just answering questions, but putting the answers right where the questions are,” said Google’s Aparna Chennapragada at Google I/O. Like search, these activities have the magic combination of frequency and utility, which could make them the first scalable AR use case.

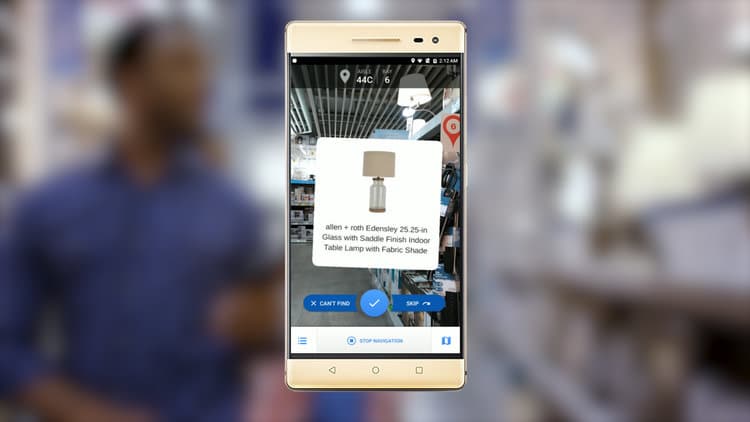

VPS: The Last Mile

Google’s visual positioning service (VPS) is another manifestation of IOP. It helps shoppers navigate and obtain product information in retail stores. Using point-cloud based 3D mapping data within retail partners’ locations, it will help consumers find the aisles and products they want.

“GPS can get you to the door, and then VPS can get you to the exact item that you’re looking for,” said Google’s VR/AR lead Clay Bavor at last year’s VPS unveiling at Google I/O. “Imagine in the future your phone could just take you to that exact screwdriver and point it out to you on the shelf.”

Like visual search, this will help Google serve monetizable information to consumers. But it also ties nicely into Google’s existing search ad business with “last-mile” attribution data to report ROI to its advertisers. It knows the best way to do that is to track dollars where they’re mostly spent.

As background, 92 percent of the $3.7 trillion in U.S. retail commerce is spent offline in physical stores. Mobile interaction (such as search) increasingly influences that purchase behavior, to the tune of $1 trillion in spending. This is where AR could take the biggest bite, and Google knows it.

Furthermore, commercial “intent” – a key factor for Google – is high when you’re in a physical store. And attributing ROI and conversions – another key factor – is a longstanding holy grail of online and mobile advertising. These add to the list of motivating factors for Google’s AR vision.

We believe this “local AR” opportunity will eventually be applied to many forms of monetizable search, such as retail and local discovery. But first it’s taking form in utilitarian products that will attract and grow a user base. That includes, most notably, AR walking navigation built on Google’s VPS.

“Just like when we’re in an unfamiliar place, [we] look for visual landmarks — storefronts, building facades, etc.” said Chennapragada at Google I/O. “It’s the same idea: VPS uses visual features in the environment [to] help figure out exactly where you are and where you need to go.”

To continue reading, see ARtillry’s latest Intelligence Briefing, AR Business Models: The Top of the Food Chain. Also stay tuned for the second half of this analysis on Google, which we’ll post next week.

For deeper XR data and intelligence, join ARtillry PRO and subscribe to the free ARtillry Weekly newsletter.

Disclosure: ARtillry has no financial stake in the companies mentioned in this post, nor has it received payment for its production. Disclosure and ethics policy can be seen here.