Let’s do a simple vision test. Close one eye and, with the other, look at your finger held close in front of you. You will see it sharply because you are focusing on it. What you may not have noticed so far is that everything around your finger is blurred. If you now look past your finger at a distant object, that object will come into focus and your finger will become blurred. This simple test shows how our three-dimensional monocular perception works.

To this day, almost all AR and VR devices ignore this natural focusing function of our eyes. They use flat displays to project virtual images, which means we see virtual images sharply only when our eyes focus at the distance where the display's image appears. Objects placed at any other distance (see the bird below) will then be out of focus, just like in real life. This is a massive problem for augmented reality: most AR devices today present flat images at a fixed distance of 1.4 – 2 meters, so you are physically unable to focus on both your hands and virtual objects at the same time, … unless your hands are 2 meters (6 feet) long, that is. This problem is called focal rivalry.

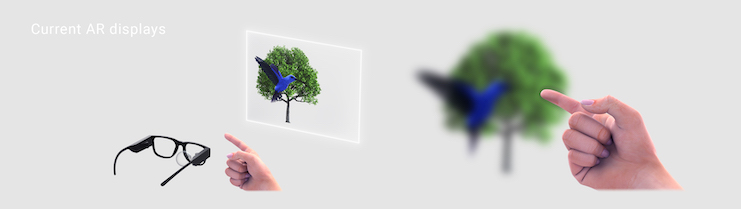
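Focal rivalry is easiest to quantify in diopters (D), the reciprocal of distance in meters, which measures how strongly the eye must focus. The sketch below compares the focus demand of a hand with that of a fixed virtual image plane. The 0.4 m hand distance and the 0.3 D tolerance are illustrative assumptions, not measured values; the eye's real depth of focus varies with pupil size and from person to person.

```python
"""Focal rivalry as diopter arithmetic (illustrative sketch, not a vision model)."""

# ASSUMPTION for illustration: defocus below ~0.3 D goes unnoticed.
# Real depth of focus depends on pupil size, lighting and the individual.
ASSUMED_DEPTH_OF_FOCUS_D = 0.3


def diopters(distance_m: float) -> float:
    """Focus demand, in diopters, for an object at distance_m meters."""
    return 1.0 / distance_m


def focal_mismatch(hand_m: float, display_m: float) -> float:
    """Defocus (D) of the virtual image while the eye is focused on the hand."""
    return abs(diopters(hand_m) - diopters(display_m))


def both_sharp(hand_m: float, display_m: float,
               tolerance_d: float = ASSUMED_DEPTH_OF_FOCUS_D) -> bool:
    """True if the hand and the virtual image can both look sharp at once."""
    return focal_mismatch(hand_m, display_m) <= tolerance_d


if __name__ == "__main__":
    hand_m = 0.4  # hypothetical distance of a hand in front of the eye
    for display_m in (1.4, 2.0):  # fixed image distances quoted in the text
        print(f"image plane at {display_m} m: "
              f"mismatch {focal_mismatch(hand_m, display_m):.2f} D, "
              f"both sharp: {both_sharp(hand_m, display_m)}")
```

Under these assumptions the mismatch is roughly 1.8 D for a 1.4 m image plane and 2 D for a 2 m one, several times the assumed tolerance; only a hand at about the image distance brings the mismatch close to zero.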

To create the perception of depth, current AR and VR devices project a slightly different image to each eye, a so-called stereoscopic image. However, this leads to another problem. When you look at something with two eyes, your eyes converge on the object you are looking at, converging more the closer the object is. At the same time, your eyes focus, or “accommodate”, at the distance you are looking at. In real life, vergence and accommodation always work in harmony: the more your eyes converge, the closer they accommodate.

With virtual objects shown on flat displays, however, these two distances are very often out of sync. While vergence responds naturally to the stereoscopic image, accommodation stays fixed at the distance of the display. The brain receives contradictory information from vergence and accommodation. This problem is called the vergence-accommodation conflict.
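A short sketch makes the mismatch concrete. It computes the vergence angle the eyes adopt for a virtual object straight ahead at a given depth, and the conflict, in diopters, between that depth and a fixed image plane where accommodation stays. The 63 mm interpupillary distance and the 2 m image plane are assumptions chosen for illustration.

```python
import math

# ASSUMPTIONS (illustrative, not from any specific headset):
IPD_M = 0.063               # interpupillary distance in meters
DISPLAY_DISTANCE_M = 2.0    # fixed distance of the display's virtual image


def vergence_angle_deg(distance_m: float, ipd_m: float = IPD_M) -> float:
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m (symmetric convergence)."""
    return math.degrees(2.0 * math.atan(ipd_m / (2.0 * distance_m)))


def conflict_diopters(virtual_m: float,
                      display_m: float = DISPLAY_DISTANCE_M) -> float:
    """Vergence is driven to virtual_m while accommodation stays at display_m;
    the mismatch is expressed in diopters (1/m)."""
    return abs(1.0 / virtual_m - 1.0 / display_m)


if __name__ == "__main__":
    for d in (0.3, 0.5, 1.0, 2.0, 10.0):
        print(f"object at {d:>4} m: vergence {vergence_angle_deg(d):5.2f} deg, "
              f"focus stays at {DISPLAY_DISTANCE_M} m, "
              f"conflict {conflict_diopters(d):.2f} D")
```

For an object rendered at 2 m the conflict is zero; at 0.5 m the eyes converge by roughly 7 degrees while focus remains at 2 m, a conflict of 1.5 D.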

These two problems are among the key reasons why most people experience headaches, dizziness, nausea, and eye strain after even brief use of AR or VR headsets (each person experiences it differently and, as with everything in life, some people feel it more and others less).

These are fundamental problems that will always haunt headsets based on flat-display technology. They are determined by physics, optics, and human biology and cannot be solved simply by adding pixels, smarter software, or marketing dollars. They require a fundamental change in how an image is generated in AR or VR.

To the Rescue

Luckily, companies are working on solutions to these challenges. Let’s look at the main ones:

1. Retinal laser beam scanning

The image is painted by shining a laser directly onto your retina. The process is very similar to old CRT TVs (those with big bulging glass screens), where a beam sweeps across the screen, painting individual pixels line by line as it goes. Here, the laser sweeps directly across your retina, painting pixels as it goes. The properties of the laser beam make it possible to generate an image that is practically always in focus, meaning the observer sees a sharp virtual image regardless of where her or his eyes are focused. In a binocular configuration, such a system can potentially solve both problems. Another advantage is that it can be made very small and very bright, making it suitable for outdoor AR. However, the price to pay is a small eyebox (the area within which the observer’s eye must be to see the image), which can drastically reduce the user’s immersion. Steering the eyebox to follow the eye’s movement could be a solution, but no commercially viable implementation has been seen yet. Another problem is that projecting through a small eyebox inevitably casts shadows of any dust in the optics, of eyelashes, and of floaters in the eye onto the retina, which always makes the image look ugly. Several companies, such as North (acquired by Google) and reportedly Eyeway Vision, have used retinal laser scanning with an expanded eyebox, which helps clean up the image and gives the eye more freedom to move. However, the expanded eyebox removes the always-in-focus effect and brings the accommodation problem back.
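The always-in-focus property, and why an expanded eyebox loses it, follows from simple geometric optics. Under the small-angle approximation, a point seen through an aperture of diameter A (in meters) with a focus error of ΔD diopters is smeared over roughly A·ΔD radians. A thin scanned beam acts like a tiny aperture, while an expanded eyebox lets light fill more of the pupil. The beam and pupil sizes below are assumptions, and the sketch ignores diffraction, which in practice limits how thin a useful beam can be.

```python
import math

ARCMIN_PER_RAD = math.degrees(1.0) * 60.0


def defocus_blur_arcmin(aperture_m: float, defocus_d: float) -> float:
    """Approximate angular blur (arcminutes) of a point seen through an
    aperture of diameter aperture_m with a focus error of defocus_d diopters.
    Geometric optics only, small-angle approximation, diffraction ignored."""
    return aperture_m * abs(defocus_d) * ARCMIN_PER_RAD


if __name__ == "__main__":
    focus_error_d = 2.0  # e.g. eye focused at 0.5 m, image formed at infinity
    # ASSUMED sizes: a thin scanned beam vs. light filling a 4 mm pupil.
    for label, aperture_m in (("0.5 mm laser beam", 0.5e-3),
                              ("4 mm pupil (expanded eyebox)", 4e-3)):
        blur = defocus_blur_arcmin(aperture_m, focus_error_d)
        print(f"{label}: about {blur:.1f} arcmin of blur")
```

With these numbers, the thin beam blurs a point by about 3.4 arcmin and the full pupil by about 27.5 arcmin: eight times more for the same focus error, because the blur scales linearly with the aperture.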

2. Varifocal

This approach also works with a conventional flat display, but optically moves it to the distance at which the eyes are converging, thus keeping the vergence and accommodation stimuli in harmony. The optical distance can be changed by physically moving components or by using a variable-focus lens. It requires reliable eye tracking, and the parts of the image that are not supposed to be in focus must be blurred digitally. The main advantage of this approach is that it leverages many existing, well-proven VR technologies, so it is easy to integrate into existing VR product pipelines. The disadvantage is that the whole system needs to work perfectly and very fast, and it needs to be calibrated for each user; otherwise, it makes everything worse. Meta Reality Labs worked on such an approach for a long time in its Half Dome project, and it is rumored to be used in Project Cambria.
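The control loop described above can be sketched in a few functions: triangulate where the two gaze rays cross, convert that depth into a focus setting, and report how far each rendered depth lies from it so the renderer can blur accordingly. The gaze-angle convention, the 63 mm IPD, and the 0 to 4 D range of the focus-tunable lens are all hypothetical; a real system would also filter tracker noise, compensate for latency, and apply per-user calibration.

```python
import math
from dataclasses import dataclass

# ASSUMPTIONS (hypothetical values, not from any product):
IPD_M = 0.063                      # interpupillary distance
LENS_MIN_D, LENS_MAX_D = 0.0, 4.0  # range of the focus-tunable lens


@dataclass
class FocusState:
    fixation_depth_m: float  # estimated depth where the gaze rays cross
    lens_power_d: float      # focus setting sent to the lens, in diopters


def fixation_depth(left_in_rad: float, right_in_rad: float,
                   ipd_m: float = IPD_M) -> float:
    """Triangulate the depth at which two gaze rays intersect.

    Angles are horizontal rotations toward the nose, measured from straight
    ahead. Eyes sit at x = -ipd/2 and x = +ipd/2; the rays meet where
    z * (tan(left) + tan(right)) = ipd.
    """
    spread = math.tan(left_in_rad) + math.tan(right_in_rad)
    if spread <= 0.0:
        return math.inf  # parallel or diverging gaze: looking far away
    return ipd_m / spread


def update_focus(left_in_rad: float, right_in_rad: float) -> FocusState:
    """One varifocal step: gaze angles -> fixation depth -> clamped lens power."""
    depth_m = fixation_depth(left_in_rad, right_in_rad)
    demand_d = 1.0 / depth_m  # 1/inf == 0.0, i.e. focus at infinity
    lens_d = min(max(demand_d, LENS_MIN_D), LENS_MAX_D)
    return FocusState(depth_m, lens_d)


def render_defocus_d(pixel_depth_m: float, state: FocusState) -> float:
    """Diopter distance between a pixel's depth and the current focus;
    the renderer would apply more synthetic blur as this grows."""
    return abs(1.0 / pixel_depth_m - state.lens_power_d)


if __name__ == "__main__":
    inward = math.atan(0.063 / 2 / 0.5)  # both eyes fixating a point 0.5 m ahead
    state = update_focus(inward, inward)
    print(state)
    for depth in (0.5, 1.0, 3.0):
        print(f"content at {depth} m -> {render_defocus_d(depth, state):.2f} D from focus")
```

Clamping matters because a real lens has a limited range: gaze converging at 0.1 m asks for 10 D, but this hypothetical lens stops at 4 D, so very close content would still show some conflict.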

3. Multiple depth planes

By stacking multiple display planes at different distances, one behind the other, each part of the image can be shown on the plane closest to its real depth. Such a system does not need eye tracking, and out-of-focus content is blurred naturally. However, stacking multiple planes can be a very hard engineering problem, possibly degrading the quality of the real-world view in AR, and virtual images can visibly break between planes. Also, there might not be enough planes to cover all distances, leading to some vergence-accommodation conflict anyway. Such a solution was used in Magic Leap 1 (two depth planes, but only one used at a time, chosen by eye tracking), supposedly by Avegant, and is commercially used by Lightspace Labs (four depth planes).
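Here is a sketch of how content might be assigned to a handful of fixed planes. It compares depths in diopters, a common convention because focus error is roughly uniform in diopter space, and shows both nearest-plane assignment and linear blending between the two bracketing planes, one known way to soften visible breaks between planes. The four plane distances are hypothetical and are not those of any product mentioned above.

```python
from __future__ import annotations

# HYPOTHETICAL plane distances in meters (not taken from any product).
PLANES_M = (0.35, 0.6, 1.2, 4.0)


def nearest_plane(depth_m: float, planes_m=PLANES_M) -> tuple[float, float]:
    """Return (chosen plane distance, residual focus error in diopters)."""
    target_d = 1.0 / depth_m
    best = min(planes_m, key=lambda p: abs(1.0 / p - target_d))
    return best, abs(1.0 / best - target_d)


def blend_weights(depth_m: float, planes_m=PLANES_M) -> dict[float, float]:
    """Split a pixel between the two planes that bracket its depth,
    linearly in diopters. Depths outside the covered range go to the
    nearest end plane."""
    target_d = 1.0 / depth_m
    # (diopters, distance) pairs sorted from far (small D) to near (large D)
    planes = sorted((1.0 / p, p) for p in planes_m)
    if target_d <= planes[0][0]:
        return {planes[0][1]: 1.0}
    if target_d >= planes[-1][0]:
        return {planes[-1][1]: 1.0}
    for (far_d, far_m), (near_d, near_m) in zip(planes, planes[1:]):
        if far_d <= target_d <= near_d:
            w_near = (target_d - far_d) / (near_d - far_d)
            return {far_m: 1.0 - w_near, near_m: w_near}
    raise ValueError("depth not bracketed")  # unreachable for valid input


if __name__ == "__main__":
    for depth in (0.9, 2.0, 50.0):
        plane, err = nearest_plane(depth)
        print(f"{depth} m -> plane {plane} m (residual {err:.2f} D), "
              f"blend {blend_weights(depth)}")
```

The residual error from `nearest_plane` is the leftover vergence-accommodation conflict the text mentions: with only four planes, content at 2 m is still 0.25 D away from the closest plane in this example.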

4. Light fields

Unlike the previous approaches, a light field aims to genuinely recreate light rays as if they were coming from the real world. It accomplishes this by projecting slightly different perspectives of the same scene into your eye through an array of slightly displaced viewpoints. The resulting three-dimensional perception is nearly identical to that of looking at the real world. Just as the real world does not need eye tracking to work, neither does a light field. Although the technology has not yet been commercially proven, CREAL is the only company that has publicly demonstrated it at high resolution in a headset-form-factor prototype, and it is currently working on shrinking the size even further. Some consider light fields very computationally demanding, but CREAL has demonstrated a solution with computational complexity comparable to that of normal VR. Peta Ray is rumored to use similar technology. The downside of light fields is that their resolution is limited by the so-called diffraction limit. This limit is, however, still higher than the resolution of any current AR headset.
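The diffraction limit can be estimated with a back-of-the-envelope calculation. Assuming each viewpoint enters the eye through a small circular sub-aperture of diameter a, the Rayleigh criterion gives a smallest resolvable angle of about 1.22 λ/a; packing more viewpoints into the pupil shrinks a and coarsens that angle. The 4 mm pupil, the 550 nm wavelength, and the idea of tiling the pupil evenly are simplifying assumptions, not a description of CREAL's or anyone else's optics.

```python
import math

WAVELENGTH_M = 550e-9  # ASSUMED: green light
PUPIL_M = 4e-3         # ASSUMED: pupil diameter


def rayleigh_angle_deg(aperture_m: float,
                       wavelength_m: float = WAVELENGTH_M) -> float:
    """Smallest resolvable angle (degrees) for a circular aperture."""
    return math.degrees(1.22 * wavelength_m / aperture_m)


def resolvable_points_per_degree(aperture_m: float,
                                 wavelength_m: float = WAVELENGTH_M) -> float:
    """Rough upper bound on distinct points per degree of visual angle."""
    return 1.0 / rayleigh_angle_deg(aperture_m, wavelength_m)


if __name__ == "__main__":
    for views_across_pupil in (1, 2, 3, 4):
        sub_aperture_m = PUPIL_M / views_across_pupil  # evenly tiled pupil
        ppd = resolvable_points_per_degree(sub_aperture_m)
        print(f"{views_across_pupil} view(s) across the pupil: "
              f"sub-aperture {sub_aperture_m * 1e3:.2f} mm, ~{ppd:.0f} points/deg")
```

Under these assumptions the bound drops from roughly 104 points per degree with a single 4 mm aperture to about 52, 35, and 26 as two, three, and four viewpoints share the pupil: resolution falls in proportion to the sub-aperture size.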

5. Holography

Like light fields, holography aims to recreate light waves with the same properties as if they were coming from the real world. It exploits the diffraction and interference of light. Again, holography projects virtual images that are optically entirely natural to the human eye. It can also reach high brightness in a reasonably small form factor and, in theory, does not have the same resolution limit as light fields. Its main disadvantages are currently very heavy computational requirements, speckle (graininess) in the image, lower resolution, and above all a fundamentally low frame rate and the high sensitivity of its key component. It also has not been commercially proven yet. VividQ is using this approach and has significantly reduced the computational effort. Samsung, Stanford University, Meta Reality Labs, and Nvidia, among others, have also presented lab research on this technology.
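To see where the heavy computation comes from, consider the most direct way to compute a hologram: treat the virtual scene as a cloud of points, let each point emit a spherical wave, and sum all of them at every pixel of a phase modulator. The sketch below does exactly that on a tiny grid and counts the work. The 8 µm pixel pitch, 520 nm wavelength, and phase-only encoding are assumptions, sign conventions vary between setups, and this brute-force baseline is for illustration only, not any company's method.

```python
import cmath
import math

# ASSUMPTIONS for illustration: phase-only modulator, 8 um pixel pitch,
# 520 nm light. Brute-force point-source method; practical systems are faster.
WAVELENGTH_M = 520e-9
PITCH_M = 8e-6
K = 2.0 * math.pi / WAVELENGTH_M  # wavenumber (rad per meter)


def point_cloud_hologram(points, n=64, pitch_m=PITCH_M):
    """points: iterable of (x, y, z, amplitude), meters, z = distance in front
    of the modulator. Returns (n x n phases in radians, evaluation count)."""
    points = list(points)
    half = (n - 1) / 2.0
    phases, evaluations = [], 0
    for row in range(n):
        y = (row - half) * pitch_m
        phase_row = []
        for col in range(n):
            x = (col - half) * pitch_m
            field = 0j
            for px, py, pz, amp in points:
                # spherical wave from the point, evaluated at this pixel
                r = math.sqrt((x - px) ** 2 + (y - py) ** 2 + pz ** 2)
                field += amp * cmath.exp(1j * K * r) / r
                evaluations += 1
            phase_row.append(cmath.phase(field))  # keep phase, drop amplitude
        phases.append(phase_row)
    return phases, evaluations


if __name__ == "__main__":
    scene = [(0.0, 0.0, 0.10, 1.0), (1e-4, 0.0, 0.20, 0.5)]
    _, count = point_cloud_hologram(scene)
    print(f"64 x 64 pixels, {len(scene)} points: {count} evaluations")
    # Same method scaled to a hypothetical 1920 x 1080 modulator and 100,000 points:
    print(f"full frame: {1920 * 1080 * 100_000:.2e} evaluations per color")
```

The work grows as pixels × points, so the hypothetical full-frame case needs about 2 × 10^11 complex-exponential evaluations per color for every frame, which is why reducing this cost is central to making holographic displays practical.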

The Race is On

These five technologies aim to solve or mitigate focal rivalry and the vergence-accommodation conflict. The race for the best one is still open, and different approaches may well be adopted for different use cases or at different times. However, regardless of the winner, this will be an extremely fascinating race to watch. We are witnessing the emergence of fundamentally new display technologies, something we have not seen for decades.

Tomas Sluka is the CEO and co-founder of CREAL.
