Are we creating augmented reality experiences or merely VR experiences with reality as a stage prop?

There is (seemingly) an obvious and binary distinction between Augmented Reality (AR) and Virtual Reality (VR). From a rudimentary perspective, you can see the real world in an AR experience but not in VR. Applications and hardware devices are either one or the other. If you are working in the augmented/mixed/virtual reality space, how confident are you in defining augmented reality or mixed reality (MR)? No doubt you can comfortably wrap your arms around the idea of VR, but AR/MR is more challenging. Under close scrutiny, how well does your construct of AR and MR hold up before it gets fuzzy or diffuse?

For users, the differences have little, if any, meaning. Users want to be entertained or given tools that provide value (e.g., increase efficiency or reduce cognitive load), and few are concerned with semantic qualifiers. For developers and designers, these classifications are important because they dictate technology stacks, design principles, and interaction models. Product stakeholders and strategists should care, too, because the classifications affect an application’s target market and user base in addition to hardware and platform decisions.

To be provocative, let us question our understanding of augmented reality and ask if existing AR-labeled experiences are truly augmented reality or something else. Having a framework helps structure discourse such as this; fortunately, one already exists.

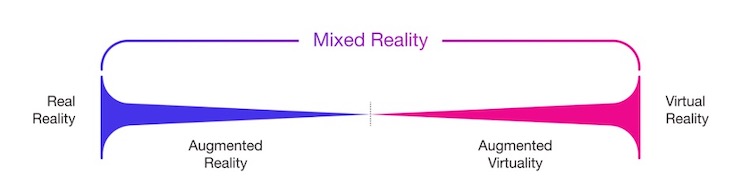

The Reality-Virtuality Continuum

In 1994, Paul Milgram et al. published a paper describing a taxonomy for categorizing Augmented Reality displays. This paper introduced the world to the term “Mixed Reality” and the Reality-Virtuality (RV) Continuum. The latter states there are two foundational realities, our natural reality and virtual reality, and a vast blended space between the two realities – mixed reality.

As the term is used today, Mixed Reality skews the original intent by positioning mixed reality as an extension of augmented reality, or as a higher-order augmented reality based on greater integration of virtual elements. I prefer Milgram’s original definition of MR as the space between reality and virtuality, with AR as a sub-segment of MR.

Augmented reality is an experience grounded in the physical world and interfused with digital content. The digital elements must continuously integrate into the physical environment rather than simply be slapped on like stickers. Digital content must react to the dynamic physical environment and express emergent behavior in response to it.

Augmented virtuality (AV) (Milgram’s term) is the opposite. It is an experience rooted in a virtual environment and supplemented with elements of the physical world. An experience like the game Beat Saber is emblematic of augmented virtuality at the absolute edge of VR. When players move their hands, sensors in the controllers provide data from the physical world to the virtual environment. The natural physiologic and spatial movements connect the physical and virtual environments, unlike the primitive electrical impulses of button presses.

A Bias To Virtual Reality

Most mixed reality experiences are actually closer to VR than the reality endpoint of the RV continuum. Accordingly, they are augmented virtuality (AV) and not augmented reality (AR). In AV, a virtual environment is the foundation of the experience, with the physical environment augmenting the virtual.

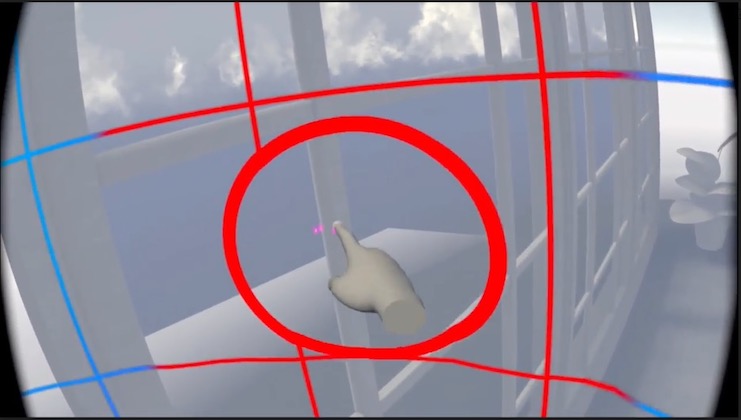

Mixed reality requires some understanding of the physical environment. How much of that data is used, and how it is used, determines which subcategory of MR the experience falls into. The more an MR experience integrates with the physical environment, the more thorough and persistent an understanding of that environment the system needs. This understanding comes from spatial computing, which is computationally expensive and requires sophisticated hardware. Further, spatial computing algorithms and AI models are still evolving and are not yet capable of supporting an ideal, genuine augmented reality experience. In short, we do not yet have the technology to support the full MR spectrum, and consequently, we are only scratching the surface of understanding how to design and work in MR.

Conversely, the knowledge and skills to design and develop for VR have existed for many years. Virtual reality applications are not significantly different from games played on screens. The transition from screen-based, game-like experiences to VR is natural and familiar to creators, whereas MR is an entirely new and different computing pattern. Growth across VR and MR will not be even, and within the MR spectrum, augmented virtuality will lead over augmented reality, at least in the near future.

There is a natural and common phenomenon of stretching from a place of familiarity and comfort in one (old or established) medium to another that is new or emerging. The Web was created to digitally present documents. Web pages mimicked physical paper with layout structures borrowed from newspapers and magazines. While static “pages” still exist, the web is also for dynamic interactive experiences and feature-rich applications. Mixed reality will go through a similar growth pattern.

A Sample Review

Take, for example, the wildly successful Pokémon Go game, which is often promoted as the prototypical AR expression. In the application’s “AR mode,” the player sees a Pokémon character layered on top of a live camera feed. The virtual character has no connection to the physical environment. Its behavior is unaffected by anything seen from the camera’s perspective. Switching out of AR mode simply changes the background to a virtual image instead of the camera feed. The camera feed augments the virtual experience more than the virtual character augments reality.

The passthrough feature in Quest 2 and Quest Pro is often used in the same way Pokémon Go uses the smartphone camera. The Guardian feature, built into the device’s OS, utilizes external cameras to warn users when they are about to hit a wall or an artificial boundary. However, it does nothing to blend the physical environment into the virtual experience. The virtual is the base of the experience. The Guardian feature is more about limiting the disorienting effects of VR (i.e., occluding the real world and disrupting one’s vestibular senses) than augmenting a physical environment.

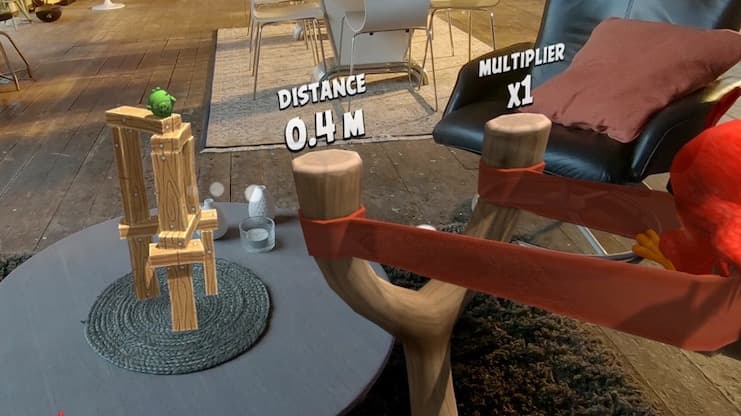

Another example is the Angry Birds app for Magic Leap One. The app begins by scanning the user’s physical environment and asking them to place the game board on a planar surface like the floor. Once placed, the virtual game has no further integration or interaction with the physical environment. You cannot ricochet a bird off a wall, and a pig cannot go flying into your fireplace. This experience also leans into the VR side of the MR spectrum.

Towards an Augmented Reality

We have only created suggestions of augmented reality experiences. Pointing out the gap between augmented reality and augmented virtuality is about elevating the conversation of experience design and not the sport of pedantically parsing terminology.

Creators must make intentional decisions about where their applications lie in the mixed reality spectrum. Making effective decisions that shape the user experience is the underpinning of Design. The quality of the user experience suffers when too much design is based on instinct, technical accomplishments, or raw emotional reactions rather than on a considered understanding of the medium. We gain this wisdom through design research and by establishing philosophies.

We are in an exciting time for mixed reality. No established rules or conventions firmly exist. We are in the process of feeling them out. Certainly, we will make bad choices, but we will also make fundamental ones that will define the future of human-computer interactions in mixed reality.

Jarrett Webb is Technology Director at Argodesign.
