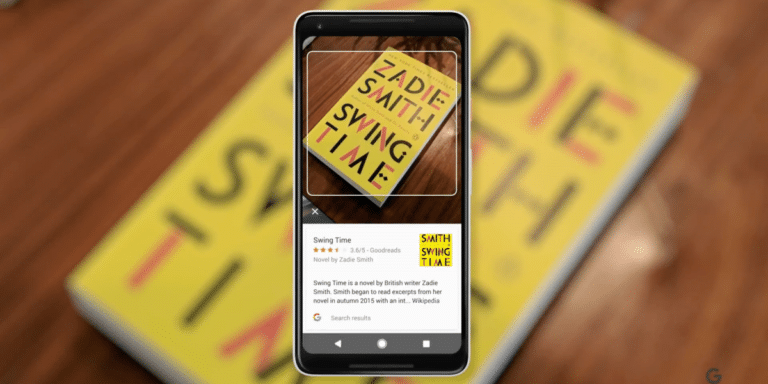

Buried deep in Google’s recent Search On 2020 event was a notable nugget. The company’s visual search tool, Google Lens, now recognizes 15 billion products. This is 15x growth in two years, given the December ’18 announcement that it recognized one billion products.

Beyond sheer numbers, this growth validates Lens’ broadening capability. Launched initially with promoted use cases around identifying pets and flowers, the eventual goal — in Google fashion — is to be a “knowledge layer” for monetizable searches like shoppable products.

In this sense, it’s telling that Google phrased this announcement as recognizing 15 billion products, rather than objects. This joins evidence we’ve tracked that Google Lens’ endgame is to find monetizable use cases for high-intent commercial searches through the camera.

This goal aligns with several factors. Millennials and Gen Z have a high affinity for the camera as a way to interface with the world, while brand advertisers are likewise drawn to immersive AR marketing. This makes visual search one way Google can future-proof its core business.

Who Makes that Dress?

The above factors raise the question of what types of products shine in visual search. Early signs (and logic) point to items with visual complexity and unclear branding. This includes style items (who makes that dress?) and in-aisle retail queries. Think of it as “showrooming” on steroids.

The common thread is shopping. Pinterest (also intent on visual search) reports that 85 percent of consumers want visual info; 55 percent say visual search is instrumental in developing their style; 49 percent develop brand relationships; and 61 percent say it elevates in-store shopping.

Another fitting use case is local discovery. Visual search could be a better mousetrap for the ritual of finding out more about a new restaurant — or booking a reservation — by pointing your phone at it. The smartphone era has taught us that search intent is high when the subject is in proximity.

These are all things that Google is primed for, given its knowledge graph assembled from 20 years as the world’s search engine. This engenders a training set for image matching, including products (Google Shopping), general interest (Google Images), and storefronts (Street View).

All of the above accelerates in the COVID Era when visual queries can replace the act of touching things — now and in retail’s forthcoming “touchless era.” Visual search will meanwhile benefit as consumers get acclimated to visual interfaces in AR-assisted eCommerce.

History Repeats

All of the above could take a while to materialize — at least the ad monetization components. Google is in the process of testing visual search, optimizing the UX, and devising interfaces for sponsored content insertion. What will be the results page (SERP) of visual search?

One challenge, just like with voice search, is that there isn’t a “10 blue links” SERP for ad inventory. So monetization will defy the traditional search model. This could be through enhanced results (think: “buy” buttons) when an advertiser’s product is discovered through Google Lens.

Until then, Google can use visual search behavior to optimize web search results. In other words, you won’t see sponsored results in a visual search flow, but you’ll see visual-search-informed search results when back on web search, assuming you’re signed into the same Google account.

To further grease the adoption wheels, Google continues to develop visual search “training wheels.” This includes making Google Lens front & center in well-traveled places and incubating it in search. This could reduce some of the friction and “activation energy” for visual search.

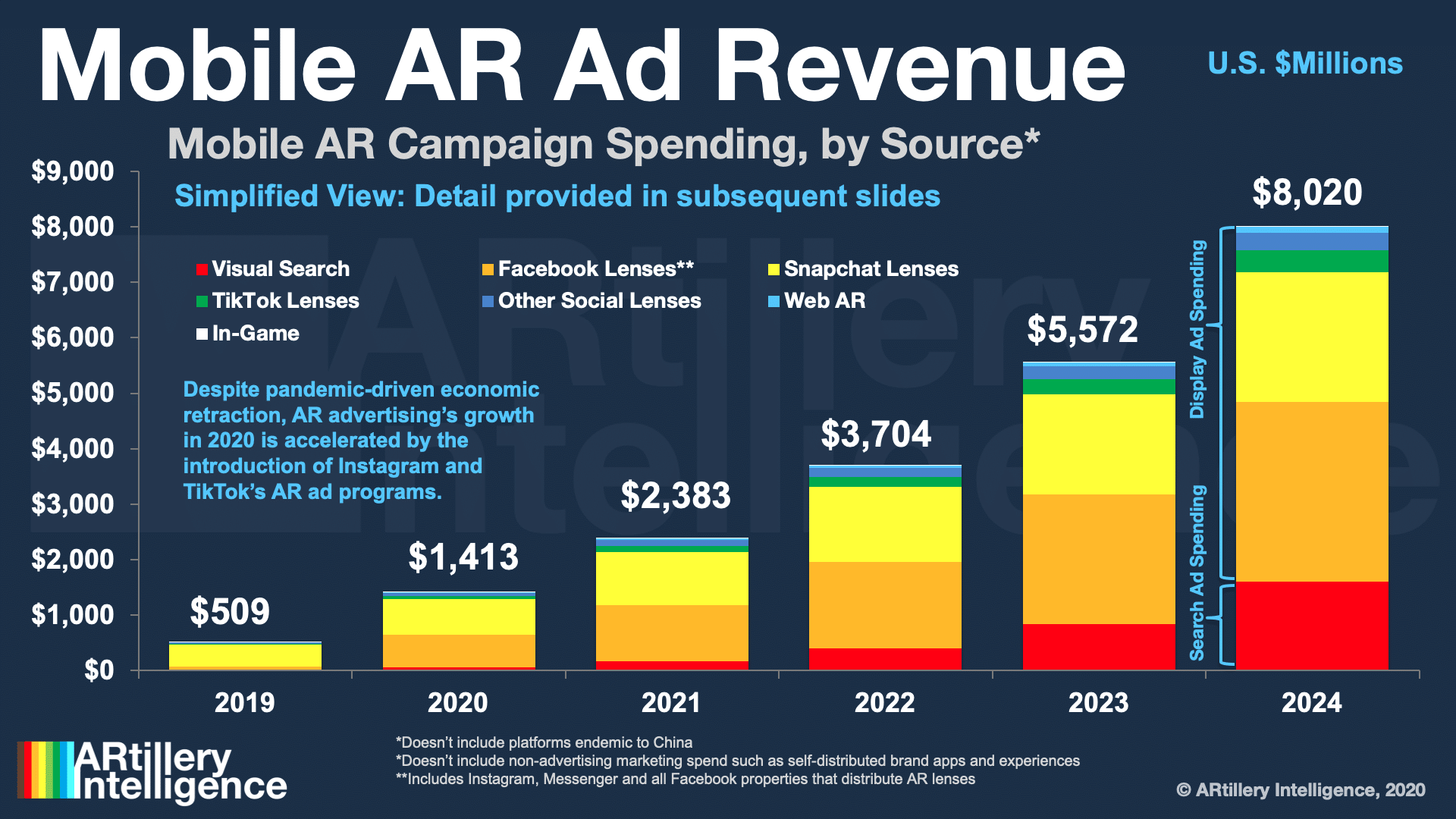

As these factors materialize, visual search is projected to reach $1.6 billion by 2024, according to ARtillery Intelligence (see above). Just as on the web, display ads (lenses in this case) emerged first. But history tells us that search’s high-intent use case, now more visual, could make it a gold mine.