One of our top picks for potential AR killer apps is visual search. Its utility and frequency mirror those of search and hit several marks for potential killer-app status. It’s also inherently monetizable, and Google is highly motivated to make it happen.

To accelerate the consumer adoption that’s necessary for that vision, Google is letting visual search “piggyback” on core search as a sort of incubation play. This will help acclimate mainstream consumers to visual search and other forms of AR, such as product visualization.

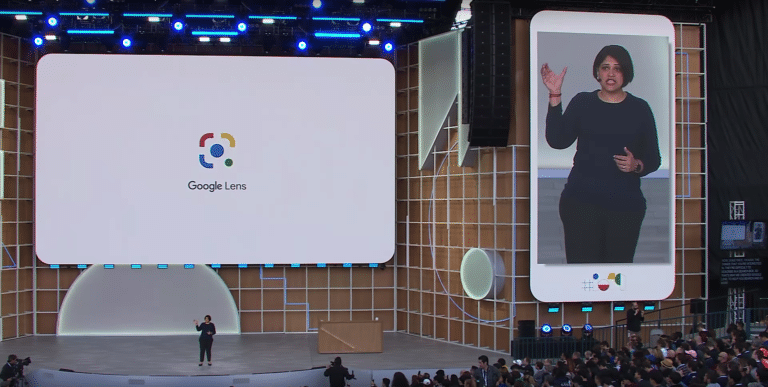

This was a key component of yesterday’s Google I/O keynote. There were lots of AR and visual search announcements, but one common theme is their growing infusion in the traditional search product. This couples AR’s unestablished value with something a lot more established.

“We’re excited to bring the camera to search, adding a new dimension to your search results,” said Google’s Aparna Chennapragada on stage. “With computer vision and AR, the camera in our hands is turning into a powerful visual tool to help you understand the world around you.”

AR at Hand

Google has been on this track for a while. Our December Google Lens review was based largely on the fact that it’s now an option available right in the core Google search app on iOS. The integrations announced yesterday make AR even more accessible in more places.

For example, search results will increasingly feature Sceneform objects that let you dive into 3D models that are related to search results. This will be particularly useful in physically-complex items/topics where AR visualization adds lots of value, such as human anatomy (see above).

“Say you’re studying human anatomy. Now when you search for something like muscle flexion, you can see a 3D model right from the search results,” said Chennapragada. “It’s one thing to read about flexion, but seeing it in action in front of you while studying it is very handy.”

Taking it a step further, the same 3D models can be overlaid on real-world scenes. This is where AR really comes in. It may be more of a novelty in some cases, but there will be product-based search results that can benefit from the real-world visualization (again, in monetizable ways).

“Say you’re shopping for a new pair of shoes,” said Chennapragada. “With New Balance, you can look at shoes up close from different angles directly from search. That way, you get a much better sense for what the grip looks like on the sole, or how they match the rest of your clothes.”

Search What You See

Going beyond AR product visualization, visual search is likewise integrated deeper into search. This means easier ways to activate Google Lens to identify real-world objects, and new features like real-time language translations, calculating restaurant tips, and contextualizing menus.

Speaking of food, Google Lens will bring recipes to life in Bon Appetit magazine with animated cooking instructions overlaid on the page. Other use cases will require similar content partnerships and Google has already announced New Balance, Samsung, Target, Volvo, Wayfair, and others.

These new use cases will join other Google AR utilities like VPS walking directions, which are now available on all Pixel phones. But the underlying thread is that most of Google’s AR and visual search efforts will launch from, and map to, its established search products.

We already knew that in light of Google’s motivations for AR to support core products. But now it’s also apparent that it will use those very products to accelerate AR adoption. Google knows that the closer AR is planted in the search flow, the more it will be given the chance to shine.

“It all begins with our mission to organize the world’s information and make it universally accessible and useful,” said Google CEO Sundar Pichai on visual search. “Today, our mission feels as relevant as ever. But the way we approach it is constantly evolving. We’re moving from a company that helps you find answers to a company that helps you get things done.”

We’ll be back with much more analysis of Google’s latest announcements, I/O session coverage and ongoing narratives for visual search’s impact on the spatial computing spectrum.


Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.

Header Image Credit: Google