Metaverse in the Real World: The Domain of AR

It seems like everyone has their own idea of what the metaverse is or will be. For some it is a fully immersive virtual world; for others, a blending of the digital (or virtual) and the real world. As often happens, we project our own hopeful future, from our own context of need, onto this new thing. No single article could begin to resolve the differences in opinion, and I won’t try. Being an AR guy, I want to explore how AR plays in a blended metaverse. Let’s look at some opportunities and some issues with AR at world scale.

For this discussion, I want to distinguish “virtual” from “digital”. Both are important. I can overlay virtual content on the scene via AR. I can put a virtual whale in the real sky. However, I can also scan the scene and recognize the pose of a real object. I can know the user’s real-world position. Then, via AR, I can put a map pin at the object’s location and provide a hyperlink to real (digital) data about this real thing (read: IoT). Is there anything virtual about that? Maybe something.

Virtual vs. Digital: Both are Opportunities

As I walk around in the blended metaverse, I want to see virtual content and real-world digital content. I want to be entertained, informed, and have a responsive, productive interaction with my environment.

I find the label Web 3.0 to be extremely valid for the metaverse. It is clearly the delivery of the next-gen web/cloud capabilities. Google and others have served us well in Web 2.0 as a pathway to all the information we want. Social media has allowed us to use the information superhighway to instantly visit and communicate and share with our friends and family and find new friends. Businesses have found unparalleled productivity, new and expanding markets and new ways to reach and delight customers.

AR in a real-world blended metaverse puts all these same capabilities on a new, faster path.

I Want to Get Digital, or Show Me the Way

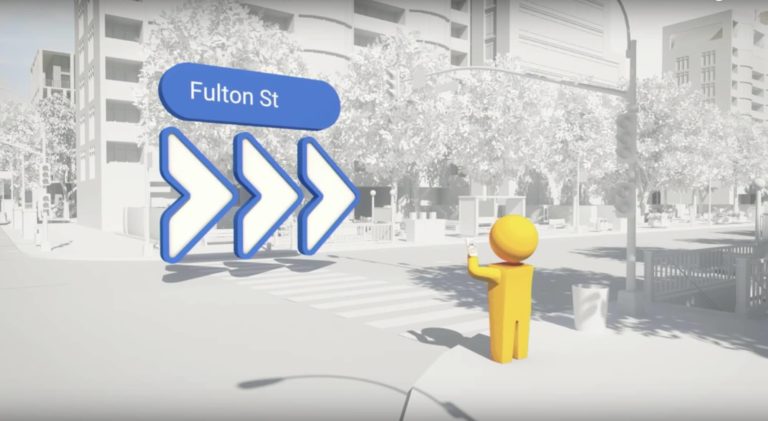

Virtual content is well-played already: virtual galleries, the aforementioned whales in the sky. Let’s drill down on a digital thread. AR wayfinding, in my car or as I walk around (outside or inside), is an obvious opportunity and a thrilling prospect.

We see one early example of this in Google Maps Live View. Using a mobile device, it shows a map view laid down at the bottom of the screen. Signpost-like AR glyphs, pointing directions, are added to the camera view: a kind of hybrid map-tile and AR view. They haven’t yet employed navigation traces (lines on the ground), probably because there are issues. When you see traces in AR, they are usually not occluded (hidden) by the local structures. When the trace goes around a building corner you can still see it, although it should be hidden by the building. It kind of ruins the effect. It takes a bit of work/magic to solve this issue.

Everybody doing world-scale AR has this same issue, and there are many techniques and technologies being employed and developed to deal with it.

- A brute force method is to just fade out the traces at every sharp turn. This usually works OK.

- Leverage an existing 3D facet model of the scene, if you have one. ARKit, ARCore, Unity, etc. all support real occlusion.

- Do some real-time scanning of the scene to learn the topology and occlude on the fly. This is possible but may be slow or technically expensive.
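
To make the brute force option concrete, here is a minimal Python sketch of fading a trace out ahead of its first sharp turn. Everything in it is my own assumption for illustration: the function names, the 60-degree threshold, the 5-metre fade length, and the idea of working on a flat (x, y) path in metres. It is not how Google or anyone else does it.

```python
import math

def turn_angle_deg(a, b, c):
    """Angle in degrees between segment a->b and segment b->c (0 = straight on)."""
    h1 = math.atan2(b[1] - a[1], b[0] - a[0])
    h2 = math.atan2(c[1] - b[1], c[0] - b[0])
    d = abs(h2 - h1) % (2 * math.pi)
    return math.degrees(min(d, 2 * math.pi - d))

def fade_alphas(path, sharp_turn_deg=60.0, fade_length=5.0):
    """Return one opacity (0..1) per vertex of `path`, a list of (x, y) in metres.

    The trace is opaque until `fade_length` metres before the first sharp
    turn, fades linearly to 0 at the turn, and stays hidden after it.
    `fade_length` is assumed to be positive.
    """
    # Cumulative distance travelled along the path at each vertex.
    dist = [0.0]
    for p, q in zip(path, path[1:]):
        dist.append(dist[-1] + math.dist(p, q))

    # Distance along the path at which the first sharp turn occurs, if any.
    turn_at = None
    for i in range(1, len(path) - 1):
        if turn_angle_deg(path[i - 1], path[i], path[i + 1]) >= sharp_turn_deg:
            turn_at = dist[i]
            break
    if turn_at is None:
        return [1.0] * len(path)

    # Linear ramp: 1 far from the turn, 0 at the turn and beyond.
    return [max(0.0, min(1.0, (turn_at - d) / fade_length)) for d in dist]

# Walk north 20 m, then turn east around a corner.
path = [(0.0, 0.0), (0.0, 17.5), (0.0, 20.0), (10.0, 20.0)]
print(fade_alphas(path))  # [1.0, 0.5, 0.0, 0.0]
```

The renderer would then use each value as the trace's opacity at that vertex, so the line simply disappears before it would have to pass behind the building.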

In addition to Google, Apple is also in the AR wayfinding space. They have a partner program called Apple Indoor Maps. Joining the program gives you access to a technical toolkit to develop indoor maps. It seems most useful for shopping malls, grocery stores, airports, warehouses, factories, etc. Apple’s Maps app itself does offer indoor maps at several airports (30+?). I assume that it utilizes this toolkit (but don’t quote me).

The Apple Indoor Maps partner program seems positioned for service providers who are developing maps for clients. It has some cool components. You have some layout tools to draft up the hallways and doorways and to identify locations (store X, etc.). I am unsure how much other functionality it provides versus what you need to build yourself. However, it does look like they haven’t addressed the occlusion problem. All the demos show traces that either don’t need to round a corner or use an arrow pointing around the corner.

It looks to me like Apple acquired an indoor mapping app with the layout tools, etc. and packaged it up as this offering. I can’t find the history on this, but the feel of the indoor mapping tools is distinctly non-Applish.

As is typical for Apple (and of course it’s their prerogative), the program is very locked down. As a “partner” you need to provide the exact location(s) where you will deploy the technology, and prove you have ownership rights for the location.

Lots of Other Work Going On

As I said already, world-scale AR visual integration is an issue for everyone doing AR. There is a lot of activity, public and not, focused on solving this issue. Solving it involves understanding the 3D topology of the scene. This not only supports occlusion but can also allow you to derive the scene pose, which can be used to more precisely position the user and AR content.
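
As a rough illustration of why scene topology gives you occlusion, here is a small Python sketch that decides which points of a trace are hidden from the camera. It is heavily simplified and entirely my own assumption: the scene is reduced to a plan view, buildings are just wall segments, and the helper names are hypothetical. Real engines work with depth or full 3D meshes rather than this 2D line-of-sight test.

```python
def _orient(p, q, r):
    """Signed area test: > 0 if p, q, r turn counter-clockwise, < 0 if clockwise."""
    return (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])

def segments_cross(p1, p2, q1, q2):
    """True if segment p1-p2 properly crosses segment q1-q2.

    Segments that only touch at an endpoint or overlap collinearly are
    treated as not crossing, which keeps the sketch short.
    """
    d1 = _orient(q1, q2, p1)
    d2 = _orient(q1, q2, p2)
    d3 = _orient(p1, p2, q1)
    d4 = _orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0

def visible_points(camera, trace, walls):
    """For each trace point, True if no wall blocks the sight line from camera."""
    return [
        not any(segments_cross(camera, point, a, b) for a, b in walls)
        for point in trace
    ]

# One square building footprint, 10 m on a side, described by its four walls.
corners = [(5, 5), (15, 5), (15, 15), (5, 15)]
walls = list(zip(corners, corners[1:] + corners[:1]))

# The trace runs north past the building, then turns east behind it.
trace = [(0, 10), (0, 20), (10, 20)]
print(visible_points((0, 0), trace, walls))  # [True, True, False]
```

The last point sits behind the building as seen from the camera, so a renderer would skip (or fade) that part of the trace.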

1. Niantic’s Lightship ARDK Visual Positioning System

Niantic just announced its Lightship Augmented Reality Developer Kit (ARDK). A near-future component of the ARDK will be their Visual Positioning System, VPS. Using a mobile device camera, a real-world location can be scanned and meshed. This applies technology obtained from Niantic’s Scaniverse acquisition (see video below). They will then store that in a cloud repository, along with all other users’ submissions. They are proposing to create a crowdsourced Niantic 3D World Map. This is sort of a huge endeavor that looks a bit like tilting at windmills, but what Niantic CEO John Hanke previously did with Google Street View leaves me thinking maybe they can pull this off.

2. Augmented Pixels and Augmented City

These are two smaller organizations that each have technologies and visions very similar to the VPS 3D world map. They both have unique engagement models.

3. Google Street View

Surely all of that collected imagery is available to create exterior facet models of urban areas and more.

4. Smart Cities and BIM (Building Information Modeling)

Many of the various smart city initiatives include a 3D model of the city as a primary or secondary focus. BIM, the modern building design/construction methodology, is based on 3D modeling and an intelligent “digital twin” concept.

5. Do It Yourself

There is a wealth of available libraries, services and domain experts that can allow an organization to build its own solution. One particularly brilliant library, which was pointed out to me recently, is CGAL, the Computational Geometry Algorithms Library. The CGAL 5.3.1 – Polygonal Surface Reconstruction method performs a sort of fuzzy reconstruction to create simplified surfaces and cubic models (of buildings, for instance), smartly ignoring smaller features to find big, flat, planar surfaces. These surfaces are just fine for nominal occlusion needs.
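
To give a feel for the "find the big flat surface, ignore the small stuff" idea, here is a toy Python sketch that pulls the dominant plane out of a point cloud with a basic RANSAC loop. To be clear, this is not CGAL's API or its actual method; it is a swapped-in, simplified technique, and the function names, tolerance, iteration count, and sample data are all my own assumptions.

```python
import random

def plane_from_points(p, q, r):
    """Plane through three 3D points as (unit_normal, d), or None if collinear.

    A point x lies on the plane when dot(unit_normal, x) + d == 0.
    """
    u = [q[i] - p[i] for i in range(3)]
    v = [r[i] - p[i] for i in range(3)]
    n = [
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    ]
    length = sum(c * c for c in n) ** 0.5
    if length < 1e-9:
        return None
    n = [c / length for c in n]
    d = -sum(n[i] * p[i] for i in range(3))
    return n, d

def dominant_plane(points, tolerance=0.05, iterations=200, seed=0):
    """Return the largest set of points found within `tolerance` of one plane."""
    rng = random.Random(seed)  # fixed seed so repeated runs agree
    best_inliers = []
    for _ in range(iterations):
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue
        n, d = plane
        inliers = [
            pt for pt in points
            if abs(sum(n[i] * pt[i] for i in range(3)) + d) <= tolerance
        ]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    return best_inliers

# A flat wall at x = 0 sampled on a 5 x 5 grid, plus a few points from a
# small protruding feature (say, a sign) that should be ignored.
wall = [(0.0, float(y), float(z)) for y in range(5) for z in range(5)]
sign = [(0.5, 1.0, 1.0), (0.6, 2.0, 2.0), (0.4, 3.0, 1.0)]
inliers = dominant_plane(wall + sign)
print(len(inliers), "of", len(wall + sign), "points lie on the dominant plane")
```

A plane recovered this way is the sort of simplified surface you could hand to an occlusion test; a real reconstruction pipeline does a lot more work to turn many such planes into a closed building model.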

In Conclusion

AR wayfinding is just one of the many opportunities in the world-scale AR space. It blows my mind how many more are also opening in this brave new, metaversal world.

I wish I had another 1,000 words to try to finish even this first-principles discussion of AR in the real world: the opportunity and the work required. I know I kind of went into a deep (geek) dive at the end there, but this forum serves a wide audience.

Yes, there are many more topics to explore regarding AR in the real-world and the blended metaverse. For now I’ll just leave that to your imagination.

William Wallace is Founder & CEO of AR Mavericks.
