AR comes in many flavors. This is true of any technology in early stages as it twists around and takes shape. The most prevalent format so far is social lenses, as they enhance and adorn sharable media. Line-of-sight guidance in industrial settings is also proving valuable.

But a less-discussed AR modality is visual search. Led by Google Lens and Snap Scan, it lets users point their smartphone cameras (or future glasses) at real-world objects to identify them. It contextualizes them with informational overlays… or “captions for the real world.”

This flips the script for AR in that it identifies unknown items rather than displaying known ones. That broadens the range of potential use cases, transcending pre-ordained experiences that have relatively narrow utility. Visual search has the extent of the physical world as its canvas.

This is the topic of a recent report from our research arm, ARtillery Intelligence. Entitled Visual Search: AR’s Killer App?, it dives deep into the what, why, and who of visual search. And it’s the latest in our weekly excerpt series, with highlights below on visual search’s drivers & dynamics.

Search What You See

Google calls visual search “search what you see.” It takes the on-demand utility of web search and puts a visual spin on it: Snap rather than tap. In that way, it will inherit some of the value of web search, while also finding unique and native points of value that build from there.

For example, use cases showing early promise include shopping, education, and local discovery. Common attributes include broad appeal and high frequency. These factors give visual search a large addressable market in both quantity of users and volume of usage.

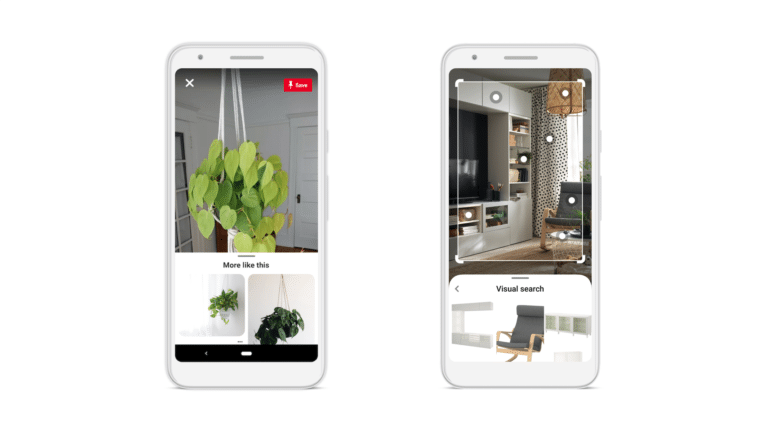

In shopping contexts, visual search is already proving its value. Pinterest reports that 85 percent of consumers want visual info; 55 percent say visual search is instrumental in developing their style; 49 percent develop brand relationships; and 61 percent say it elevates in-store shopping.

These figures were generated from Pinterest Lens, which is the company’s visual search product for style inspiration. Meanwhile, Snap is likewise intent on visual shopping through its visual search play, Snap Scan. It specifically calls this visual-shopping use case “outfit inspiration.”

Visual search could also be a better way to find out about a new restaurant, or book a reservation, by pointing your phone at it. This could be a more user-friendly way to discover new local places, replacing the traditional act of typing or tapping text into Google Maps.

Knowledge Graph

Speaking of Google, it’s primed to do all the above, given the knowledge graph it has assembled over the past few decades. This provides a training set for AI image recognition, including products (Google Shopping), general interest (Google Images), and storefronts (Street View).

Further, Google is highly motivated to develop visual search. Along with voice search, it helps the company boost query volume – which correlates to revenue – and to future-proof its core business. That motivation and investment will accelerate the development of visual search.

For example, to accelerate adoption, Google continues to develop visual search “training wheels.” This includes putting Google Lens front & center by incubating it in web search. These actions could reduce friction in launching visual search and give it greater exposure.

But Google isn’t alone in visual search. Its chief competition comes from two players noted above. The first is Pinterest Lens, Pinterest’s tool that lets you identify real-world items and pin them. The second is Snap Scan, which lets Snapchat users identify items for social fodder.

The differences among these players trace back to their core products and company missions. For example, Google will work towards “all the world’s information,” while others zero in on food & style (Pinterest), and fun & fashion (Snap). There will be market opportunities for each.

We’ll pause there and circle back in the next installment with more visual search analysis and examples.