Visual search is a flavor of AR that could represent a breakout use case. It positions the camera as a search input – applying machine learning and computer vision magic – to identify items you point your phone at. 400 million people use it according to ARtillery Intelligence.

Seen in products like Google Lens, visual search is all about annotating the world. Pointing your phone rather than typing a search term can be more intuitive in some cases, such as identifying style items encountered in the real world. It especially resonates with camera-forward Gen Z.

Beyond Google – a logical competitor – Snap is intent on visual search given Snap Scan, which draws on the company’s AR chops. Again, visual search is a form of AR, but it sort of flips the script by expanding from selfie lenses to more utilitarian and commerce-oriented use cases.

It also literally flips things around as it shifts from the front-facing camera (selfie fodder) to the rear-facing camera. This lets Snap augment the broader canvas of the physical world – whether that be through Local Lenses or informational overlays about products served in Snap Scan.

Prime Real Estate

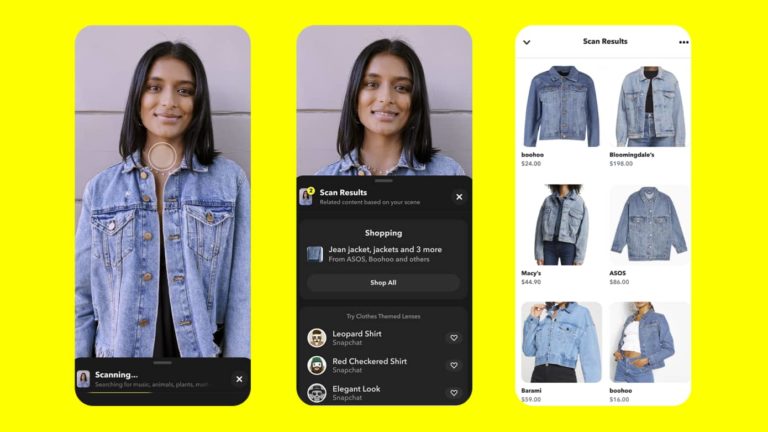

All of the above took a step forward with last week’s Snap Scan updates. It now recognizes more objects and products. Scanning a QR code is one thing, but recognizing physical-world objects like pets, flowers, and clothes requires more machine learning.

So far, Snap has built up this capability through partners. For example, its partnership with Photomath lets Snap Scan solve math problems. When users point their phone at a math problem on a physical page, Scan solves it, which is a natural use case for kids doing homework.

Its newest partnership, with Allrecipes, will let users scan food items in their fridge to get recipe suggestions. The new Screenshop feature will meanwhile surface product information and purchase details from retail partners when users scan style items in the real world.

Beyond identifying physical items, Snap Scan can also suggest relevant Snapchat Lenses. Its new Camera Shortcuts feature does this by examining a scene and matching it with the best lens. This tool gains utility as the library of Snap’s community lenses reaches into the millions.

Lastly, Snap Scan is now more accessible. It’s been moved to prime real estate in the Snapchat UX, right on the main camera screen. This should help it to gain more traction and condition user habits. Because visual search is still new and unproven, it needs that nudge.

Launchpoint

Zeroing in on the most notable update above, Snap wants Scan to be a launchpoint for shopping by letting users scan clothes for “outfit inspiration.” This could be visual search’s killer app as it makes the physical world shoppable — meaning both utility and monetization potential.

Beyond fashion, the same value proposition exists wherever products have unclear branding, pricing and availability. For example, wine is a complex product where pricing transparency is valuable. Visual search can also help qualify buying decisions with additional content like reviews.

These commerce-based endpoints, again, support visual search’s monetization potential. The use case carries the same user intent that makes web search so lucrative. In other words, consumers who actively scan products represent qualified leads for brands and retailers.

In that sense, visual search is a natural extension for tech companies built on ad revenue models. And the two companies noted in this article — Google and Snap — are exactly that. Adding to the list, Pinterest is intent on visual search given Pinterest Lens, which continues to grow.

Visual search advances Snap’s already-robust AR monetization, but the real play may be in its endgame: AR glasses. Future Spectacles will likely feature Snap Scan to discover the world and buy things more naturally. And that’s when visual search will really hit its stride.