Buried deep in Snap’s recent Partner Summit was a notable nugget. The company’s visual search tool, Snap Scan, now has 170 million users. Given that Snap’s monthly active users total about 500 million, roughly a third of its user base (about 34 percent) is engaged in visual search.

Visual search, for those unfamiliar, involves pointing your phone at an object to activate informational overlays that contextualize it. Visual search is just one of many developing flavors of AR, and it’s also being cultivated by Google (Google Lens) and Pinterest (Pinterest Lens).

These players haven’t revealed their user base figures, but our research arm ARtillery Intelligence estimates that Google Lens has 212 million users and Pinterest Lens has 119 million. Along with a handful of others, this makes the total global user base (de-duped) an estimated 403 million.

Meanwhile, visual search’s growth aligns with several factors. Millennials and Gen Z have a high affinity for the camera as a way to interface with the world, while brand advertisers are drawn to AR marketing. This makes it attractive to the above companies as a revenue growth play.

Shoppable Content

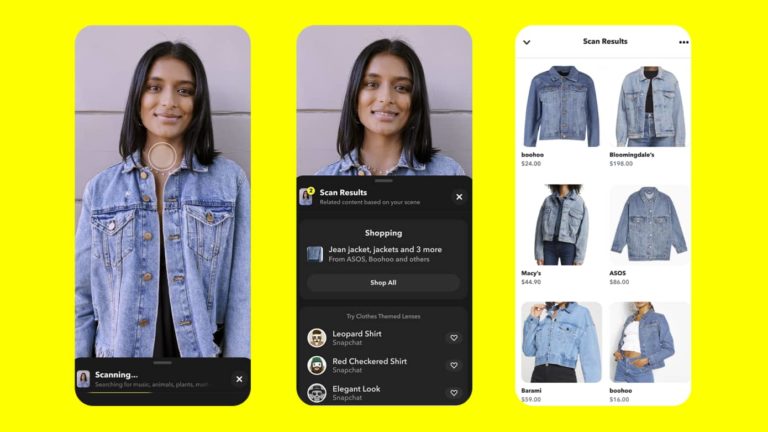

Back to Snap, visual search is a less-discussed component of its AR mix, usually overshadowed by the more exposed and popular Lens format. But it’s a key puzzle piece in the company’s continued expansion of “shoppable” content and, ultimately, AR ad revenue.

In fact, shopping is where visual search shines, perhaps even more than AR lenses. While lenses are fun and highly viral, visual search is more utilitarian and more aligned with shopping intent. In that way, it’s an extension of the high intent that makes web search so monetizable.

In fairness to lenses and their monetization potential, they currently generate the lion’s share of AR revenue for companies like Snap that offer paid lens distribution. But visual search could emerge as a formidable AR modality that challenges lenses as AR’s go-to shopping format.

This growth could be accelerated by parallel Snap moves. For one, it’s conditioning AR use cases that expand from the front-facing camera (selfie lenses) to the rear-facing camera (world lenses) to augment the broader canvas of the physical world. Visual search is an extension of this.

Meanwhile, Snap continues to broaden Scan capabilities and use cases. It does this through partners, with capabilities that include identifying physical products (with Amazon), solving math problems (with PhotoMath), and suggesting recipes given the items in your fridge (with Allrecipes).

Transactional Endpoint

One thing to notice in the above examples is the growth of product searches that have a transactional endpoint. Google Lens is doing something similar: seeding broad demand with pet and flower searches but increasingly expanding to products. The latter is where the money is.

But the question still remains: what types of shoppable products shine in visual search? Early signs point to items with visual complexity and unclear branding. This includes style items (“who makes that dress?”) and in-aisle retail queries. In all cases, it’s about object recognition and AI.

For example, Snap’s new Screenshop feature offers “outfit inspiration.” This plays out when users scan an outfit, which then activates a recommendation engine for the same or similar styles. This works with real-time scans of a worn outfit, or with photos you have saved in Snapchat.

The common thread is shopping. Pinterest (also intent on visual search) reports that 85 percent of consumers want visual info; 55 percent say visual search is instrumental in developing their style; 49 percent develop brand relationships; and 61 percent say it elevates in-store shopping.

Meanwhile, Snap Scan’s use cases continue to broaden, which traces back to Snap’s overall AR playbook. By evolving and investing in the underlying platform — while empowering partners and developers via APIs — Snap continues to engender more AR entry points and interest points.

Search Intent

Another fitting use case for visual search is local discovery. It could be a better mousetrap for finding out more about a new restaurant — or booking a reservation — by pointing your phone at it. The smartphone era has taught us that search intent is high when the subject is in proximity.

As we examine in our separate Space Race series, Google is primed for this local commerce use case, given the data it has assembled over years to power local search and mapping. But Snap is making moves to catch up, including Local Lenses and its recent pixel8.earth acquisition.

Also similar to Google, Snap is “incubating” visual search to accelerate traction. Its latest move is to plant Scan directly on the main camera screen for easier access. Add it all up and Snap continues to advance its position as a camera commerce staple. Expect a lot more of the same.