As you’ve likely heard, Snap held its annual Partner Summit last week, during which it made the splashy “one-more-thing” announcement that it’s launching AR-enabled Spectacles. As we examined at the time, this is a considerable moment in Snap’s AR lifecycle.

But the news was so big that it overshadowed several other notable AR announcements and updates. These are more evolutionary than revolutionary — and less prone to headlines — but are key updates in Snap’s AR mix. In fact, most of its keynote content tied to AR in some way.

So to cover all of those unsung moments, we pored back over the Partner Summit Keynote and are featuring the summary and embedded video for this week’s XR Talks. In fact, there were too many takeaways to fit in one article, so we’ll tackle it in two, starting here with Part I.

World Facing

Beyond the new AR Spectacles (see article above), there was a rapid-fire procession of AR-related updates and announcements from the Partner Summit Stage. Those include things that are directly and indirectly tied to AR, such as Lens Studio, Snap Map, and Stickers/Bitmoji.

Starting with Lens Studio, Snap announced version 4.0 with several new features such as Connected Lenses. Like Google’s Cloud Anchors and Microsoft’s Spatial Anchors, these let multiple users experience positionally-tracked lenses together from different vantage points.

This move represents yet another expansion from front-facing (selfie) lenses to world-facing ones. This makes sense as there’s a narrow range of things that go on one’s face. In that spirit, Snap is getting better at hand and body tracking, shown in Piaget’s watch try-on experience.

Snap’s latest addition is to let users position the camera for wide-angle shots (full body), while controlling fashion lens try-ons through hand gestures and voice. This lets them swipe through full virtual outfits from across the room and could engender all-new eCommerce use cases.

Meanwhile, the existing slate of brand marketing and lens-based commerce options is working. Gucci achieved 19 million lens activations for its shoe try-on. Eyewear retailer Clearly saw a 46 percent lift in page views from its lens, and American Eagle boosted sales by $2 million.

The Path to Scale

Building on all of the above, Snapchat is on a mission to gain scale. That’s exactly what it’s been able to achieve by productizing lens creation itself and spinning out Lens Studio to scale up production. As we’ve examined, this has been the catalyst in its AR flywheel effect.

Doubling down on that principle for brand advertisers, Snap is releasing an API so that they can integrate their product catalogs into Snapchat where they’ll be more discoverable and interactive as lens-based product try-ons. Sometimes self-serve is the path to scale.

Snap is also launching Brand Profiles which help consumer brands establish a more formalized presence on Snapchat where they can spark the above user interactions. This is coupled with more granular analytics in Lens Studio 4.0 so brands can see how lenses are performing.

The latter is particularly notable in that it’s not just a standard feature for ad platforms, but could be used as a market intelligence tool. In other words, insights from lens-based try-ons can inform brand marketing strategies or decisions about product pipelines and logistics.

Lastly, in terms of brand perks, Snapchat recently launched a creator marketplace to better match marketers with lens creators. This lowers friction for companies to test the AR marketing waters and, like all of the above, is meant to attract more brands to the platform.

Not Hot Dog

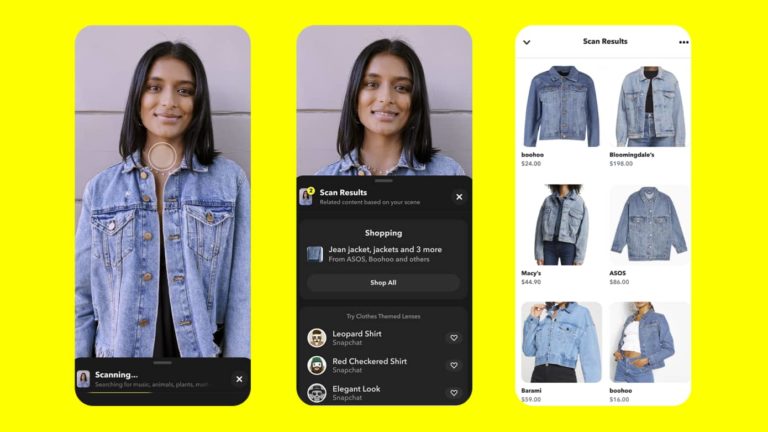

Moving from lenses to visual search, Snap Scan lets users find out about products by pointing their phone at them, much like Google Lens. And just like Google continues to “incubate” Lens by putting it front & center, Snap Scan is now available directly on the main camera screen.

Moreover, Snap Scan can spark other activities and interactions, making it a more attractive tool to discover things. For example, the new Screenshop feature lets users scan an outfit (live or via past photos) which launches a recommendation engine for the same or similar styles.

Beyond fashion and “outfit inspiration,” Snap Scan continues to develop a broader range of use cases, such as cooking. Through a partnership with Allrecipes, Snap Scan can identify food items (insert Not Hot Dog joke) and then recommend recipes that use those ingredients.

Speaking of broadened use cases, this goes back to Snap’s playbook to achieve scale. By evolving and investing in the underlying platform — while empowering partners and developers via APIs — Snap continues to engender more AR entry points and interest points.

And it’s working so far. As it often goes at Snap events, we got a fresh batch of figures. There are now 200,000 lens creators, two million lenses created, and two trillion (with a T) lens views to date. This, plus all of the above, validates once again that Snap is the king of consumer AR.

We’ll pause there and circle back in Part II to examine other AR-related announcements from Snap.