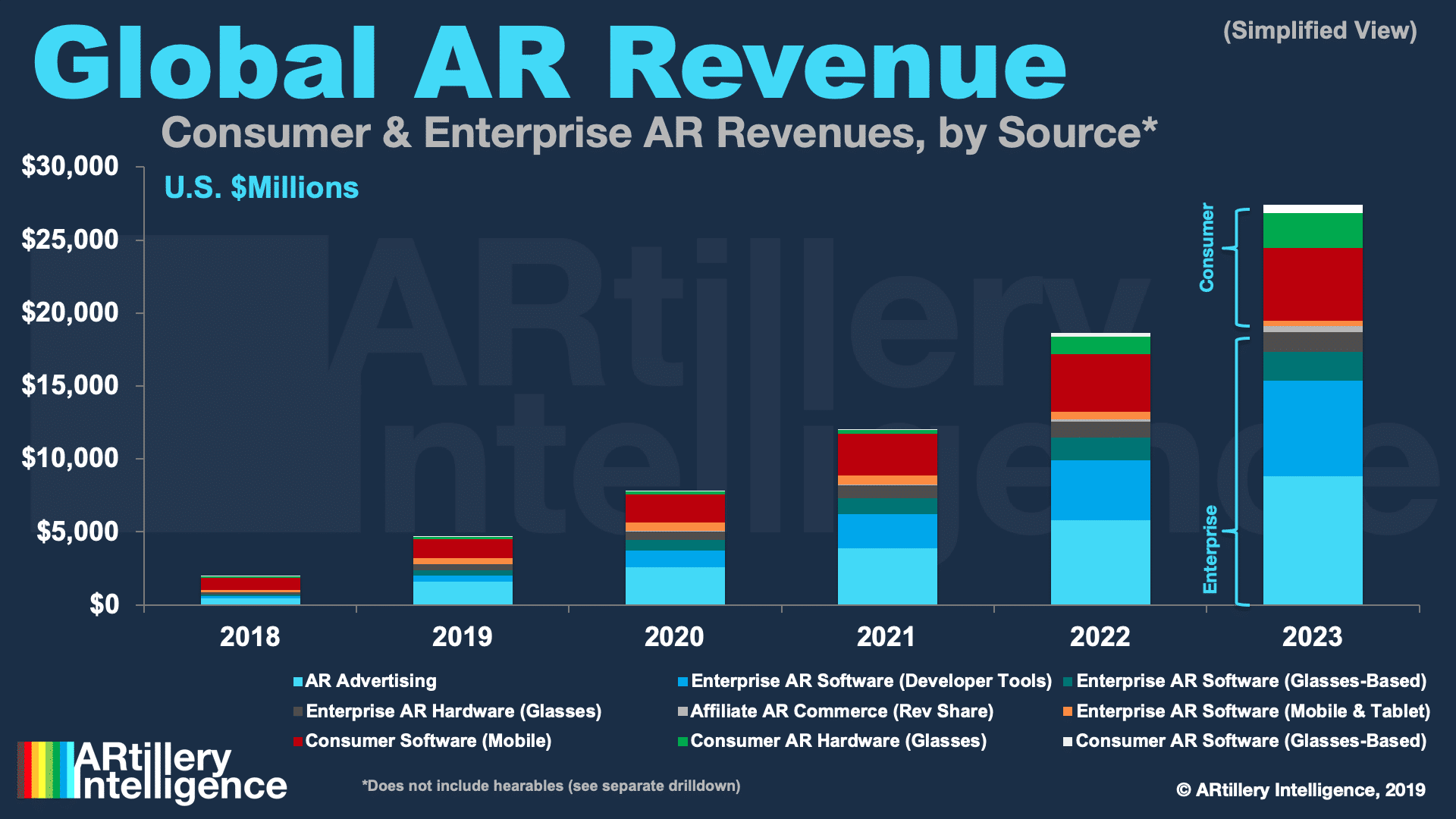

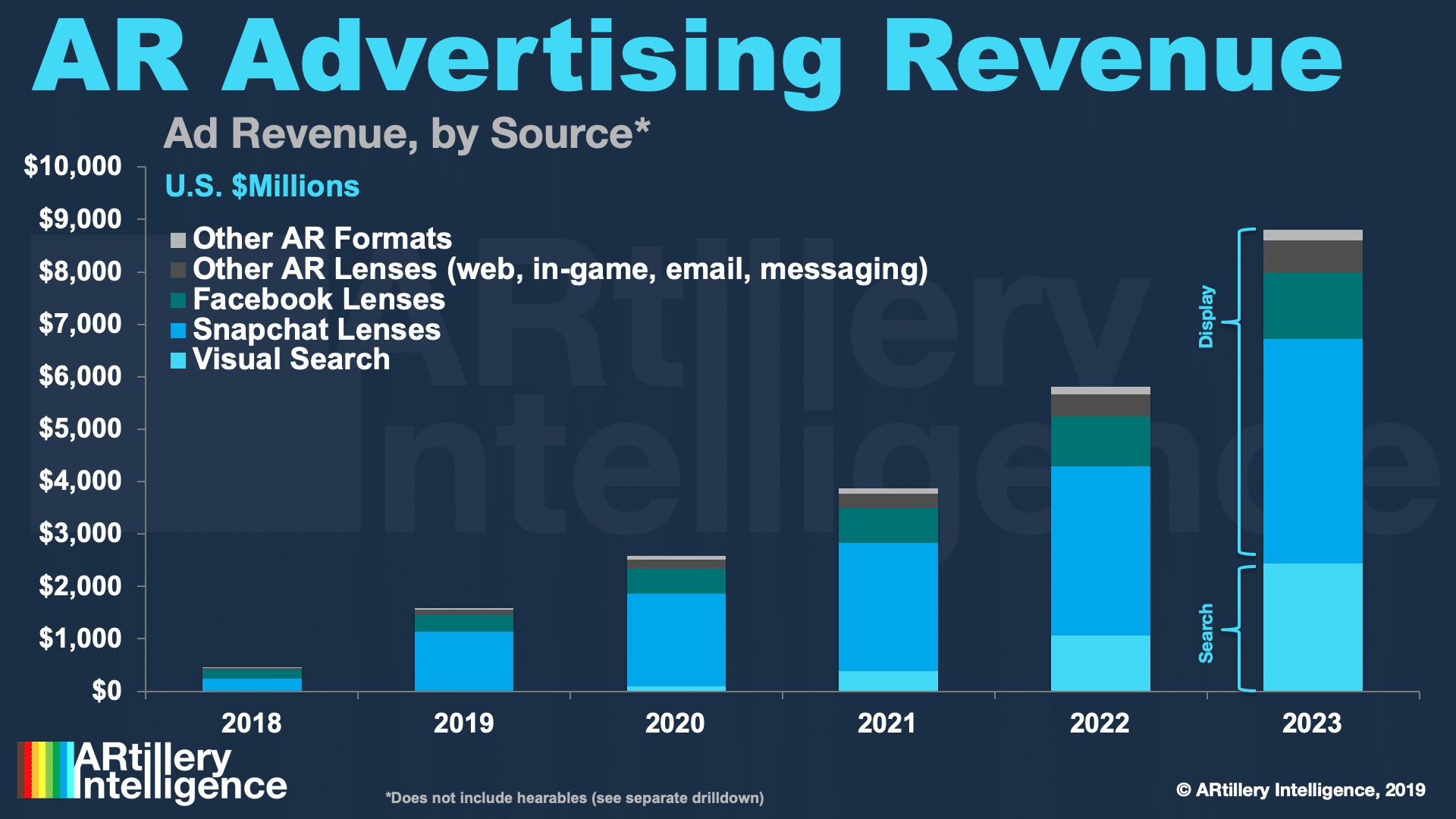

This post is adapted from ARtillery Intelligence’s report, Lessons From AR Revenue Leaders, Part I: Snap. It includes some of the report’s data and takeaways. More can be previewed here, and you can subscribe for the full report.

A lot can be learned from consumer AR’s early leaders. What are they doing right? How are they engaging users? And how are they making money? These are key questions in AR’s early stages, as there’s no standardized playbook quite yet. This results in lots of experimentation.

This includes pinpointing fitting AR use cases, as well as more granular strategies around user experience (UX). What types of AR interactions resonate with consumers? And what best practices are being standardized for experience and interface design?

Equally important is the question of AR monetization and revenue models. Just as user experience is being refined, questions over what consumers will and won’t pay for are likewise being discovered. The same goes for brand spending behavior for sponsored AR experiences.

Picking up where we left off last week, Snap is one company leading the way with all of the above. It has built a real revenue-generating business around AR lenses. A lot of this comes down to a virtuous cycle: user engagement attracts lens developers, more users… then advertisers.

Virtuous Cycle: Developers

To date, 600,000 AR lenses have been created on Snapchat, and the number of lens creators grew 20 percent in Q3 2019, according to the last reported figures. This follows Snap’s move to open the Lens Studio platform and make it increasingly accessible. Some creators can make as much as $40,000 per month.

This engenders a sort of virtuous cycle for AR lens engagement, creation, and ultimately revenue. A greater volume of lenses attracts users and boosts engagement rates. That growing audience attracts more lens developers, which further expands the library and, in turn, attracts more users.

That’s a notable flip from Snapchat’s early AR lens experiences, which were limited in volume and gated by a highly curated approach. Like Facebook, Snap has since leaned into the idea of scaling up lens development with a steady flow of tools for lens creation, distribution and monetization.

That includes creator profiles that give developers a presence to promote their work and make it discoverable. There are also new templates for popular formats such as lenses that augment hands, bodies and pets. These templates handle the heavy computational lifting and simplify lens creation.

In the same spirit, Landmarkers templatize AR lens creation for high-traffic places like the Eiffel Tower. Developers can use those templates to infuse their own creativity. Templates also mean a focused set of subjects for which Snapchat has done advance work for mapping and tracking.

Landmarkers are also smart in that Snapchat has concentrated its efforts in a smaller subset of locations, thus sidestepping the AR cloud challenge of recognizing every building and street in the world. It instead focuses on high-traffic areas that get the most bang for the spatial-mapping buck.

Other developer tools include 3D paint, launched alongside the new AR Bar. This lets users annotate live scenes with stylistic overlays, essentially making anyone a lens developer. This aligns with the pattern of crowdsourcing creation, while feeding and fueling socially oriented AR.

Virtuous Cycle: Users

Refined experiences like Landmarkers translate to more robust AR for users, the counterpart in the virtuous cycle. Snapchat is also expanding capabilities beyond selfie lenses to utilize the rear-facing camera and augment the outside world, thus enabling a greater range of use cases.

So far, these non-selfie AR activations include World Lenses, Snapcodes (AR activations from branded QR codes) and song identification from Shazam. Through a partnership with Amazon, it’s also moved into visual search to identify physical-world products with your mobile camera.

Snapchat has also moved into a broader range of AI-fueled lenses with its Scan feature. This applies object recognition and then adds context-aware AR animations to a given scene. So GIFs from Giphy can serendipitously animate related scenes, or math problems can be solved on the fly.

The latter is perhaps the most compelling feature and combines a sort of novelty wow factor with actual utility. Snap also notably beat Google to the punch in what is a very “Googley” feature. Google has since launched a Google Lens feature that totals and tallies restaurant checks.

“Now you can scan anything, and we’ll show you the most relevant lenses. Just press and hold the screen and we’ll show contextually relevant gifs,” said Snap’s Bobby Murphy at a developer event. “If you’re trying to solve a math equation, we’ll help you do that with our partner Photomath.”

These moves extend Snap’s AR persona from lenses to more utilitarian functions. That’s evident in solving math problems – a lighthouse feature that will lead to other creations. As we’ve examined, mundane utilities like search and navigation are where AR killer apps could germinate.

And just like Google Lens, the magic isn’t on the front end but in the computer vision and AI that recognize objects. Snapchat is doing that through partners like Photomath, but could scale up its own AR cloud and image recognition as the machine learning improves through high-scale use.

The Endgame: Advertisers

The above features aren’t monetized directly but they indirectly accomplish an important end. That is, they create a more attractive experience which grows user volumes and engagement levels. That in turn attracts brand advertisers to Snapchat’s AR revenue driver: sponsored lenses.

In addition to audience reach, the user-facing features outlined above unlock new capabilities for brand advertisers to engage users. They essentially give advertisers a bigger toolbox for product animations, involving both faces (cosmetics, etc.) and the larger canvas of the physical world.

“The Snapchat camera allows people to use computing in their natural environment, the real world,” said Evan Spiegel at Snap’s partner summit. “By opening the camera, we can create a computing experience that combines the superpowers of technology with the best of humanity.”

This appeals to a lot of creative professionals in the marketing departments of brand advertisers, or at ad agencies. Previously stuck within the confines of 2D media, they can now flex their creative muscles and see real results through immersive product try-ons in AR lenses.

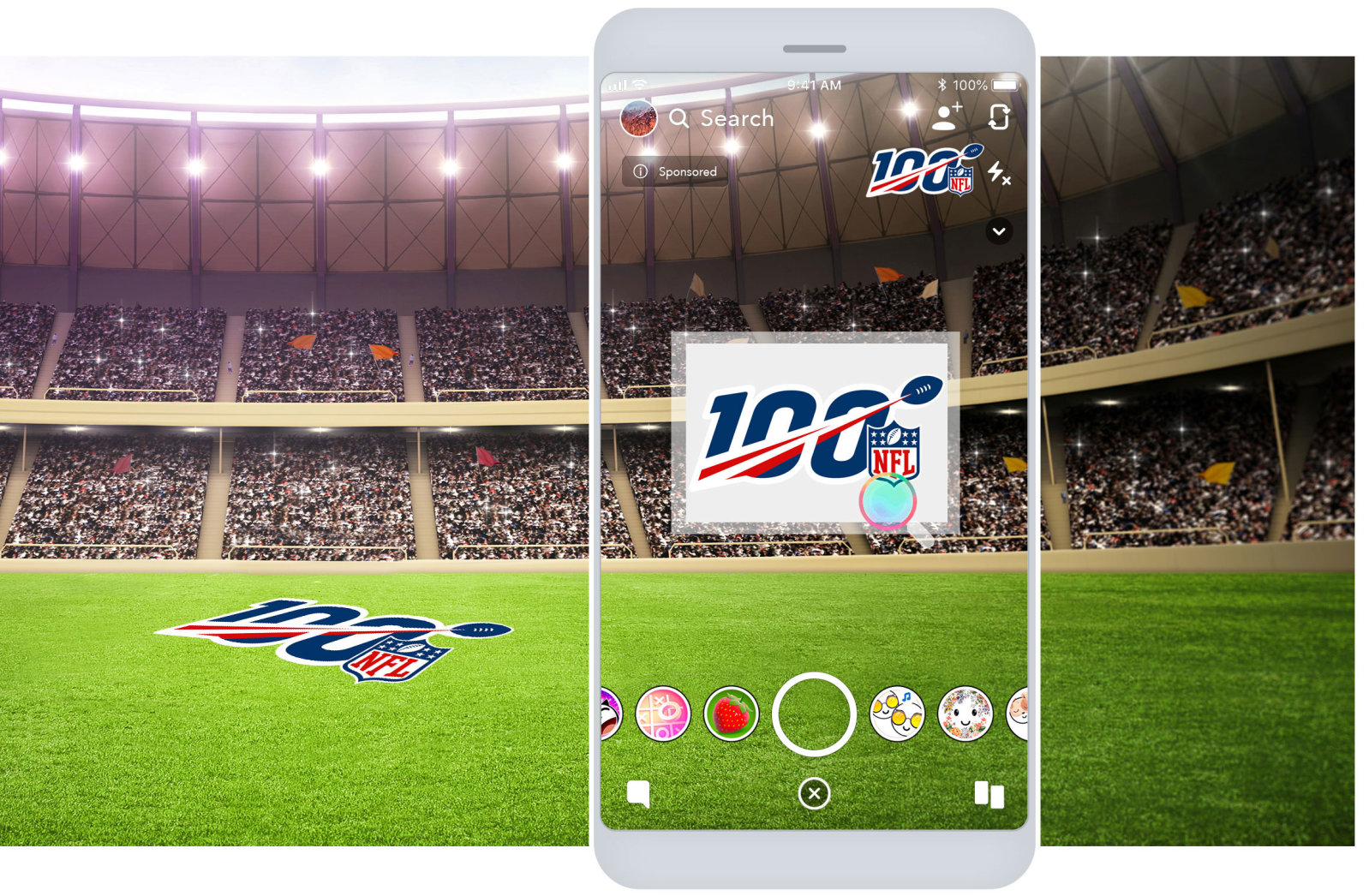

One example is the NFL’s lenses for last year’s Super Bowl LIII, featuring selfie lens animations for each team’s fans to show their support. The lenses were viewed 303 million times, which is 3x the television viewership of the game itself. This embodies AR’s potential for true reach and audience scale.

Snapchat derived an estimated $1.114 billion from AR advertising last year (see above). It also attributes its revenue growth and 2019 stock market rebound to AR. Beyond that, it broadly sees AR as a core part of its business as a camera company. So it continues to double down on it.

By doing so, Snapchat is “following the money.” This aligns with our ongoing concept of triangulating tech giants’ AR directions by examining financial motivations. For Snapchat, AR will be a key revenue diversification play to satisfy Wall Street and its own “camera company” vision.

Stay tuned for more report excerpts, and check out the full report here.

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.