XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, as well as narrative analysis and top takeaways. Speakers’ opinions are their own.

Snap has labeled itself for a while as a camera company. That designation naturally led into the current wave of mobile AR excitement, likely by design. But even as AR slogs through what some call the “trough of disillusionment,” Snap is doubling down on its vision for an AR future.

This culminated in yesterday’s Snap Partner Summit, where several AR features rolled out (video below). At a high level, the new functionality addresses developers, users and advertisers. In fact, the value chain runs in that order in terms of driving AR revenue for Snap (more on that in a bit).

Starting with developers, Snap has come a long way from initially keeping AR lens design in-house. With Lens Studio, it opened up lens creation to developers and thus scaled up volume and creativity. Now it’s taking another step in giving developers ammunition to create AR lenses.

That includes creator profiles that give developers a presence to promote their work and make it discoverable. There are also new templates for popular formats such as lenses that augment hands, bodies and pets. This handles the heavy computational lifting and simplifies lens creation.

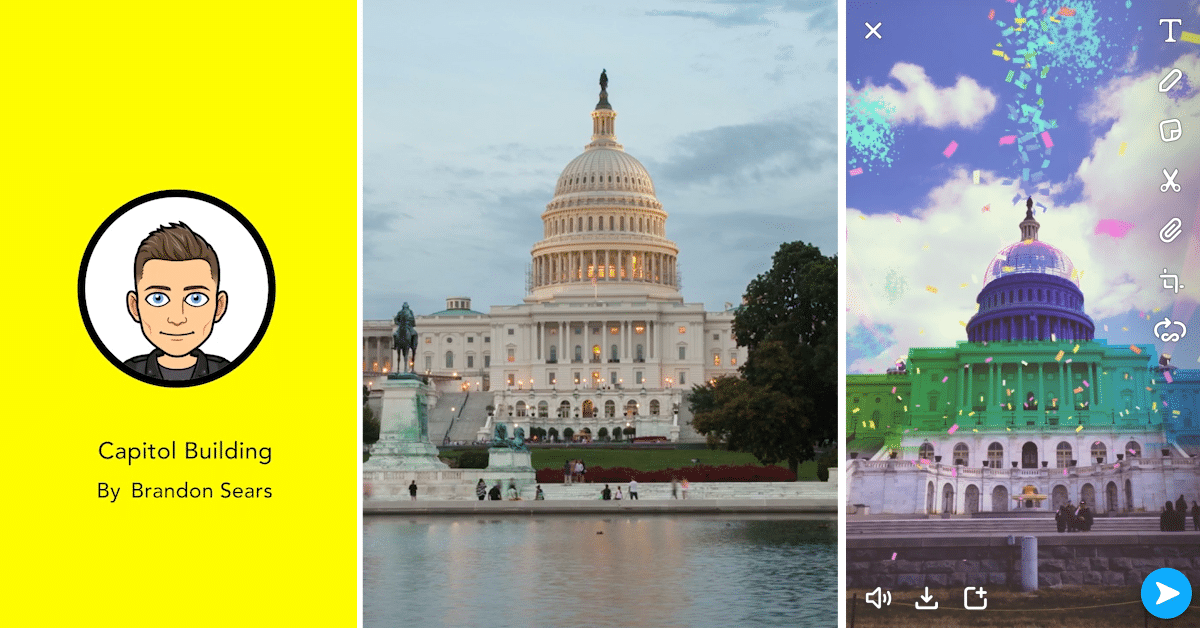

In the same spirit, “Landmarkers” templatize AR lens creation for places like the Eiffel Tower or Flatiron Building. Developers can use those templates to infuse their own creativity. Templates also mean a focused set of subjects for which Snap has nailed the AR mapping and tracking.

In other words, Snap has intelligently concentrated its efforts in a smaller subset of structures, thus sidestepping the AR cloud challenge of recognizing every building and street in the world. It instead focuses on high-traffic areas that will get the most bang for the machine-learning buck.

That refined experience also translates to tighter AR experiences for users, the second constituency mentioned above. For them, Snap is extending capability beyond selfie lenses, using the rear-facing camera to augment the world and thus open up a greater range of use cases.

To be fair, Snapchat already had non-selfie AR such as World Lenses, Snapcode activations, song identification from Shazam (audio AR), and visual searches of products. The latter, which identifies products in the real world through a partnership with Amazon, is a key step that we’ve examined.

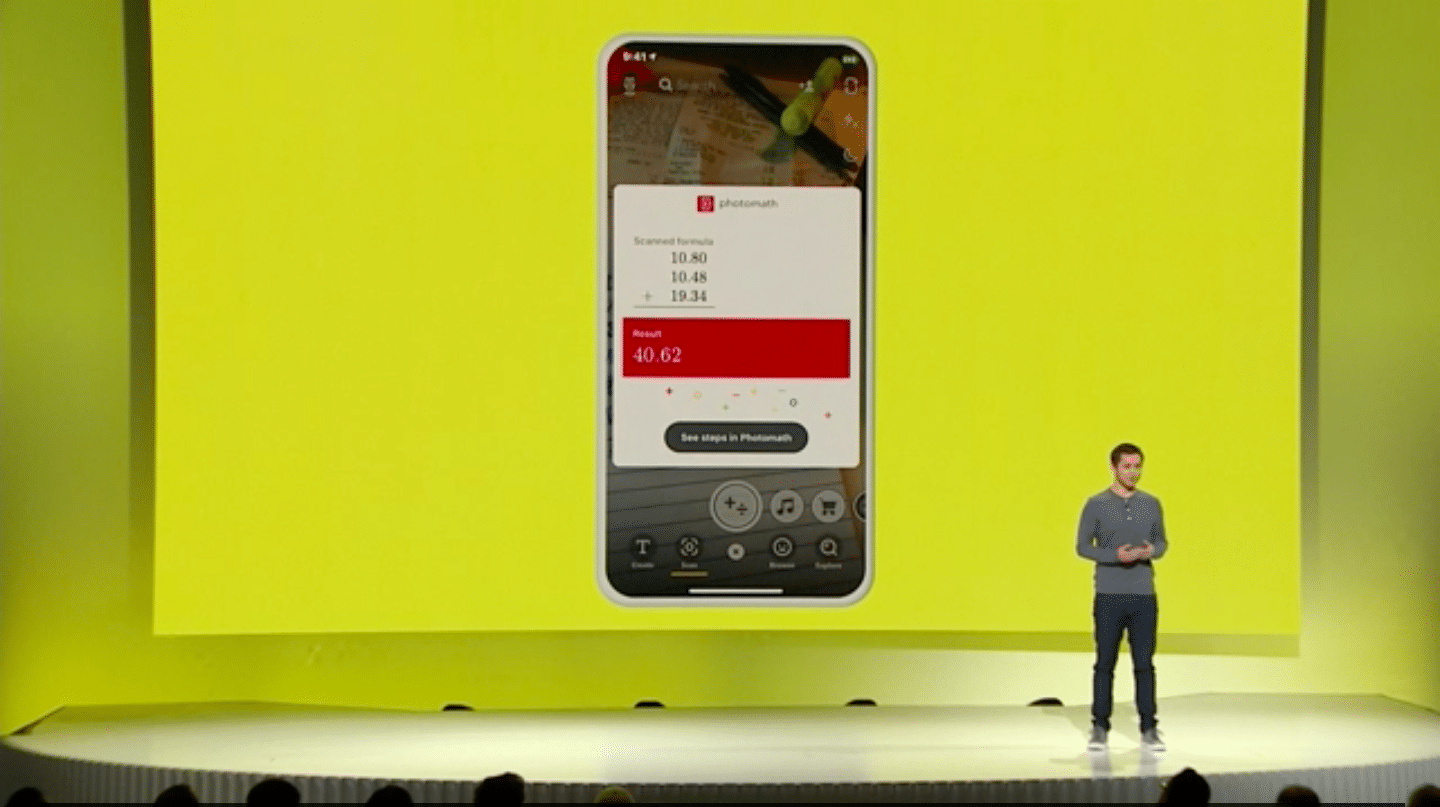

Now Snap moves to a broader range of AI-fueled AR with its Scan feature. Much like visual search, it uses object recognition and then adds context-aware AR animations. So GIFs from Giphy can serendipitously animate related scenes, or math problems can be scanned and solved on the fly.

“Now you can scan anything, and we’ll show you the most relevant Lenses. Just press and hold the screen and we’ll show contextually relevant gifs,” said Snap co-founder Bobby Murphy. “If you’re trying to solve a math equation, we’ll help you do that with our partner Photomath.”

This extends Snap’s AR persona from selfie masks to more utilitarian functions. That’s evident in solving math problems, a lighthouse feature that will likely lead to lots of other creations. As we’ve examined, mundane utilities are where AR killer apps will germinate, à la Google Lens.

And just like Google Lens, the magic isn’t on the front end but in the computer vision and AI that recognize objects. Snap is doing that so far through partners, but it could scale up its own AR cloud and image recognition as the machine learning improves through high-scale use.

That usage will accelerate with the new lens experiences outlined above, in combination with Snap’s AR-forward audience. Seventy percent of Snapchat’s 186 million daily active users activate AR lenses daily, and lenses have been viewed 15 billion times. No one else has that much AR engagement.

Back to visual search, it’s really just an evolution of marker-based AR, which Snap was already doing through Snapcodes. But object recognition now brings AR activation to a broader range of real-world “markers.” That includes 2D media like movie posters or magazine ads.

Though marker-based AR is often shunned in AR circles as being simplistic, we believe it will be a key factor in AR’s next era. Things like posters are not only plentiful (in ad inventory terms) but they are an adoption accelerant in expressly prompting users to scan them for AR animations.

But most importantly, they’re highly monetizable. And that’s an area where Snap has everyone beat. AR lenses are the most lucrative form of AR and Snap has an early lead. According to our projections, Snap derived $236 million of the $408 million spent on AR advertising in 2018.
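To put those two figures in proportion, here is a minimal sketch of the share they imply. It uses only the dollar amounts quoted above; the variable and function names are illustrative and don't come from any Snap or ARtillery source.

```python
# Figures as quoted in this article (projections, in millions of US dollars).
snap_ar_ad_revenue_musd = 236   # Snap's projected 2018 AR ad revenue
total_ar_ad_spend_musd = 408    # projected total 2018 AR ad spend


def share_percent(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100 * part / whole


snap_share = share_percent(snap_ar_ad_revenue_musd, total_ar_ad_spend_musd)
print(f"Snap's share of 2018 AR ad spend: {snap_share:.1f}%")  # prints 57.8%
```

By these projections, Snap alone accounts for more than half of 2018 AR ad spend.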

In fact, when considering AR revenue leaders, people often point to (as we have) Pokemon Go and its $2.3 billion in cumulative in-app-purchase revenues. But not all of that revenue is directly attributable to AR (it’s just one component of the game), whereas Snap’s branded lens revenue is all AR.

And that’s the endgame for all of the above. Users are engaged longer through compelling AR, thus attracting brand advertisers. Brands meanwhile get a bigger toolbox for product animations. And ad inventory grows from faces (cosmetics, etc.) to the grander mosaic of the physical world.

That brings us full circle to doubling down on AR. By doing so, Snap is following the money. To be fair, it’s less following than creating revenue where few others have. AR will be a key revenue diversification play to satisfy Wall Street in the near term, and to advance Snap’s vision in the long term.

“The Snapchat camera allows people to use computing in their natural environment, the real world,” said Evan Spiegel from the stage. “We believe that by opening the camera, we can create a computing experience that combines the superpowers of technology with the best of humanity.”

See the full presentation below.

https://youtu.be/yifzT3n0Ps8

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.

Header image credit: Snap, Inc.