A question continues to be asked in AR circles: what are the points of intersection between AR and AI? One answer to that question comes from brain science, as the convergence of these technologies holds ample potential to transform the way we perceive and interact with ideas.

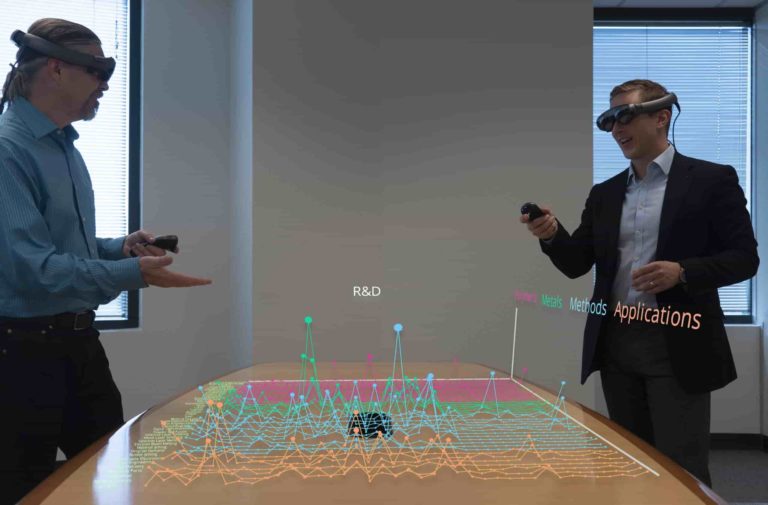

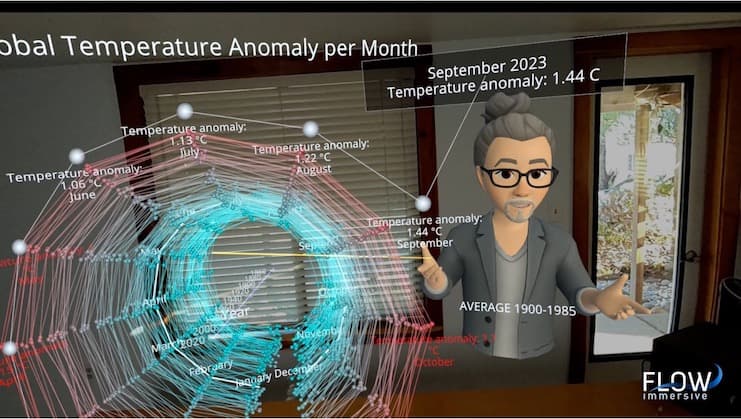

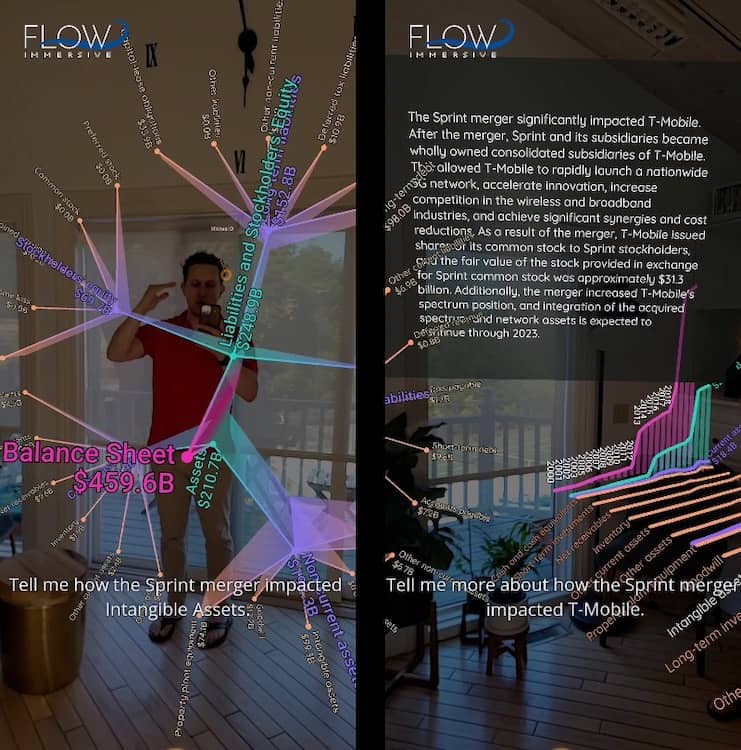

To project that onto a more concrete scenario, imagine an executive parsing sales data. Instead of viewing flat charts on a screen (the predominant approach today), the exec walks around a virtual sales map, zooms into specific regions, and is served real-time predictions and insights on demand. They expand specific graphical details and activate insights through natural-language verbal prompts. AI provides the control interface and the insights, while AR does the visual heavy lifting.

For 8 years, I’ve been creating something similar: a vision brought to us through several SciFi movies that feature interactive AR visualization. It’s like Iron Man, where Tony Stark creates a new element to power his suit using his AR-powered Jarvis supercomputer (maybe a new definition of the commonly debated “future of work”).

In these contexts, AI makes AR make sense. While we’re at it, the inverse is also true: AR helps AI make sense. In the context of data visualization, AI can process massive amounts of data quickly, identify patterns, and present insights. On the other hand, AR provides an interactive, immersive platform to visualize these insights in a 3D space or the real world.

AR Needs AI

Our brains are remarkable information processors, capable of making sense of complex data and creating meaningful connections. However, traditional information presentations often fall short of tapping into the brain’s innate processing power. PowerPoint is a good example: each slide disappears from view without showing its relationships to past or future slides. Our brain has to work extra hard to make these connections because the brain’s hippocampus can’t create spatial connections to disappearing non-things.

But in AR, we can see these relationships with data points, words, and connection lines. We can even “cognize” beyond our immediate field of view because the brain maintains a model of our 360-degree physical space, even if it is only available with a turn of the head.

But AR still has challenges: even if it had wider FOV and retina resolution, it doesn’t feel right to show complex interfaces floating in space where they clutter too much of our real world. Buttons in space are not going to do it. But with the combination of AI and AR we can interact with digital content in a natural and seamless manner using voice commands that have become newly useful because of large language models. A voice interface, especially one with a broad set of commands for manipulating data, has been the missing piece for a massively usable application. AR without AI has always been handicapped… but not anymore.

An AI interface further increases interactivity, giving each participant the ability to personalize the communication for themselves, increasing relevance, and therefore value. In fact, as SciFi movies have shown us, visualizing our data and information in 3D in our world should be providing us with the “cool” feeling of mastery and control, and these combined technologies do that. I often say that the definition of “cool” is “mastery and control”. AI with AR gives us that magic while providing business value.

AI Needs AR

But the connection goes even deeper because AR is equally vital to AI: it provides the missing piece of the puzzle when it comes to data visualization and comprehension. Chat-based LLMs make this comprehension problem worse: they can generate great tomes of words, which can be very helpful, but they don’t activate our visual brains to build clear mental models.

While AI algorithms excel at extracting insights from complex datasets, the challenge lies in effectively presenting this information to the human mind. This is where AR’s visualization capabilities come into play, transforming abstract data into tangible and visually stimulating representations.

Human brains are amazing tools for building 3D mental models, but it is a lot of work for the brain to translate words into these models. In a lot of ways, our brains build 3D mental models like a branching tree. AI generates ideas, like fruit, and AR gives you the structure to hang each idea-fruit on a branch, where it has the spatial relationships that create meaning. Our hippocampus loves spatial information, so ideas placed this way stick in long-term memory.

And here’s the real kicker: these mental models need to be shared with your audience or team, and showing colleagues the model is so much easier than describing it with a bunch of words. By externalizing our mental models using AR, we’re essentially making our thought processes tangible and shareable. This has the potential to revolutionize collaboration and communication. Instead of trying to explain a complex concept verbally or through 2D charts, teams can literally walk someone through their thought process in an interactive 3D space. This can lead to better alignment, understanding, and quicker decision-making. What is that worth to you?

Force Multiplier

The implications of this vital connection between AI and AR are profound. From education to healthcare, manufacturing to marketing, the integration of these technologies can revolutionize the way we work, learn, and interact with information. Students can engage in immersive and interactive learning experiences, doctors can visualize medical data with unprecedented clarity, and businesses can gain deeper insights into their operations, leading to more informed decision-making.

This is the force multiplier that AR has been waiting for.

Jason Marsh is Co-Founder & CEO of Flow Immersive.
