This post is adapted from ARtillery Intelligence’s latest report, Social AR: Spatial Computing’s Network Effect, Part II. It includes some of the report’s data and takeaways. You can preview more here and subscribe for the full report.

A big part of reaching AR’s potential is to empower developers to build AR experiences. Facebook’s Spark AR platform is meant to lower barriers and accelerate AR adoption by seeding more experiences. And that’s done by making it easier to develop AR on ubiquitous smartphones.

“Because it’s delivered through the Facebook camera, you have potential to reach 1.5 billion people,” said Facebook’s Elise Xu at AWE. “We want to expand [by] growing the inventory of AR content and giving developers ability to make AR experiences [for] more people and channels.”

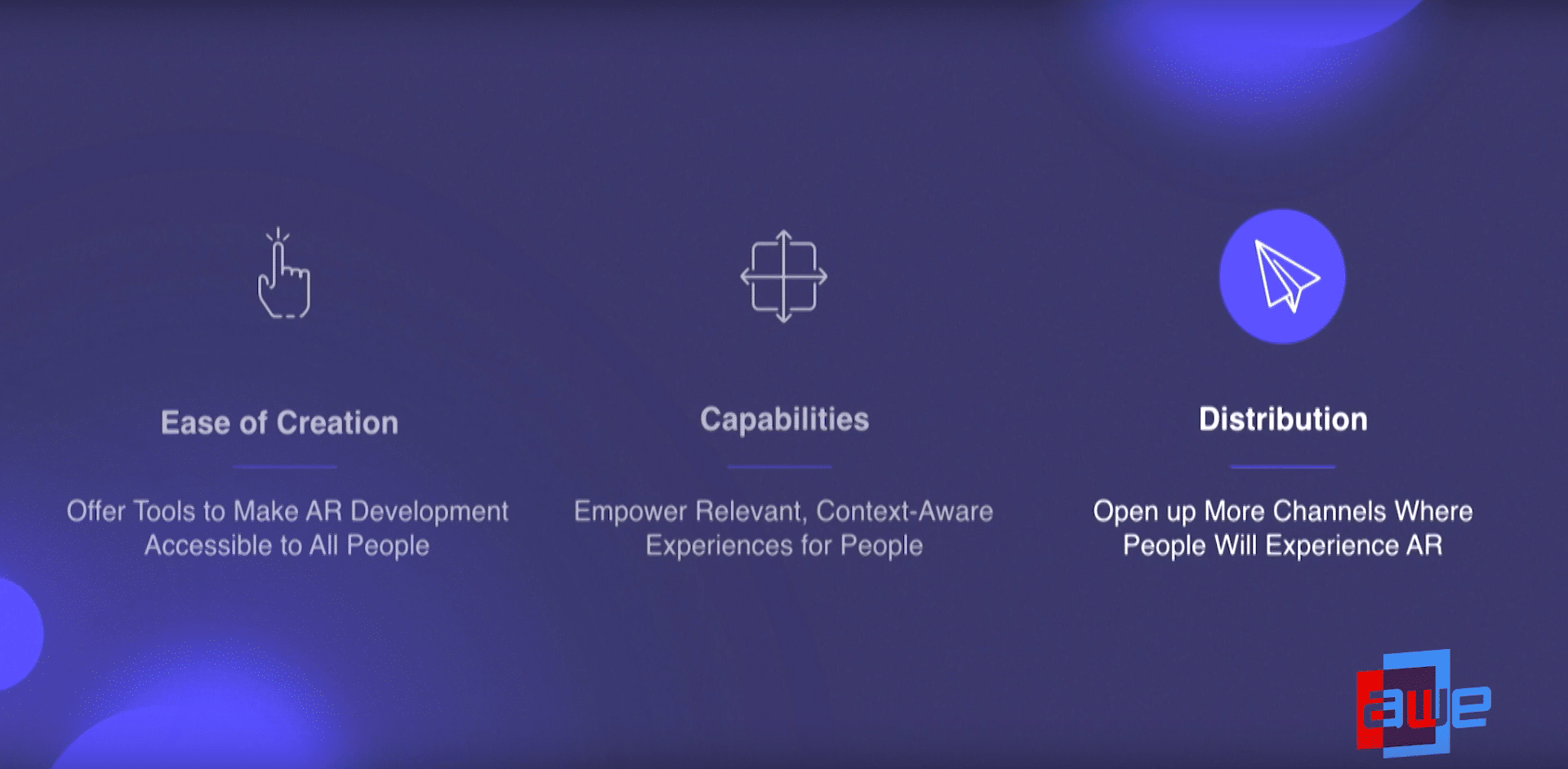

To clarify, 1.5 billion is an installed base for Facebook, not active AR users. But Facebook plans to penetrate deeper in three ways: easier AR creation, more capabilities and greater distribution. For creation, its Patch Editor utilizes a visual programming interface for drag & drop functionality.

This means programming languages such as JavaScript aren’t required to make AR experiences in Spark AR Studio. And it’s doubling down on that strategy by making it easier to import AR graphics. A partnership with Sketchfab, for example, will make it easy to import thousands of 3D objects.

“There’s a library in Spark AR Studio that contains a collection of 3D models pre-made from Sketchfab,” Xu said on stage. “We’ve already vetted them to make sure that they’re compatible with [Spark] AR Studio and you can just browse them and add them directly into your projects.”

Context-aware Experiences

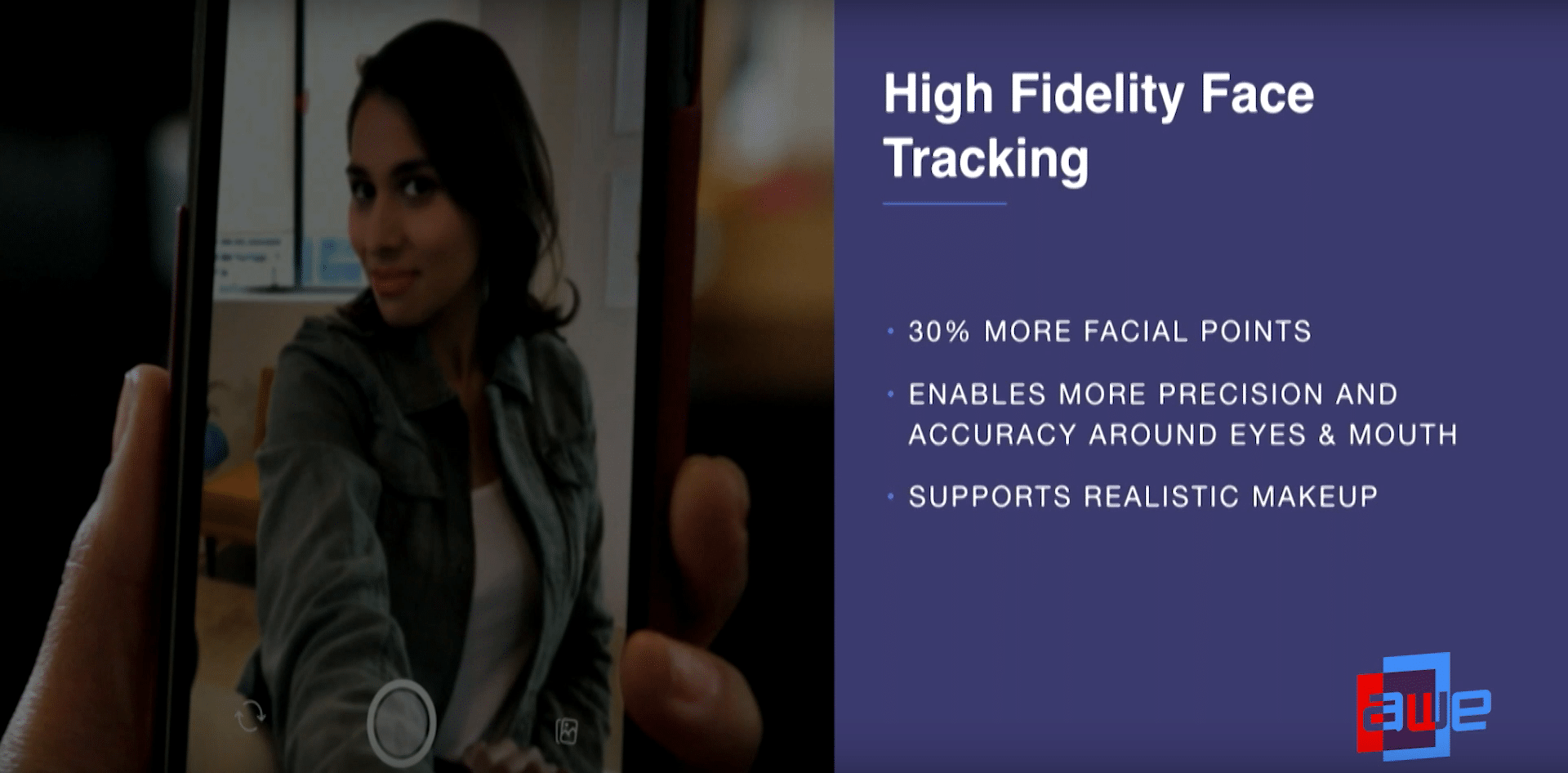

As for the second goal – AR capabilities – Facebook is evolving its interactions for augmenting people (front-facing camera) and the world (rear-facing camera). For the former, it’s also making advertiser-friendly enhancements, such as better face tracking for trying on style items.

“We launched a high-fidelity tracker that tracks 30 percent more points on the face,” said Xu. “It enables more precision accuracy around areas like the eyes and the mouth, and this is important for realistic makeup effects…. one of the major verticals we’re tackling with that is cosmetics.”

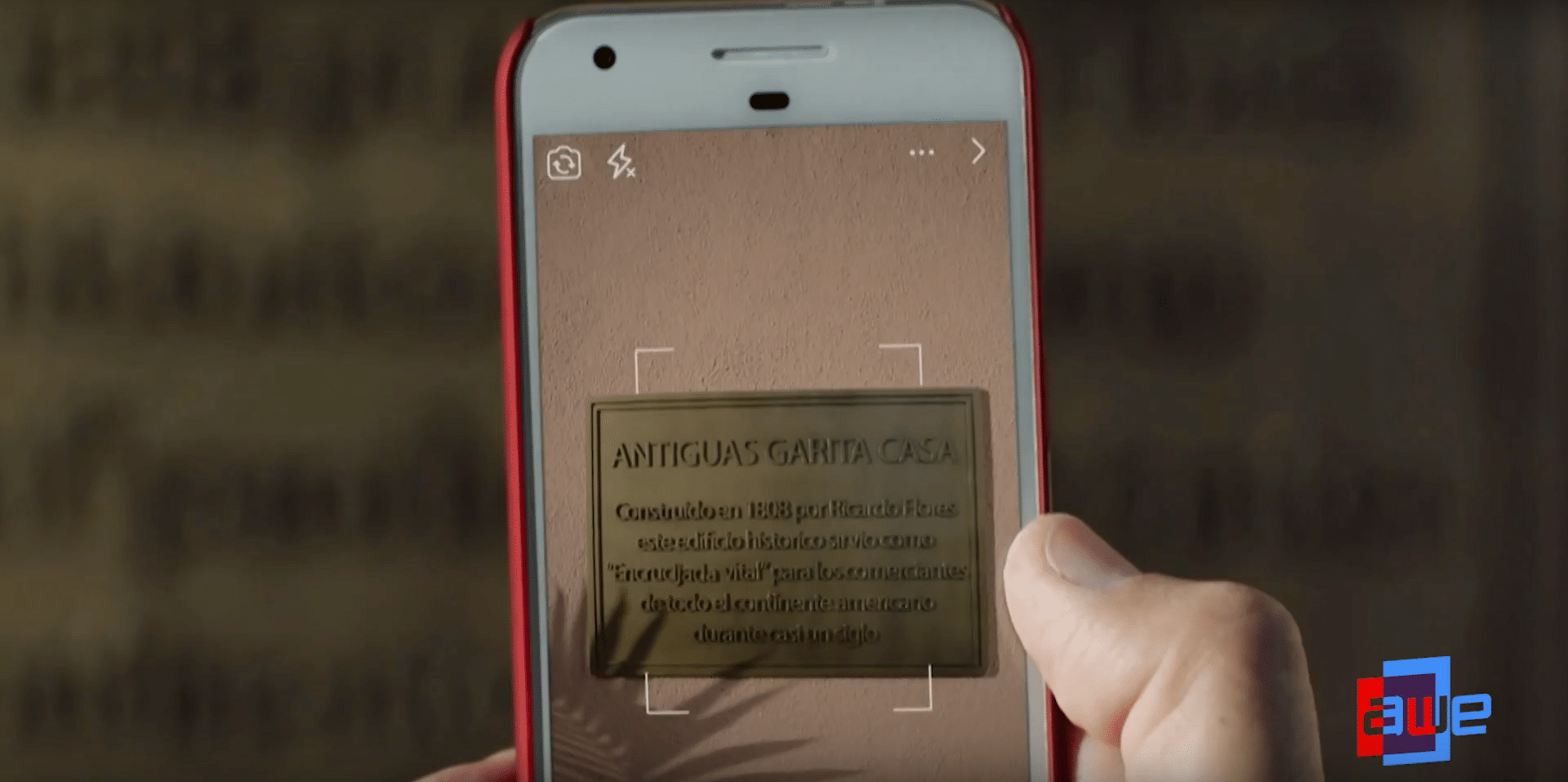

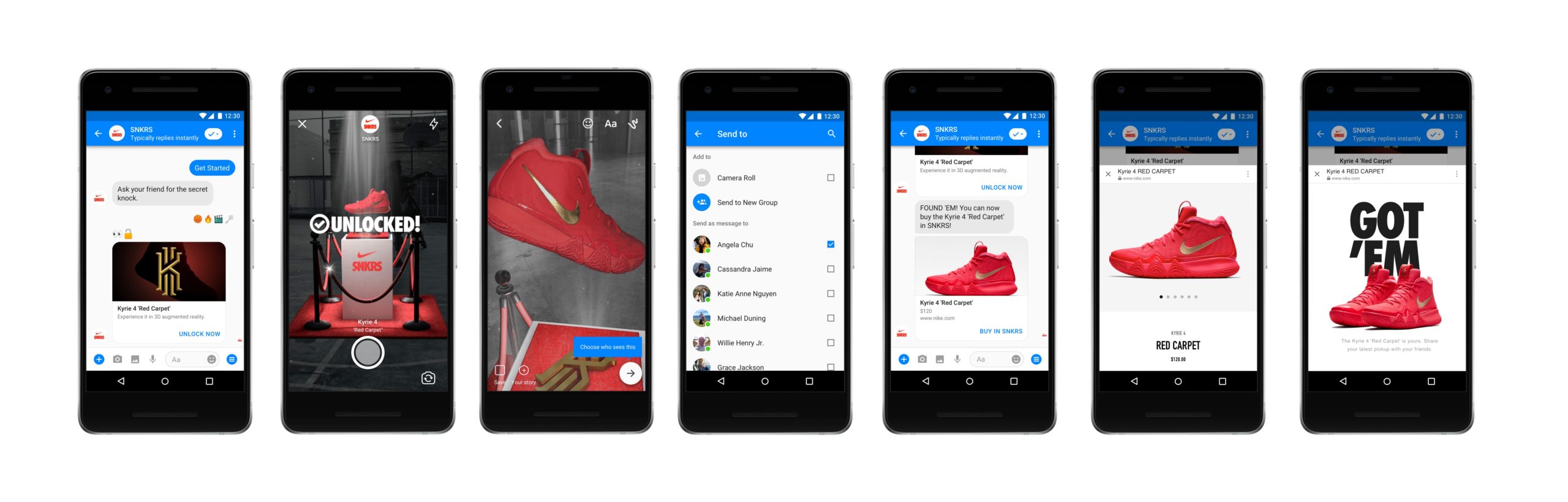

As for outward (rear-facing camera) effects for augmenting the world, Facebook’s Target AR feature is a sort of marker-based approach to activate 3D content on any 2D plane. This is meant for things like pop-up animations on museum placards, or other ad-friendly use cases.

“The 2D planar object could be a sign… but it could also be product labels… it could be illustrations in books,” said Xu. “Eventually we want the camera to be able to understand your world. And the more it’s able to do that, the more it’s able to give you context-aware experiences.”

Facebook’s Location AR meanwhile works towards geographically-anchored AR graphics that carry location relevance. This will be a key value-driver in AR, tied to the concept of geographic scarcity. And like the above moves, it’s advertiser-friendly considering location-based marketing.

“As AR evolves and expands, it’ll become more important for these experiences to be location relevant, and to be particularly tied to certain locations so they feel like they’re part of that place,” said Xu. “Location can be as broad as a country or as specific as a particular address.”

‘Gram it

Last among Facebook’s over-arching AR initiatives is the third goal: broadening distribution. This is a numbers game to attract developers who are deciding where to apply resources. And the next frontier for AR among Facebook’s properties, as mentioned earlier, is the mighty Instagram.

“A big push this year has been bringing our AR platform to the Instagram camera,” said Xu. “The next phase is opening it up to more creators … we’re going to have our first batch of creators who are not already known brands or influencers and see what they come up with.”

Clearly, the key theme through all these moves is lowering barriers to AR creation and distribution. That goes for both independent developers and advertisers. The former create content that grows Facebook usage and engagement, while the latter bring in the dollars. And it’s working so far…

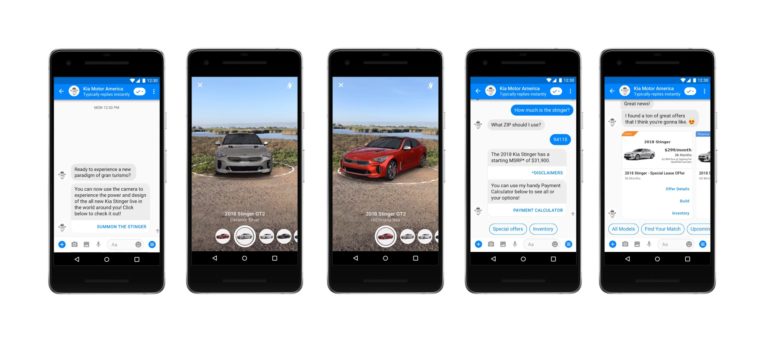

“When A/B tested against non-AR ads, [AR] drives significantly more conversions,” said Xu. “The next step is making the creation of these assets far easier and cheaper. One potential way to do that [is] a tool where advertisers simply upload 3D models and we auto-generate AR effect for them.”

Lighthouses

Building on the above opportunities, Facebook is taking AR development seriously. That goes for the tools it’s building for developers in the Spark AR platform, as just explored. But it also applies to the experiences it’s developing internally to inspire developers with use cases that work.

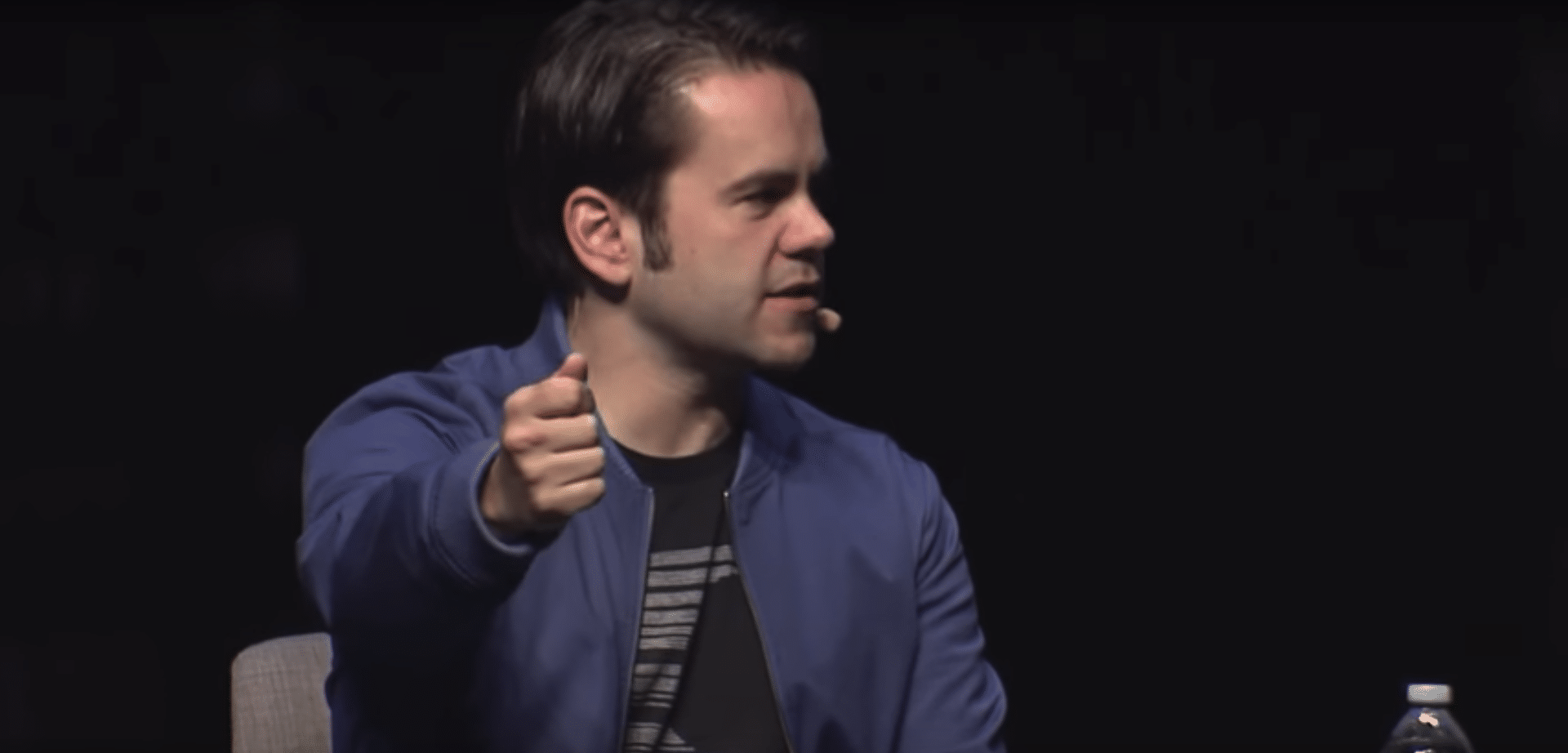

According to Facebook Camera lead Ficus Kirkpatrick, a platform like Facebook has to strike a balance between guiding developers with AR best practices and letting them experiment. He says it does this by seeding the ecosystem with a few proven “lighthouse” AR experiences.

“It’s really important that we as the platform set examples of what can be done,” he told TechCrunch on stage. “It doesn’t foreclose any opportunity to do something new and creative, and it does maybe inspire people who need that initial push to start from a new jumping-off point.”

One example of a “lighthouse” is Story Time in Facebook Portal. It adds AR animations like selfie lenses for adults telling stories (think: grandparents) to children remotely. Super Ventures’ Tom Emrich heralds it as a “trojan horse” for AR in the home. Other lighthouses involve commerce.

“In commerce in particular, we’re running some interesting tests where you can try on sunglasses before you buy them, and you can try on makeup before you buy it,” said Kirkpatrick. “Cases like that are interesting and solve problems for people and maybe save you a trip to the store.”

Threading the Needle

When working on lighthouse experiences or any Facebook-branded AR product, Kirkpatrick also has firmly held quality standards and design principles. This primarily includes building for native utility and repeat/active use as opposed to novelty. His team has specific protocols to achieve this.

“It’s about thinking ‘Is this something that someone will come back to, or is it kind of a splashy thing?’” he said during the on-stage interview. “Then we do a ton of testing… internal testing… user research. At the end of the day, the test for us is if people are coming back to use it.”

In the early days, these success factors are mostly unknown and it’s a game of feeling out what resonates with users. But across the industry, shared best practices are emerging, many based on the physical realities of mobile AR. One example is designing around the limitation of short sessions.

“There are a couple of things that are pretty different about a handheld device,” said Kirkpatrick. “Notions like going up and seeing an augmented street sign, isn’t really going to work because nobody’s going to walk around with their hand out like this [gesturing] all day long.”

There’s also the concept of “activation energy” which is the friction to launch an AR experience. For example, app downloads (as opposed to web AR) are an adoption barrier for this reason. In short, the appeal or utility of the AR experience has to outweigh the activation energy.

“[It’s] use cases that are so valuable and promising that they’re worth overcoming the friction of taking your phone out of your pocket and getting into an app,” he said. “There are quite thin needles we need to thread in terms of finding cases that actually work like that on handhelds.”

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.