2016 visions of near-term AR glasses are taking longer to materialize — mostly due to technical and cultural realities. Cultural factors are real but technical issues may loom larger due to limitations in converging digital and optical technologies.

As background, digital technologies — such as silicon chip-based computing and displays — are advantaged by the forward march of Moore’s Law. This defines the shrinking size and cost of chip components — the reason your flat screen TV is cheaper now than when you bought it last year.

But optical technologies like lenses follow different laws. They’re bound by physics, such as the way light moves. This is the reason my Canon 70-200mm lens isn’t cheaper now than when I bought it three years ago. But the 6D camera body — powered by digital tech — definitely is.

This is one of many reasons AR glasses are challenged, particularly waveguide or photonic lightfield systems in headsets like Hololens 2 and Magic Leap One. They render images on glass (or into your eye) after the light is reflected or “guided” by a system of tiny stationary and oscillating mirrors.*

Physical Challenge

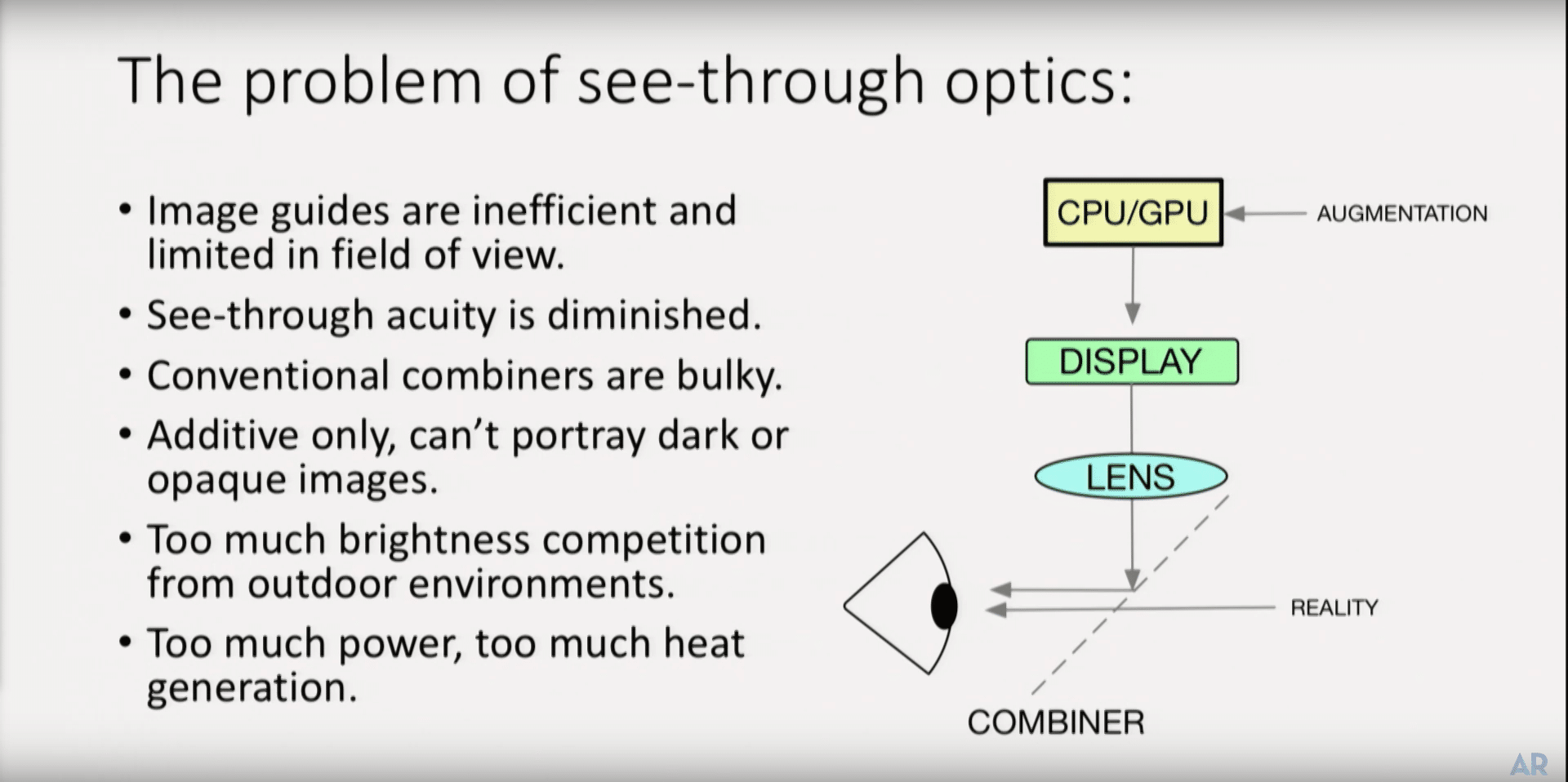

Waveguides could therefore be perpetually limited by the way light can be projected over the real world. For example, there are well-known detriments with brightness in daylight. Light signals also can’t render the color black (absence of light) so they can’t get the “true blacks” that your TV does.

“I can tell you this is a hard problem,” said AR optics guru Mark Spitzer at ARiA. “If you use waveguides, like with Hololens, they’re inefficient. It needs a lot of power, and it has a limited field of view. When looking through the combiner, acuity of the real world is somewhat diminished.”

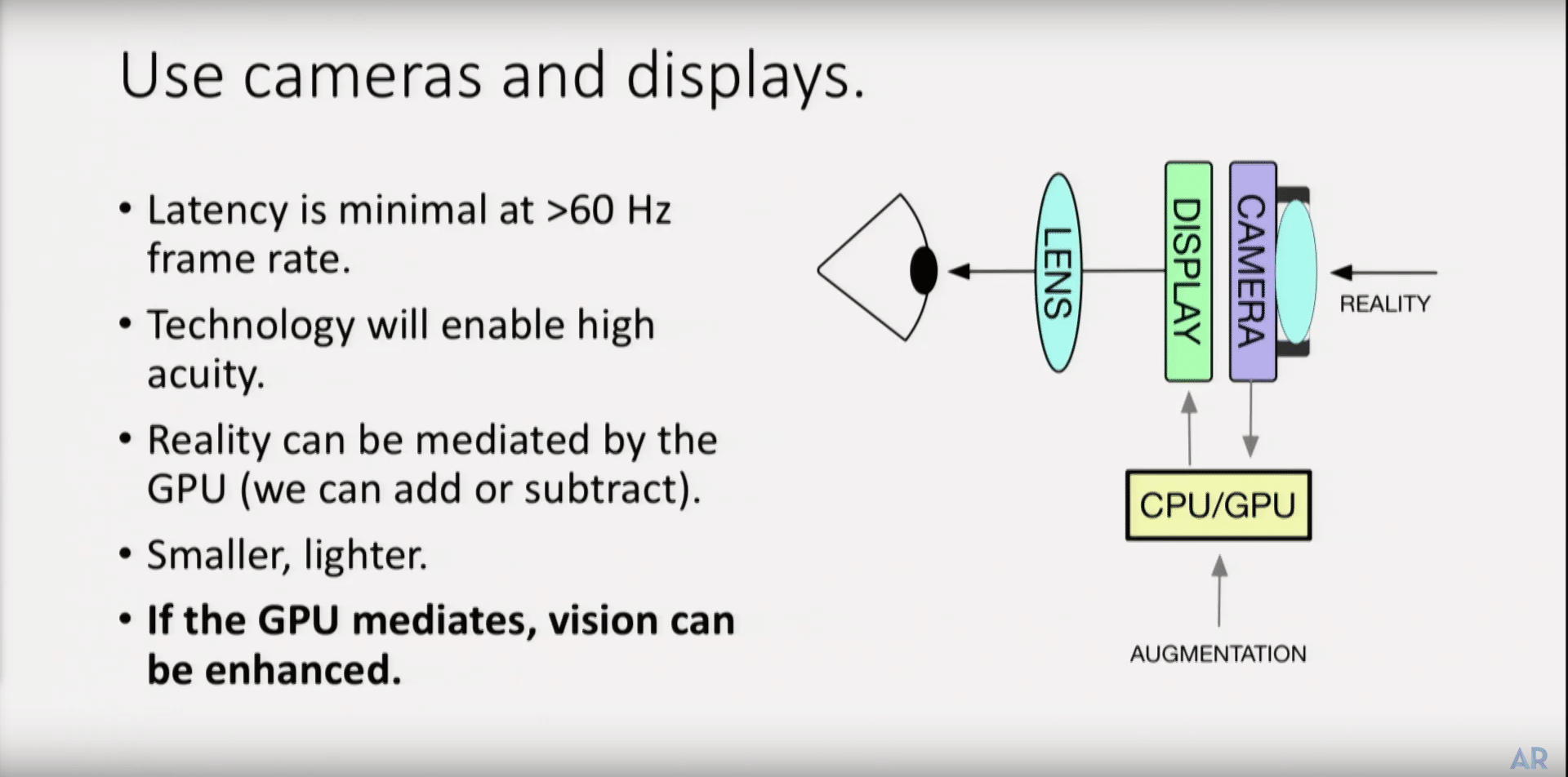

So the question is whether digital displays could eventually leapfrog optical waveguide systems in AR glasses. In other words, rather than light projected on glass in front of your eye, could digital displays in front of your eye start to gain an advantage? Again, this comes back to Moore’s Law.

“For 28 years, the displays were never good enough. That’s coming to an end. And when you have that, you can solve some interesting problems like see-through optics,” said Spitzer, who formerly ran GoogleX. “What this means is an end to displays limiting visual performance.”

Image Source: Mark Spitzer, ARiA, YouTube

Pixel First

This principle is already playing out in VR headsets that have passthrough cameras to bring in the real world. This is sort of the opposite of AR — instead of projecting graphics on the real world, the latter is brought in via passthrough cameras and added to a pixel-dominant experience.

We got the latest example of this principle at AWE, when Varjo launched its XR-1 headset. More towards the VR end of the spectrum, it’s a fully immersive and pixel-dominant format that pipes in low-latency video from the device’s front-mounted cameras for a blended experience.

Oculus Chief Scientist Michael Abrash is also big on video passthrough due to greater UX control. In outlining projections of AR and VR convergence, he believes that the best AR (what he refers to as MR) will happen in VR headsets — via passthrough video — before it happens in AR glasses.

“Mixed reality in VR is inherently more powerful than AR because there’s full control of every pixel rather than additive blending,” he said at OC5. “The truth is that VR is not only where mixed reality will first be genuinely useful, it will also be the best mixed reality for a long time.”
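To make the “additive blending” point concrete, here is a minimal sketch of the difference for a single pixel. It is a simplified model rather than any vendor’s pipeline: the function names, the linear 0-to-1 RGB values and the `alpha` parameter are all assumptions chosen for illustration.

```python
def additive_blend(real, virtual):
    """Optical see-through (simplified): display light is added to scene light.

    The display can only add light, so the result is never darker than the scene.
    """
    return tuple(min(1.0, r + v) for r, v in zip(real, virtual))


def opaque_composite(real, virtual, alpha):
    """Video passthrough (simplified): each output pixel is a chosen mix.

    alpha = 1.0 fully replaces the camera pixel with the virtual one.
    """
    return tuple((1.0 - alpha) * r + alpha * v for r, v in zip(real, virtual))


# Hypothetical values: a bright wall seen through the device, and a black virtual pixel.
bright_wall = (0.8, 0.8, 0.8)
black_pixel = (0.0, 0.0, 0.0)

see_through = additive_blend(bright_wall, black_pixel)
passthrough = opaque_composite(bright_wall, black_pixel, alpha=1.0)

print(see_through)  # the wall is unchanged, so the "black" pixel is invisible
print(passthrough)  # the pixel is actually black
```

In this toy model, the see-through result equals the wall color, which is why additive displays struggle with true blacks, while the passthrough result can be fully opaque.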

But here lies a key challenge for passthrough video as a core long-term AR modality: it requires a VR-like headset. This makes video displays sub-optimal for everyday consumer use due to bulky headsets, not to mention near-eye focal dynamics and eye strain from illuminated pixels.

The Bright Side

On the bright side (excuse the pun) near-eye AR digital displays would have relatively low processing and resolution requirements. That’s because a smaller display area combined with foveated rendering enables an inherently smaller and more-efficient data processing payload.

“The fovea is only about two degrees, so in pixels that’s 120×120,” said Spitzer. “So data rates for just serving the fovea are pretty low… and the optic nerve is only a 10-megabyte-per-second channel so displays don’t have to be fed more than [that] — low data rates by today’s standards.”
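As a rough back-of-the-envelope check on those figures, the arithmetic can be sketched as follows. The 2 degrees, 120×120 pixels and 10 megabytes per second come from the quote; the 24-bit color depth and 60 frames per second are assumptions added here for illustration, not numbers Spitzer gave.

```python
# Figures taken from the quote above.
FOVEA_DEGREES = 2
FOVEA_SIDE_PIXELS = 120
OPTIC_NERVE_BYTES_PER_SECOND = 10_000_000  # "10-megabyte-per-second channel"

# Assumptions for illustration only (not from the source).
BYTES_PER_PIXEL = 3      # 24-bit RGB
FRAMES_PER_SECOND = 60


def foveal_data_rate(side_pixels, bytes_per_pixel, fps):
    """Uncompressed bytes per second needed to feed a square foveal region."""
    return side_pixels * side_pixels * bytes_per_pixel * fps


# Angular resolution implied by 120 pixels across 2 degrees.
pixels_per_degree = FOVEA_SIDE_PIXELS / FOVEA_DEGREES

rate = foveal_data_rate(FOVEA_SIDE_PIXELS, BYTES_PER_PIXEL, FRAMES_PER_SECOND)

print(pixels_per_degree)                     # pixels per degree of visual angle
print(rate)                                  # bytes per second for the fovea alone
print(rate < OPTIC_NERVE_BYTES_PER_SECOND)   # whether it fits under the quoted ceiling
```

Under these assumptions the foveal region works out to 60 pixels per degree and 2,592,000 bytes per second, or roughly 2.6 MB/s, which sits comfortably under the quoted 10 MB/s figure.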

Moreover, Spitzer reminds us that eye tracking can be applied in order to digitally control things like focus, using natural fovea movements. Taking that a step further, AR glasses with video-passthrough could enhance vision through things like telephoto zoom or corrective features.

“Since you’re using the GPU and system-on-a-chip to mediate, you can enhance vision,” said Spitzer. “There’s a long list of things that can be done to give you better than human vision… That itself is a compelling AR use case and we’re going to be able to do it in the next few years.”

Innovation Timelines

Another place video passthrough is already happening is mobile AR. In other words, you don’t see the real world… you see a passthrough image from the camera that’s rendered by pixels on your screen. There’s no see-through glass, nor light projected on it… it’s all pixels.

Projecting forward, other evidence comes from Cupertino. Following the money, as we like to do, acquisitions in Apple’s AR buying spree indicate that it’s betting on passthrough. That includes VRVana, a Montreal startup that has VR/AR hybrid glasses built around video-passthrough.

But one question, besides the technological and practical advantages of each technology, is their respective innovation timelines. Will video passthrough represent the longer-term approach that leapfrogs AR optics, or is it a near-term stopgap as with mobile AR and VR headsets like XR-1?

“Don’t sleep on passthrough as a potential stopgap because it’s really good,” said Tested’s Jeremy Williams (video above). “It fills your field of view, and gives you opaque imagery… massive benefits. Yes, you have to have a big old brick on your face but none of this is lightweight yet.”

Software is Eating AR

Another lesson from smartphones is how software overcomes optical limitations in photography. You can’t increase focal length without phone bulk, so Apple’s Portrait Mode renders depth of field and background blur using software and two cameras to digitally simulate the focal length of one.

Google’s Pixel 3 has an even better hack. With just one camera, it utilizes the tiny movements of your hand while taking a picture to scan from several points, just millimeters apart. Those points simulate a stereoscopic view, which lets the phone estimate depth and enables photographic effects.

The lesson from these moves is the interplay of digital and optical technologies. Clever hacks with digital technology can step in to fill the gaps caused by natural limitations in optical technologies. And that lesson could be broadly supportive of video passthrough for future AR glasses.

Though Moore’s Law is approaching physical limits, it will take a few cycles to reach compact and stylistically-viable digital displays. Then multiply components by two, given outward-facing displays to show one’s eyes for social interaction. But the picture is starting to materialize.

“Because cameras present to your eyes in the right format, your eyes and brain can absorb them and you can use a digital processor,” said Kopin CEO John Fan at ARiA. “Optical see-through is OK for information snacking. Once you get more complex data, video see-through [will] win.”

* A more thorough and technical guide to waveguides, holographic combiners and laser displays is provided here.

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.

Header image credit: Varjo