XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, as well as narrative analysis and top takeaways. Speakers’ opinions are their own.

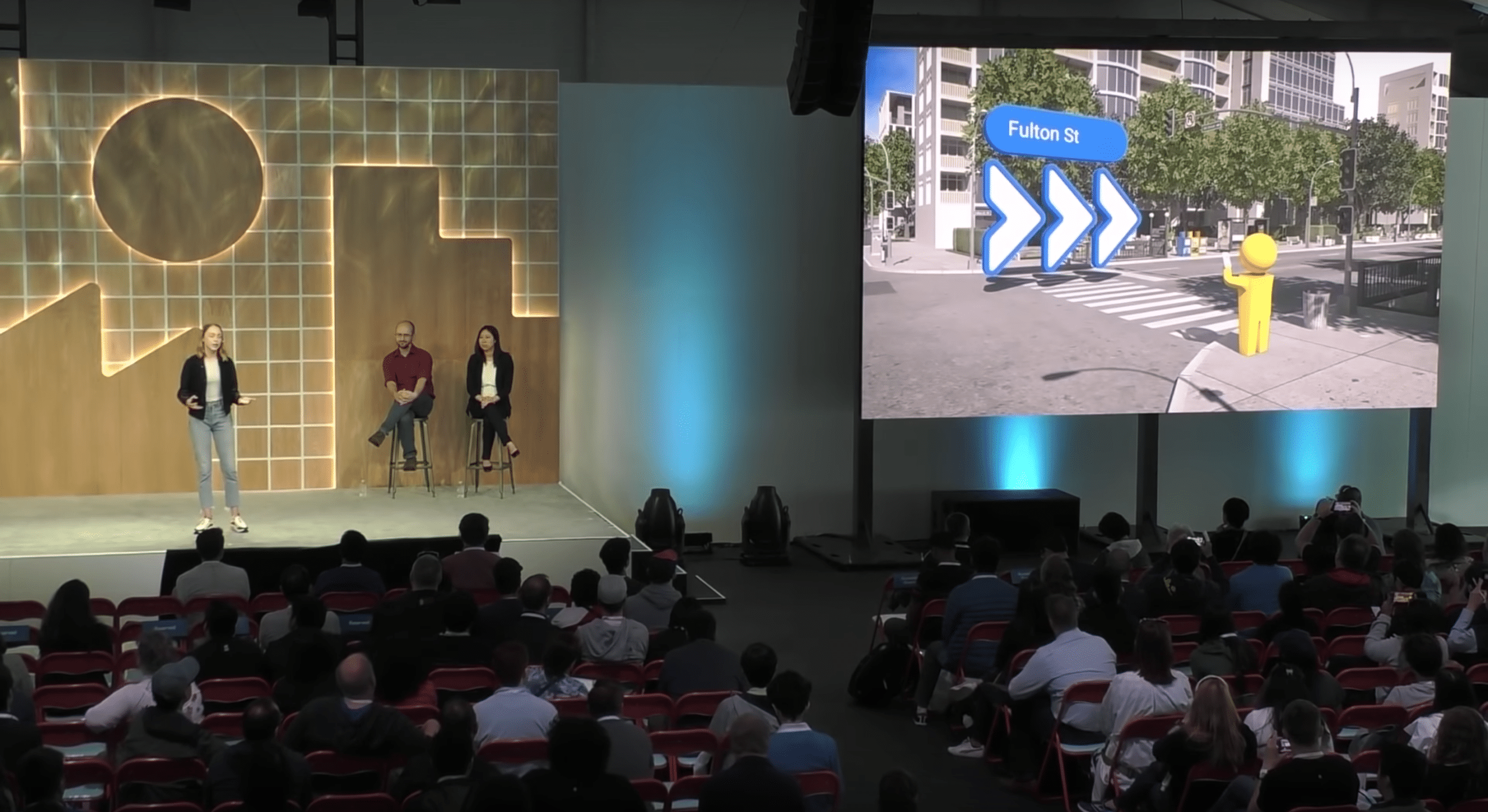

One of the most anticipated and potentially-useful forms of AR has been Google’s VPS walking directions. But its lesser-known backstory likewise offers value in the form of lessons for AR interaction design. Google broke this down as a sort of PSA at its I/O event (video below).

“Our vision-based approach works similar to how humans orient and navigate — by recognizing familiar features and landmarks,” said Google’s Joanna Kim. “Solving this has been one of the most technically-challenging projects that I’ve been a part of during my eight years at Google.”

As background, most AR works by first localizing, which means calculating where the device is in space. It can only map its environment, overlay graphics and track them if it knows where it is in relation to its surroundings. In mobile AR, this happens through a combination of GPS and an inertial measurement unit (IMU).

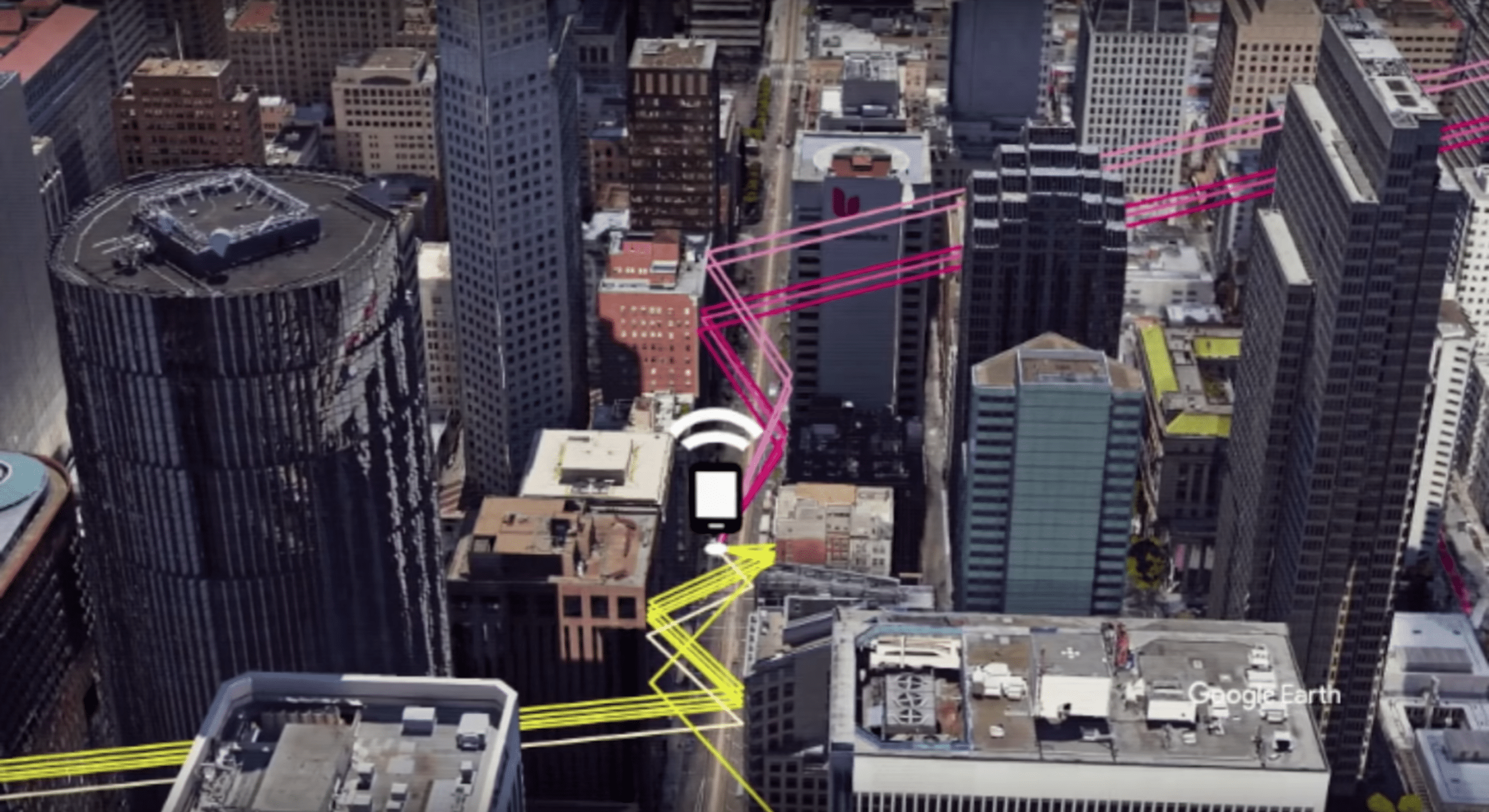

But both can have issues. You’ve probably noticed that the blue dot in Google Maps isn’t always on target. In urban areas, for example, satellite signals bounce off buildings, which lengthens the signal’s path and travel time between the satellite and the device and causes imprecise location calculation.

“When calculating distances to satellites, it requires estimating how much time has passed since the signal left the satellite,” said Google’s Jeremy Pack. “But when you’re talking about signals moving at the speed of light, even a nanosecond of error results in miscalculating by a foot.”
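Pack’s nanosecond-to-foot figure can be checked with simple arithmetic. The snippet below is only a back-of-envelope sketch: the function and variable names are ours for illustration, and it ignores everything a real GPS receiver does beyond multiplying a timing error by the speed of light.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre
METERS_PER_FOOT = 0.3048              # exact, by definition of the foot


def range_error_m(timing_error_s: float) -> float:
    """Distance error (metres) caused by an error in measured signal travel time."""
    return SPEED_OF_LIGHT_M_PER_S * timing_error_s


one_ns_error_m = range_error_m(1e-9)
one_ns_error_ft = one_ns_error_m / METERS_PER_FOOT
print(f"1 ns of timing error -> {one_ns_error_m:.3f} m ({one_ns_error_ft:.2f} ft)")
# prints: 1 ns of timing error -> 0.300 m (0.98 ft)
```

So one nanosecond works out to just under a foot, which matches the quote.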

This is an issue when that blue dot in Google Maps is a city block away from where it should be. But it’s an even bigger issue for AR because informational overlays need millimeter-level precision, especially if they’re telling you where to walk in high-traffic areas. That’s where the IMU kicks in.

The IMU includes an accelerometer, magnetometer (compass) and gyroscope. Together they measure acceleration, heading and rotation, and those readings are integrated over time to estimate the device’s movement and orientation. This is known as inertial odometry. But here too, small errors can occur, then amplify as movement continues.

“So this type of inertial odometry that’s tracking the movement of something through space using inertial sensors needs additional signals to keep it anchored,” said Pack. “[That’s] to keep it working well over time, and to keep accurately estimating your position.”
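To see why small inertial errors amplify, consider a toy case: a phone that is actually standing still, with an accelerometer that reads slightly off. This is a minimal sketch under stated assumptions, not how any real device works. The bias value of 0.05 m/s² and the function name are hypothetical, and the integration is a plain fixed-step sum.

```python
def drift_from_bias(bias_m_s2: float, duration_s: float, dt: float = 0.01) -> float:
    """Position error (metres) after integrating a constant accelerometer bias twice.

    The device is assumed stationary, so any computed movement is pure error.
    """
    velocity = 0.0
    position = 0.0
    for _ in range(round(duration_s / dt)):
        velocity += bias_m_s2 * dt   # first integration: acceleration -> velocity
        position += velocity * dt    # second integration: velocity -> position
    return position


# Hypothetical bias of 0.05 m/s^2, sampled at 100 Hz
for seconds in (1, 10, 60):
    print(f"after {seconds:>2} s: {drift_from_bias(0.05, seconds):.2f} m of drift")
```

Because acceleration is integrated twice, the error grows roughly with the square of elapsed time (about 0.5 × bias × t²). A tenfold longer walk yields about a hundredfold more drift, which is why the additional anchoring signals Pack mentions are needed.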

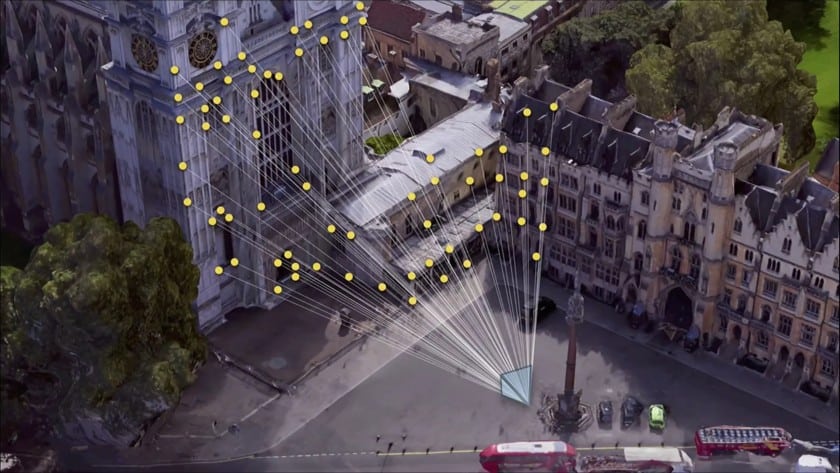

Here Google figured out a clever hack, which is to supplement these two sources of localization with another sensor: the camera. As Google first pioneered years ago with Tango, the camera can further localize AR devices by recognizing their position visually and correcting IMU drift.

“We can use the camera in a smartphone to detect visual features in the scene around us,” said Pack. “Think: corners of chairs, text on the wall, texture on the floor… and use those to anchor these inertial calculations. Then we can correct for the errors in the inertial sensors over time.”
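The anchoring idea Pack describes can be sketched in one dimension. The example below is an illustrative simplification and not Google’s implementation: the `fuse` function, the `gain` parameter and the sample numbers are all assumptions. It dead-reckons from inertial steps and, whenever a camera-based position fix is available, pulls the estimate most of the way toward it.

```python
def fuse(inertial_steps, visual_fixes, gain=0.8):
    """Dead-reckon along one axis and correct toward visual fixes when they arrive.

    inertial_steps: per-step displacement estimates in metres (may be biased).
    visual_fixes:   dict mapping step index -> absolute position from the camera.
    gain:           fraction of the gap to the visual fix closed on each correction.
    """
    position = 0.0
    track = []
    for i, step in enumerate(inertial_steps):
        position += step                                     # inertial prediction
        if i in visual_fixes:
            position += gain * (visual_fixes[i] - position)  # visual correction
        track.append(position)
    return track


# Hypothetical walk: each true step is 1.0 m, but the IMU over-reports by 10%.
steps = [1.1] * 10
raw = fuse(steps, visual_fixes={})                    # inertial only
fused = fuse(steps, visual_fixes={4: 5.0, 9: 10.0})   # camera fix every 5th step
print(f"inertial only: {raw[-1]:.2f} m, with visual anchors: {fused[-1]:.2f} m")
# prints: inertial only: 11.00 m, with visual anchors: 10.12 m
```

The true end position is 10 m. Without anchors the estimate is a full metre off and keeps getting worse, while two visual corrections hold the error to about 12 cm.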

And that brings us back to mapping and navigation. Google already has a robust image database with which to perform this real-world visual positioning: Street View. This visual database is well-aligned with VPS’ intended use due to its geographic coverage of the areas where one might walk.

“We could actually precompute a map using imagery that we’ve collected around the world [from] Street View,” said Pack. “We could use an image from that same camera… match it against the Street View visual features… and precisely position and orient that device in the real world.”
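The matching step in that quote can be illustrated with a toy example. Everything here is an assumption for illustration: the three-number descriptors, the `match_features` helper and the nearest-neighbor ratio test are a common computer-vision pattern swapped in by us, not a description of Google’s pipeline. A real system would also go on to compute the device’s pose from the matched features, which is omitted.

```python
import math


def match_features(query, map_features, max_ratio=0.8):
    """Return (query_index, map_index) pairs that pass a nearest-neighbor ratio test.

    A query descriptor is matched only if its closest map descriptor is clearly
    closer than the runner-up, which filters out ambiguous matches.
    Requires at least two map descriptors.
    """
    matches = []
    for qi, q in enumerate(query):
        ranked = sorted((math.dist(q, m), mi) for mi, m in enumerate(map_features))
        (best, best_index), (second, _) = ranked[0], ranked[1]
        if best < max_ratio * second:
            matches.append((qi, best_index))
    return matches


# Hypothetical precomputed map descriptors (e.g., from Street View imagery)
map_descriptors = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
# Hypothetical descriptors from the phone camera: one clear, one ambiguous
query_descriptors = [(0.9, 0.1, 0.0), (0.5, 0.5, 0.0)]

matches = match_features(query_descriptors, map_descriptors)
print(matches)
# prints: [(0, 1)]
```

The first camera feature lines up with map feature 1, while the second sits equally close to two map features and is discarded rather than guessed at.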

Add it all up and that’s how VPS works. But that only covers the underlying technology and localization dynamics. The other half of the battle is a front-end interface that’s optimized and human-readable. And the challenge there is that it’s uncharted territory with no prior playbook.

“They’re all things that we had to learn the hard way, so we hope that you enjoy them, because we had to fail a lot of times to actually get to this,” said Kim. We’ll be back in part II of this series to examine that second half of the battle: prototyping and iterating the AR interaction design.

See the full session video below and stay tuned for next week’s deep dive on the second half.


Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor has it received payment for its production. Disclosure and ethics policy can be seen here.

Header image credit: Google