“Wearables Wars” is AR Insider’s mini-series that examines how today’s wearables will pave the way and prime consumer markets for AR glasses. Each installment will profile a different tech leader’s moves and motivations in wearables. For more, subscribe to ARtillery PRO.

Conventional wisdom holds that mobile AR is the forebear to smart glasses. Before the latter achieve consumer-friendly specs and price points, AR’s delivery system is the device we have in our pockets. There, it can seed user demand for AR and get developers to start thinking spatially.

That’s still the case, but a less-discussed product class could have a greater impact in priming consumer markets for AR glasses: wearables. As we’ve examined, AR glasses’ cultural barriers could be lessened to some degree by conditioning consumers to sensors on their bodies.

Tech giants show signs of recognizing this, and are developing various flavors of wearables. As in our ongoing “follow the money” exercise, they’re each building wearables strategies that support or future-proof core businesses where tens of billions in annual revenues are at stake.

Earlier in this series, we examined Google’s ambitions to create more direct user touchpoints (literally) that drive revenue-generating search by voice, visual and text. The story is similar for Amazon, Microsoft and Bose (kind of), and the 800-pound gorilla in wearables, Apple.

Fully Actualized

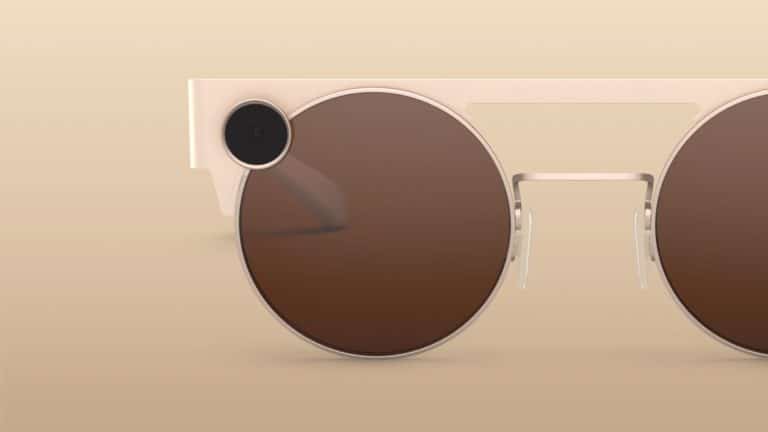

To continue the narrative, what about Snap? Its Spectacles have had their ups and downs. But the understated genius of the product might be more about conducting a market experiment than distributing a product whose success is measured on traditional metrics like units sold.

In other words, though Spectacles aren’t AR glasses, Snap is gaining insights about consumer demand signals and proclivities for what AR glasses should someday look like. This is a nascent area where no one has answers, so it’s investing to gain product intelligence for AR’s next era.

Snapchat is already primed for that era in other ways, such as developing the market’s leading playbook for AR lens interactions. But it knows it needs to marry that software competency with the hardware that will eventually represent AR’s fully-actualized “heads-up” modality.

Snap’s Carolina Arguelles revealed as much at last year’s AWE Europe:

“We believe that in order to envision this future of computing overlaid on the world, you really need to take the screen away that’s cutting you off from the actual physical world, which the mobile phone does… Our investment in Spectacles is because we want to test, iterate and understand what it means to interact with cameras when they’re on your face. We want to know what good content is… How people interact with it… What they like… What should the UX be? … What should the creative experiences be? … And ultimately how can we start to build out a content repository? … AR is just starting to be introduced into this product and is eventually something that you’ll see more and more of.”

Sacred Territory

The other main goal for Spectacles, true to the premise of this series, is to acclimate the world to technology that you wear on your face. Because this is sacred territory and others have failed (à la “glassholes”), it will be all about thoughtful and gradual approaches to ease consumers in.

“That’s the secret strategy or the Trojan horse,” said Ubiquity6 CEO Anjney Midha. “How do you get enough sensors in people’s hands at a cheap price or on their face? That sets [Snap] up for very immersive AR experiences or any kind of VR experiences a year or two years from now.”

As we’ve examined, Apple is conditioning users to wear sensors on their bodies to warm the world up for its looming smart glasses play. Snap is doing something similar so that its eventual AR glasses hit the ground running. But its angle differs from Apple’s signature top-secret R&D approach, says Snap CEO Evan Spiegel.

“Spectacles represent a long-term investment in augmented reality hardware. So I think it’ll be roughly ten years before there’s a consumer product with a display that could be really widely adopted. But in the meantime, we’ve built a relationship with our community and all these people who love building [AR] experiences and we’re sort of working our way towards that future, rather than go in a hole or in an R&D center, and try to make something that people like, then show them ten years later. We’ve sort of created a relationship with our community where we build that future together. So I think what’s really cool about Spectacles 3, for the first time we have depth capability and if you’re using Lens Studio, which are our tools for building augmented reality experiences, you can build AR directly for that Spectacles content in 3D using our depth technology. So that’s sort of an iterative step towards this AR future.”