The biggest names in tech are locked in a battle to define, shape, and equip the future digital landscape. Meta (formerly Facebook) wants to call it the Metaverse. NVIDIA envisions an Omniverse. Google is currently calling it Lens. Samsung, Apple, Microsoft, Amazon, Adobe, Snap, Huawei, and others have all touted the importance and promise of this next computing paradigm.

These titans all know that the next shift in computing is coming fast, and none of them wants to be the “old technology company” that missed it, as IBM did with the PC, Motorola with the switch to digital mobile phones, and BlackBerry, Palm, and Nokia with the switch to smartphones. Yet they all face the same problem with this new mobile platform: finding optics that let head-worn devices look natural and stylish while still delivering the required level of performance.

To the casual observer, today’s battle for the next computing platform is mainly about a virtual reality space, or a network of 3D digital spaces, in which users interact from a first-person perspective with some type of computer-generated environment. Star Trek’s holodeck would be the science fiction example of this immersive experience, as would the world of Steven Spielberg’s “Ready Player One.”

But we at DigiLens believe there’s a much larger near-term opportunity, one that for the last decade has been held back by the inferior optics available on the market. As we start 2022, that is changing thanks to the breakthrough performance of DigiLens’ new waveguide technology, which allows everyday people to interact naturally in a socially acceptable, organic way that improves everyday life.

Extended Reality Devices Are The Next Major Digital Platform

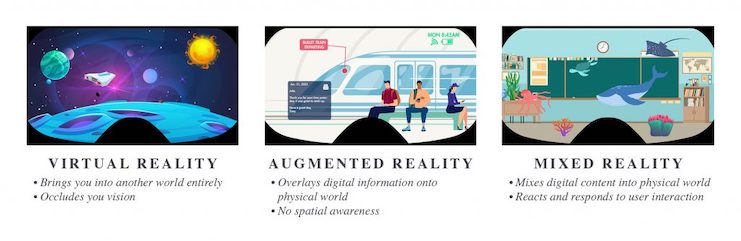

We’re headed toward an Extended Reality (XR) environment that comprises the full spectrum of Augmented Reality (AR), Mixed Reality (MR), and Virtual Reality (VR). It will be a future somewhere between a fully immersive digital world and an on-demand digital experience that’s active only when the user wants it to be. We’re building a brand-new digital platform that will allow users across several horizontal markets to visualize their information in ways that increase productivity, optimize learning, and ultimately improve many other daily activities.

Imagining the future is the easy part. Building it is much more difficult. At DigiLens, we see the two primary challenges as optics and social acceptability. The technology has to work (i.e., with the right resolution, field of view, etc.), and people must feel comfortable wearing the glasses. You can’t achieve one without the other; it’s like asking which blade on a pair of scissors is more important. We’ve made tremendous strides in optics, as discussed most recently in our CES 2022 press release, and I’ll describe our advances in social acceptability in greater detail below. But first, it’s important to understand where we think the technology is headed and why.

As shown in the diagram above, XR breaks down into three categories: VR, AR, and MR. The first, and currently the most visible, is VR. Meta, Sony, and HTC have been rolling out VR hardware. In VR, the user puts a bulky headset over their head and eyes, and the reality they see is completely recreated. Users interact, from a first-person perspective, with an entirely computer-generated environment and with representations of other users, such as digital avatars.

The big question now facing tech companies, though, is whether consumers broadly want this. More specifically, will non-techie users put these headsets on and agree to sit in their living rooms, cut off from everything but the computer-generated world? I suspect older people, less tech-savvy people, and even many mainstream users will not.

Instead, I believe we’re headed toward an XR environment built on AR and MR, where technology augments the real world where and when the user wants it to. That is a very different experience from being represented by a digital avatar in a made-up world, where you can choose to look like yourself or something completely different because no one will ever see you, or the large, bulky VR headset you’re wearing, in person.

In a World Where AR/MR Dominate

At DigiLens, we want to help tech evolve in a direction that enables people to thrive, connect, and communicate with the people and world around them. AR/MR glasses add data and images to a real-world environment as the user experiences it, enhancing the way users see their world by overlaying digital information on top of it and in its context. AR will do this in a more limited way, with simple overlays such as the names of people the user meets, item prices, maps, a zoom function, and more.

MR will eventually do this in a more immersive way, letting users define how they see the world by occluding certain things and inserting other items into it. This seems far more valuable than a purely virtual environment, because people will be able to interact in a more human way with others and with their surroundings. Consider the following examples:

A doctor in the developing world can link up with a specialist across the globe to give a child life-saving medical care. The specialist can guide the doctor in real time through an XR overlay, much as auto companies already use XR technology to direct mechanics. XR offers telemedicine without limits while keeping the vitally important human connection of real doctors treating real patients.

An HVAC tech in Bangalore, India can link up with an expert in Buffalo, New York with XR technology shared between them.

DIYers across the planet can work faster and more efficiently by getting guidance on their smart glasses instead of having to pick up their phones. Like fighter pilots reading real-time data on a heads-up display, a DIY woodworker would be able to see angles overlaid right onto the board they are about to cut.

Teachers can use XR devices for more immersive and interactive hands-free instruction.

Gamers can organize and play multiplayer games in real-world settings that go beyond simply tapping on screens, exploring the world while customizing their appearances to reflect their unique personalities.

The possibilities will be endless as enterprise users, and then consumers, get their hands on this technology and explore its capabilities. Still, for this to succeed, hardware manufacturers must recognize that what a person looks like matters. You are physically there in person, and the device you wear to deliver the experience will define how you look and present yourself to others.

Devices need to integrate naturally with your appearance so you can still communicate effectively. So much of in-person communication is non-verbal: raising eyebrows, seeing where someone is looking, noticing whether their pupils are dilated, and so on. Head-worn devices need to be lightweight, stylish, and what we call “socially acceptable.”

Social Acceptability and Human Connection Will be Major Factors

How you look in the real world matters a lot. There are huge industries built around making people look their best: pharma, plastic surgery, clothing, eyewear, and so on. Social acceptability of new technology (beyond the initial cool factor) is also linked to an even more important factor: human connection. It’s hard to interact meaningfully with another person if the technology they are using puts up a barrier to natural, in-person communication.

This is where DigiLens excels. Creating technology that “just works” takes an enormous amount of time, focus, and engineering excellence, and I’m proud of what our team has accomplished. We’ve solved several of the biggest challenges on the hardware side of the XR smartglasses equation: highly efficient optics that deliver a socially acceptable glasses experience at a consumer price point. The goal is to make the tech fit into our lives without making people stick out.

Our waveguide lens technology stands out in the areas that are essential to building socially acceptable smartglasses:

- 4x less eye glow than the closest competitor – Eye glow is a common side effect of diffractive waveguides. It occurs when light is projected away from the user’s eye and becomes visible to the outside world. High eye glow can distract others and make the user’s eyes harder to see, and it is typically a sign of lower waveguide efficiency.

- Low glint from outside light sources – The gratings within waveguides that bend the light can also catch light from external sources and create “glints” in the form of rainbows or other distracting reflections that detract from the user experience. DigiLens minimizes these artifacts with a flexible design platform that lets manufacturers position the gratings in a way that reduces glint.

- High transparency with a value of > 85% – Transparency is the amount of light that can pass through a waveguide lens, allowing users to see the real world and allowing others to see the user’s eyes. DigiLens’ waveguides start at 85% transparency and go up from there, whereas competing waveguides fall below 85%. This gives a natural eyeglasses look rather than a sunglasses look.

- High efficiency – Efficiency measures how effectively a waveguide sends light from the projection source to the wearer’s eyes. The higher the efficiency, the more valuable the waveguide, because it enables manufacturers to build sleek, lightweight devices with smaller batteries, fewer thermal issues, and smaller projectors. This is one of the most important metrics because of its effect on the overall form factor, and here DigiLens is by far the market leader in diffractive optics.

Good-Looking Tech That Achieves an MVP Level of Performance Has Arrived

So whether you call this next computing category a Metaverse, an Omniverse, or something else, the message I want to leave you with is this: for the next platform to take hold, the technology must fit into our lives without making people stick out like cyborgs as they move organically through the real world.

It must enhance people’s lives rather than leave people more cut off and isolated, as social networks have, paradoxically and unfortunately, done. I’m pushing the team here to build waveguides and head-worn devices that fit into what people are already doing and help them do it smarter, better, more efficiently, and with greater collaboration.

Head-worn technology has a long history, and we are working to perfect it. Just as the first eyeglasses with corrective lenses helped users see the world as it is and operate more freely and effectively, we’re helping users experience reality, not escape it, and connect with the people around them. We want to help early adopters do what they are already doing, with the advantages of the next evolutionary step in display technology.

Editor’s note: since the publication of this article, DigiLens announced the completion of its Series D funding for $50 million, at a post-money valuation of $500 million.

Chris Pickett is CEO of DigiLens. A version of this post originally appeared on the DigiLens Blog, published here with permission.