Having examined Google’s visual search efforts in earlier parts of this series, we now ask how Snap compares. Visual search is a subset of AR – the broader category where Snap maintains a lead. That lead rests mostly on AR lenses, but increasingly on its visual search play: Snap Scan.

Starting with lenses, 75 percent of Snap users engage with them, as they’re naturally aligned with existing behavior – sharing multimedia. Lenses enhance and adorn that familiar use case (a product lesson in itself), which is why they’ve caught on so well in the Snapchat universe.

But though social lenses have landed well, Snap recognizes that consumer tech products must evolve as they mature. Specifically, the fun and whimsy that gave Snap lenses early traction need to expand into the next life stage: utility. Snap calls this an evolution from toy to tool.

To be fair, fun and whimsy will continue to be part of Snap’s product formula, but it’s increasingly integrating more utilitarian AR formats. Those include shopping lenses such as product try-ons. And as part of this evolution, it’s intent on visual search, which brings us back to Snap Scan.

In fact, Snap Scan aligns with another key trend in Snap’s road map: the transition from front-facing camera lenses (selfies) to rear-facing AR that augments the broader canvas of the physical world. Snap Scan is a key part of that formula… and it’s already used by 170 million users.

Product Persona

Stepping back, what is Snap Scan? It’s similar to Google Lens in a broad sense: point your smartphone camera at objects to identify them. But as we asserted earlier in this report, visual search players’ approaches trace back to their core product personas and company missions.

That statement applies here, as Snap Scan specializes in a few use cases that are endemic to Snap’s persona. For example, rather than “all the world’s information” (Google Lens’ approach), Snap Scan is more about fashion and food. These are aligned with Snap’s ethos and its users.

This focus on a narrower set of high-value use cases is also smart from a practicality and execution standpoint. In other words, Snap doesn’t have Google’s knowledge graph, including the vast image databases that it utilizes for AI training sets to refine visual search capabilities.

Within its chosen areas of visual search, Snap instead partners with best-of-breed data providers. A partnership with Allrecipes helps Snap Scan recognize items in your fridge and suggest fitting recipes. Photomath similarly helps Scan solve math problems written on a physical page.

But in fairness, Snap also invests in its own machine learning. For example, it announced in Fall 2021 that Snap Scan recognizes more real-world objects and products – everything from pets to flowers to clothes and packaged goods. So its approach combines internal data and partners.

Outfit Inspiration

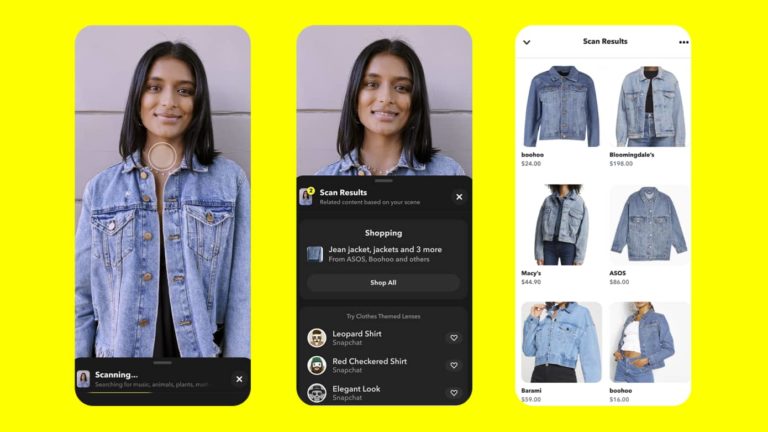

One of Snap Scan’s most recent visual search developments gets back to the fashion discovery use cases noted earlier. When users scan style items they see, its Screenshop feature surfaces product information and purchase details from retail partners (which also provide visual data).

Snap calls this “outfit inspiration.” It not only helps users find out more about fashion items encountered in the real world (or on magazine pages), but it’s also monetizable. That gets back to one of visual search’s key advantages: like web search, there’s a high degree of user intent.

This also brings back another concept introduced earlier in this report: shoppability. The world is increasingly shoppable. That mostly includes transactional buttons in social feeds, but it’s becoming such a user expectation that it could be valued in a physical-world context.

In that way, Snap Scan provides more surface area for shopping. Consumers will still actively go to websites for online and mobile shopping. But scanning fashion items in the real world or the pages of their favorite fashion magazine brings more shoppability to their fingertips.

Altogether, this represents a component of Snap’s business and its long-term road map. Indeed, visual search is a natural extension for tech companies that are built on ad revenue models. In fact, the two companies examined so far — Google and Snap — are exactly that.

Improve & Assimilate

Speaking of Snap’s road map, visual search is currently a less-discussed component of its AR mix – overshadowed by the more exposed and popular Lens format. But it’s a key puzzle piece in Snap’s continued expansion of “shoppable” content and, ultimately, AR ad revenue.

In fairness to lenses and their monetization potential, they currently generate the lion’s share of AR revenue for Snap. But as it continues to improve and assimilate, visual search could catch up to and surpass lenses as a formidable AR modality and visual shopping format.

Meanwhile, Snap Scan’s use cases continue to widen, which traces back to Snap’s rear-facing AR ambitions noted above. By evolving and investing in the underlying platform — while empowering partners and developers via APIs — Snap continues to offer visual search entry points.

Speaking of which, Snap is “incubating” visual search to accelerate traction. This includes planting Scan directly on the main camera screen for easier access, which should help to condition user habits. Because visual search is still new, it needs that nudge.

As Snap conditions that behavior, it also does so in service of visual search’s endgame: AR glasses. Snap Spectacles feature Scan to discover the world visually and naturally. This hands-free format, though still far from mainstream viability, is where visual search will hit its stride.

We’ll pause there and circle back in the next report excerpt with more insights and narratives around visual search.