XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, as well as narrative analysis and top takeaways. Speakers’ opinions are their own.

In all the excitement around our spatial future, relatively few people discuss nuanced realities like privacy and control of augmented spaces. What filters will be in place to activate AR layers, or deactivate them (think: ads)? As we asked recently, who owns your augmented reality?

“These are all new things around spatial computing that we haven’t had to think about before,” said Unity’s Timoni West at VRARA Summit (video below). “We’re going to have to tell computers things that we never normally articulate. How we talk to computers will have to be rethought.”

As Unity Labs’ lead (see our past interview), West is one of the “relatively few” referenced above. And in the process of rethinking the human-computer interface that we’ll need for a spatial computing era, she’s most interested in starting a dialogue rather than setting rules.

“We’re not coming up with the solutions. We want it to be a community discussion,” she said. “We need to have some sort of structure of how we think people are going to start developing apps and the types of permissions and states they’ll want. So this isn’t the end, it’s just the beginning.”

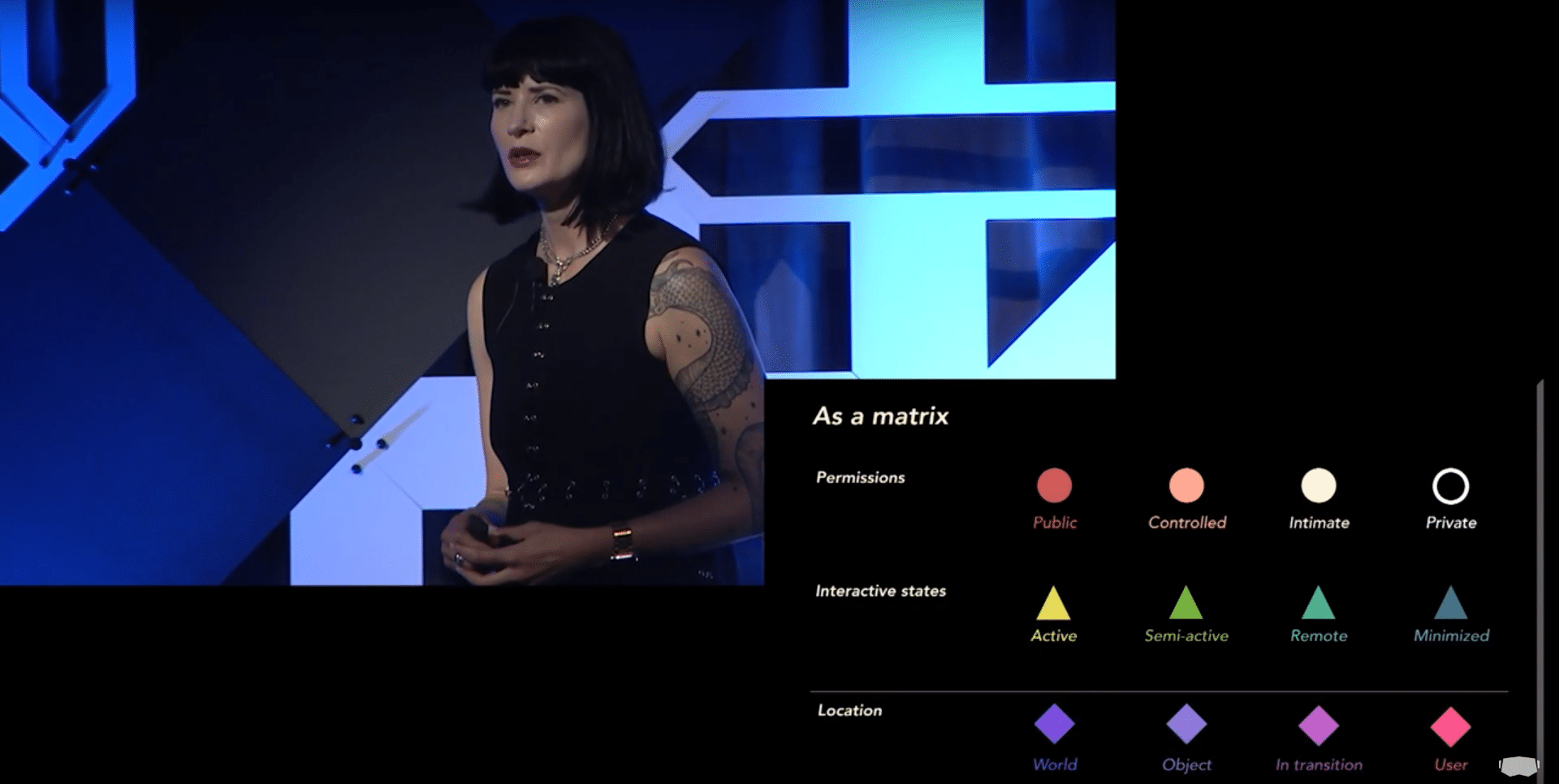

Drilling down, the AR interactions she raises include permissions (who controls AR graphics in a public park versus your bedroom), interactive states (how persistent are AR graphics — active versus background/minimized?), and location (are graphics anchored to users or objects?).

These aren’t questions with one answer but are rather thought exercises so we can begin to develop frameworks for when AR graphics are allowed and when they’re not. More importantly, what’s the hierarchy of control in terms of individuals choosing what they see and when?

“In controlled spaces that are semi-public spaces like stores or offices or malls, there is usually a commercial owner,” West said in reference to AR graphics in such spaces. “So the question is, who owns what we do in these spaces? You might say the landowner owns the permission set.”

That’s where things get tricky, as those permissions may conflict with individuals’ own permission settings when they’re in those spaces. Logically, and in alignment with today’s standards, individuals’ permissions should trump those of commercial spaces… but what about matters like safety?

“I used to think that the individual always trumps any sort of permission set that you’d have in a commercial space,” she said. “But then someone pointed out: if you’re in a mine and there’s a gigantic [digital] ‘do not go here’ warning sign, that will need to trump individuals’ ad blocking.”
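To make that precedence concrete, here is a minimal Python sketch of the rule West describes: a user's block list normally wins over what a space publishes, but safety layers override it. The names (`Category`, `Layer`, `visible_layers`) and the three categories are hypothetical illustrations, not anything Unity has proposed.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, List, Set


class Category(Enum):
    """Hypothetical layer categories, assumed for illustration only."""
    AD = "ad"
    INFO = "info"
    SAFETY = "safety"


@dataclass(frozen=True)
class Layer:
    name: str
    category: Category


def visible_layers(space_layers: Iterable[Layer],
                   user_blocked: Set[Category]) -> List[Layer]:
    """Return the layers a user sees in a space.

    Assumed rule: the user's block list beats the space owner's layers,
    except that safety layers are always shown.
    """
    shown = []
    for layer in space_layers:
        if layer.category is Category.SAFETY:
            shown.append(layer)   # safety overrides the user's blocking
        elif layer.category not in user_blocked:
            shown.append(layer)   # otherwise the individual's choice wins
    return shown
```

In the mine example, a user who blocks every category would still see the “do not go here” sign but not the owner's ads. Who gets to label a layer as a safety layer is exactly the kind of open question the talk raises, and this sketch does not answer it.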

There’s also the matter of what AR graphics are anchored to. Do they stay persistent with particular people, places and objects, or do they have more mobility? The answer is all of the above, but once again there will need to be frameworks to optimize for each scenario.

“Things could be anchored to the world, like fireworks over the Eiffel Tower,” said West, “or anchored to an object in transition when you pick it up and move it from one space to another. Or they can be anchored to the user, like if I have my email app open in front of me all the time.”
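The three anchoring modes West lists can be sketched as a small data model. This is an assumption-laden illustration rather than any engine's actual API: `AnchorKind`, `Anchor` and `resolve_position` are hypothetical names, and positions are simplified to plain 3D offsets with no rotation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple

Vec3 = Tuple[float, float, float]


class AnchorKind(Enum):
    WORLD = "world"    # fixed place, e.g. fireworks over a landmark
    OBJECT = "object"  # follows a physical object as it is moved
    USER = "user"      # follows the user, e.g. an always-open email app


@dataclass(frozen=True)
class Anchor:
    kind: AnchorKind
    offset: Vec3  # position relative to whatever the graphic is anchored to


def resolve_position(anchor: Anchor, object_pos: Vec3, user_pos: Vec3) -> Vec3:
    """Compute where a graphic should appear in world coordinates."""
    if anchor.kind is AnchorKind.WORLD:
        base = (0.0, 0.0, 0.0)  # offset is already a world position
    elif anchor.kind is AnchorKind.OBJECT:
        base = object_pos
    else:
        base = user_pos
    x, y, z = (b + o for b, o in zip(base, anchor.offset))
    return (x, y, z)
```

A world-anchored graphic resolves to the same spot wherever the user walks, while a user-anchored one moves with them. A real system would also need orientation and a way to hand an object anchor from one space to another, which this sketch leaves out.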

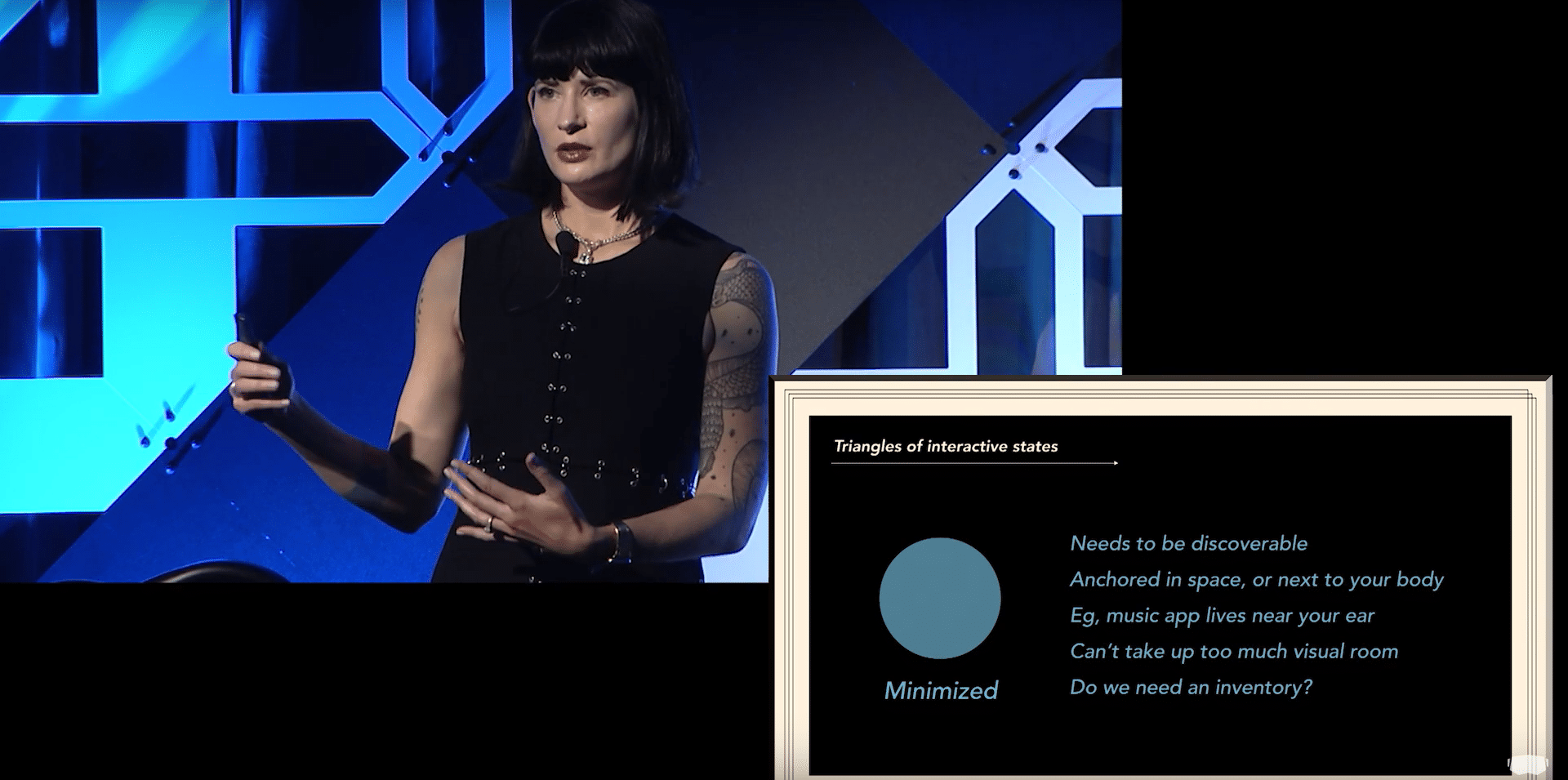

The persistent email app raises another question: the modes and states of our various AR apps. Today we have apps and software that transition between various states: open, minimized, backgrounded or, in the case of the smartphone, shoved into our pockets.

“Will we need a master inventory for all the apps you have so that you can pull them up at any moment?” West posed. “Today every app is just tied to your physical device… but when it comes to a spatial UI, and you’re just carrying around an AR headset, the paradigm is different.”

These are all key questions. Some of them are admittedly futuristic, but it’s arguably never too early to start thinking about how we’ll interact with and control the powerful technology that we project AR and spatial computing to be. We’ll want to make sure we’re thoughtful about it, and get it right.

“A lot of this has been validated by experiences that we’ve tried,” said West of the work at Unity Labs. “We’re really trying to figure out what people need to make the spatial computing future that really works. We want this to work because we think it’s really cool and we don’t want to F’ it up.”

See the full talk below.


Disclosure: The author of this post is involved with the San Francisco chapter of the VR/AR Association (mentioned above). Neither he nor AR Insider received payment for producing this article. Disclosure and ethics policy can be seen here.

Header image credit: VR/AR Association, YouTube