This article features the latest episode of The AR Show. Based on a new collaboration, episode coverage now joins AR Insider’s editorial flow including narrative insights and audio. See past and future episodes here or subscribe.

Where are we in AR’s lifespan? Where are we going and where have we been? There’s probably no better person to spend an hour breaking that down than Tony Parisi. His spot on the AR Show podcast was so informative that we’re cheating and including it in our weekly video series.

The wide-reaching discussion with Jason McDowall hit on several points in the spatial computing spectrum, from AR advertising to the hardware/device continuum. In addition to the audio link below, we’ve summarized some of those highlights in our normal fashion.

Starting Point

Starting with the present, it’s no secret that the scale is in mobile, given 1.1 billion AR-compatible smartphones. Mobile AR is concentrated in a few different experiences like social lenses, which will expose AR to mainstream audiences before it branches into a broader set of use cases.

“AR, it’s still being driven by social media apps,” said Parisi. “So that’s Facebook and Snap primarily and Instagram is introducing it. There’s also buying utility apps: Amazon AR shopping, IKEA Place. Those have a lot of utility and it’s a place we’re going to see a lot more stickiness.”

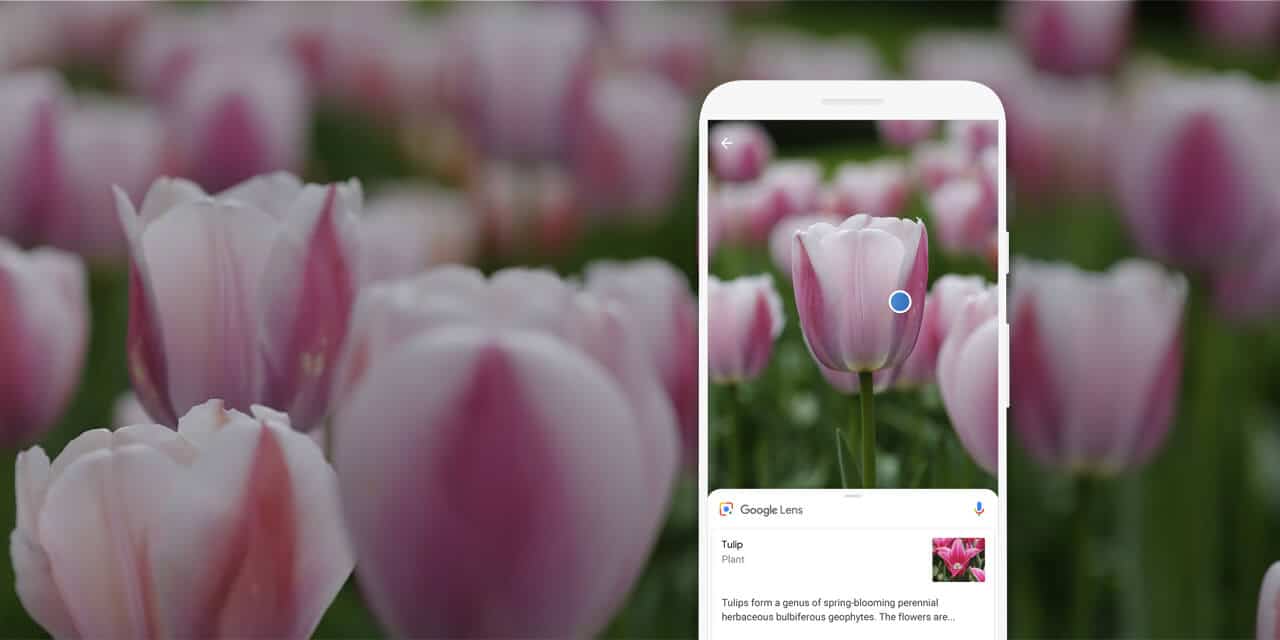

Another place where AR will develop — beyond graphical overlays for fun or utility — is using the camera to identify things, a la Google Lens. This is one of our favorite prospects for AR because it combines a familiar and frequent utility (search) with a more intuitive input (camera).

“There’s a companion to ‘try-before-you-buy’ retailing which is the camera as your eyes, recognizing things,” said Parisi. “Whether it’s logos, QR codes or objects, once you have that capability, you can now overlay information so it creates utility in a completely different way.”

Where’s the Money?

All of this naturally leads to the question of monetization. Fortunately, the above examples, and several other AR use cases, are naturally monetizable. Brands are increasingly keen on AR for its ability to demonstrate products immersively and create higher levels of engagement.

In fact, Unity has an advertising business that reaches 1.7 billion people. Given its reach in game development, it essentially built an ad network where developers can opt in to monetize mobile games with various forms of interstitial ads. That’s most often video, but now also includes AR.

“We realized that with the magic of ARKit and ARCore, we could deliver interactive AR experiences into that same stream,” said Parisi. “So instead of just a linear video ad, you can have an interactive experience and then if you turn the camera on, you get an AR version of that.”

AR’s reach isn’t enough to get most brands excited, but it will get there. Meanwhile, engagement levels are promising for reasons stated above. In fact, Parisi reports that effectiveness causes AR ads to be more expensive (more revenue for developers and Unity) than traditional formats.

“The belief is, and we’re proving this out now through early pilots, that it’s more engaging,” he said. “First of all, it’s interactive, not just linear video. Second, it’s relevant and in context. I can get a product display out in front of me, and see what that looks like, like the IKEA scenario.”

AR Accelerants

All of the above is impacted by orbiting factors like advancements in computer vision, AI and of course 5G. As we’ve examined, the latter is about more than just faster speeds and low latency. Parisi believes it will enable and inspire new/native product thinking for AR.

“With 5G, the idea is we could deliver that much more immersive content,” he said. “The street will find its use for those things…I’m Old Guard at this point…I didn’t see Uber coming, so I’m way out of it. What’s going to happen with those sets of tools 3 to 5 years out… the mind boggles.”

The same goes for machine learning (ML) as an intelligence backbone for AR. That’s especially true for computer-vision-intensive flavors of AR such as visual search. Speaking of visual search, Google and others are investing heavily in ML to support AR, among many other applications.

“Machine learning is overtaking and outpacing everything else right now,” said Parisi. “There are so many different use cases for machine learning and it’s proving out in so many different ways that a lot of industry appetite and money are flowing that way.”

Interlinked AR

Another important question is AR’s delivery vessel. Though we’re in an app-based mobile world, apps could be too siloed for AR. Parisi, a long-time web VR guy, believes that the web’s linked architecture is more congruent with the dynamism that AR needs, with some room for apps.

“If you’re on Twitter like I am a million times a day and you see a tweet and want to tap on [it], a lot of 3D content should work that way,” he said. “It really depends on where you’re trying to play… simple lightweight storytelling versus long form content. I think these forms will all coexist.”

That equation changes a bit when we’re eventually in a glasses-dominant era. The dynamism and alternate inputs for such a form factor may require something more frictionless than the current app paradigm. This comes into play when discovering new content through daily travels.

“You can imagine with smart glasses, you’re walking down the street and every time you want to do something you’re invited to do an app install,” said Parisi. “I just think it’s not going to happen that way. It’s going to have to be frictionless somehow.”

Training Camp

The sweet spot will probably be something that combines apps and web interactions. For frequently used functions, we may have apps, just as we currently use five or six apps regularly on our smartphones. But serendipitous real-world discovery can’t have all that download friction.

“There’s no reason to think there might not be the same sort of interplay, where future smart glasses will have a handful of apps… activities that are first and foremost that are your tool belt,” said Parisi. “But everything else is discovered by links, object recognition and machine learning.”

Speaking of AR glasses, all of the above mobile AR dynamics are really just training camp for a glasses era. That’s years away, but the work being done today in mobile AR is warming up users as well as developers who have to gain native footing for immersive interactions.

“We need a confluence of electronics and style, but the belief in the industry and the shared hope is that what we’re building now with handset-based AR is a stepping stone for smart glasses,” he said. “These things will all converge in some single-digit number of years.”

Listen to the full episode on the AR Show by clicking below.

For deeper XR data and intelligence, join ARtillery PRO and subscribe to the free AR Insider Weekly newsletter.

Disclosure: AR Insider has no financial stake in the companies mentioned in this post, nor received payment for its production. Disclosure and ethics policy can be seen here.