XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, narrative analysis and top takeaways. Speakers’ opinions are their own. For a full library of indexed and educational media, subscribe to ARtillery PRO.

Our headline in last year’s coverage of Snap’s Partner Summit asserted that the company was doubling down on AR. Now, one year later, it’s tripling down as a feedback loop reinforces AR’s revenue-generating potential and its central role in the “camera company’s” future.

Beyond a full slate of AR-centric announcements, the presentation itself was delivered with a healthy dose of AR. Showing that AR is in Snap’s DNA, the technology rendered a keynote-scale stage, a departure from the Zoom-produced developer conferences that are common today.

But the more substantial commitment to AR was Snap pushing the ball forward on many fronts. As always, these moves map to our construct of Snap’s AR virtuous cycle. The cycle consists of developers, users and advertisers, each of which feeds into the others and spins out revenue.

The cycle starts by attracting developers with AR creation tools. Their AR lenses then attract users and boost engagement rates. A growing audience attracts more lens developers, which further expands the library and, in turn, attracts more users. And advertisers are drawn in all along the way.

https://youtu.be/Aawlz4b2Nvg

Democratizing AR

Starting with developers, several speakers made a point of reminding us that Lens Studio is the same platform Snap uses in-house for AR creation. It democratizes that capability through a free and continually expanding toolset for building AR lenses. Some creators even make a living from it.

To open the platform up further, one of the event’s highlights was Snap ML, which lets developers bring their own machine learning models. Developers who want to play inside Snap’s walled garden for additional traction and distribution can migrate existing software into Lens Studio.

Launch partners include Wannabe’s popular shoe try-on AR app and Prisma’s signature artistic selfie renderings. Altogether, Snap wins from a broader slate of AR, and developers win from greater distribution. Both win from the incremental users that new lenses attract.

Lens Studio also now has more tools to help developers’ lenses get discovered, a key feature given that the library is getting crowded with one million lenses to date. For example, lens creators can tag their lenses so that they’re surfaced whenever users scan thematically related objects.

“If you’ve got a lens that puts AR creations into the sky, simply tag ‘sky’ when you submit in Lens Studio,” said Snap’s camera lead Carolina Arguelles from the virtual stage. “Using our computer vision capabilities, any time someone scans the sky, your lens will appear.”

Eyes and Ears

Moving on to users, Local Lenses apply the AR Cloud principle of shared, persistent graphics that are spatially anchored to the world. The feature will use existing Snaps to piece together spatial maps, which is similar in some ways to the crowdsourcing done by Niantic/6d.ai and Facebook/Scape.

Snap is also applying to users the same discoverability principle examined above for developers. Most notable is Voice Scan, which is sort of like Alexa for AR lenses: natural language processing can return lenses in response to queries like “make my hair pink.”

This continues Snap’s longstanding design principle of lens-based experiences that require as few taps as possible. The app itself famously opens right to the camera. Now, its top-level navigation makes lenses and the Scan feature more immediately accessible in a new Action Bar.

Speaking of Scan, it expands beyond the fun and whimsy of selfie filters to become more of a utility. It’s like Google Lens in that it can contextualize real-world items. But instead of Google’s approach of tapping into its knowledge graph, Snap uses partners in various verticals for object recognition.

But where this gets interesting (read: monetizable) is to use Scan for product searches. Just as it recognizes a growing set of objects like pets, flowers and math problems, it can recognize brand logos. From there, it can launch brand-customized experiences for virtual shopping.

Natively Monetizable

This is where the third stage of the virtuous cycle comes in: advertisers. Snap is essentially building user experiences that advertisers can repurpose or customize for brand engagement. That natively monetizable quality is what makes for great ad media (e.g., web search).

In that sense, Snapchat Scan can double as a sort of barcode scanner for the physical world. That includes scanning information on product packaging as well as the brand logos noted above. This is especially suited to an era of social distancing, as scans can open virtual showrooms for product discovery.

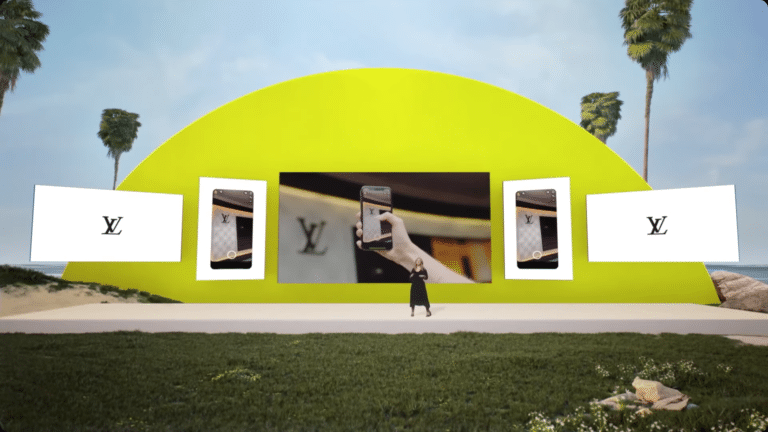

“Some of the most iconic logos in the world are scannable everywhere,” said Arguelles of Scan’s utility and commercial potential. “Soon, you’ll be able to scan Louis Vuitton’s monogram and be transported to a virtual installation which displays their classic trunks and latest collection.”

This is also notable in that it’s a form of “marker-based” AR, which AR circles often consider a primitive form of the technology. But it has virtues: it’s simple and user-friendly, and the marker itself acts as a prompt or reminder that AR is there (note: Apple is working on something similar).

And that embodies Snap’s AR persona overall. It has made AR simple and accessible, even though it’s not the most technically advanced flavor of the technology. It wants to impress regular folks rather than fellow tech people. And that’s what makes Snap today’s consumer AR leader.

See the full talk above, and see ARtillery Intelligence’s related report on taking lessons from Snap’s AR lead.