Amazon has gone back and forth on visual search – a flavor of AR that lets you identify and contextualize items with your smartphone camera (and someday smart glasses). The thought is that Amazon can benefit from visual search as an additional front end for eCommerce.

Past visual search efforts from Amazon include partnering with Snap to be the product database that sits behind Snap Scan. At the time, we saw it as one of Amazon’s experimental forays into visual search, to be followed by its own branded offering. That has continued to unfold slowly but surely.

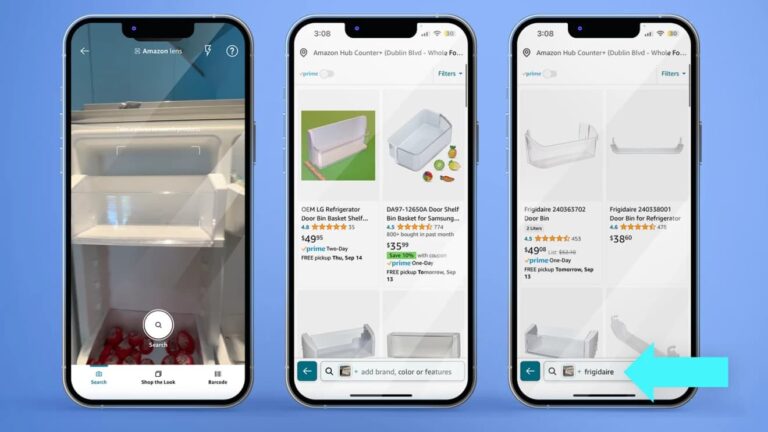

The latest effort lets shoppers search for items on Amazon using images of visually similar items that they encounter in the physical world. For added capability and familiarity, users can then append text qualifiers to zero in on certain qualities (think: “the same shirt in green”).

All of the above can apply to physical objects of all kinds – everything from pets to flowers to landmarks. But where the rubber really meets the road is with physical goods such as accessories. That’s where Google is headed with visual search, and it’s obviously where Amazon’s interests lie.

Surface Area

So how does this work? Amazon users can take a picture of a physical object and use it as a search input to find similar items. But unlike Google Lens’ live camera feed, this requires a static image, either taken on the spot or pulled from the user’s camera roll.

But the real magic, as noted, is the ability to add text descriptors to the mix. This offers the best of both worlds: images are optimal for querying advanced physical properties, while text is better for further qualifications that aren’t visible in a given image, such as product manufacturer.

If this sounds familiar, Google’s Multisearch does something similar. As we’ve examined, it lets you use images (or a live camera feed) to contextualize objects encountered in the physical world. And like Amazon’s latest play, text can then be entered to filter for specific attributes.

Fitting use cases include things like finding replacement parts for appliances, or style items where the tag/brand isn’t visible in a given image. The latter can offer opportunities to identify apparel that’s spotted in the wild – a potentially valuable use case for fashion-forward shoppers.

The point of all the above is to expand the surface area of search. By offering more search inputs and modalities, Amazon can boost overall query volume in various contexts. As with Google, Amazon’s query volume is the tip of the spear for lots of revenue-generating activity.

AI Meets AR

Speaking of surface area, Amazon’s visual search is available on its mobile app, but it’s unclear where else it will go. Google, by comparison, has expanded Lens to several user touch points to incubate and expose the product – from the Google home page to the Chrome address bar.

This makes sense, as visual search is closer to Google’s core product than Amazon’s. For Google, it’s one way – along with voice search – to future-proof its core business and expand query volume. The latter applies to Amazon as well, but perhaps less urgently as a non-core function.

Amazon, meanwhile, continues to push the ball forward with AR more generally. Building on past features that let users virtually try on clothes and preview furniture and decor, Amazon recently announced that it has expanded the capability to tabletop items like lamps and coffee makers.

And the timing is opportune for all the above. Both AR and its visual search subset are currently riding the tailwinds of AI. Of all the flavors of AR, visual search is the most reliant on AI, including computer vision and object recognition. We’ll see if that association propels it any further.