XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, narrative analysis and top takeaways. Speakers’ opinions are their own. For a full library of indexed and educational media, subscribe to ARtillery PRO.

You’ve likely heard all about Facebook Connect 7, including the keynote unveiling of Oculus Quest 2 (our pre-order is in). Leaving that coverage to the VR news outlets that do it best, we’re devoting this week’s XR Talks (video below) to a deeper dive into AR strategic takeaways.

Many of these come later in the keynote, during Facebook Reality Labs Chief Scientist Michael Abrash’s annual technical deep dive. Before digging into that, we’d be remiss not to give a nod to Mark Zuckerberg’s proclamation that Facebook’s V1 smart glasses will arrive in 2021.

Details about the glasses are scant, but we do know that Facebook is working with Ray-Ban maker EssilorLuxottica. This signals a design path that, as we project for Apple, will prioritize wearability and social sensibilities over advanced optics, at least in version 1.

Also under the AR umbrella is Project Aria. This is Facebook’s field research for AR glasses. Devoid of optical displays, these research frames have cameras and sensors so Facebook can gain insight about social dynamics and ethics around AR glasses (and avoid glasshole mishaps).

Human-Oriented Computing

Moving on to the deeper AR topics in Abrash’s address, the Chief Scientist started off as he often does, by invoking computing history. In this case, he framed AR and VR as the eventual drivers of the next inflection in human-oriented computing, a lineage that began with the graphical user interface.

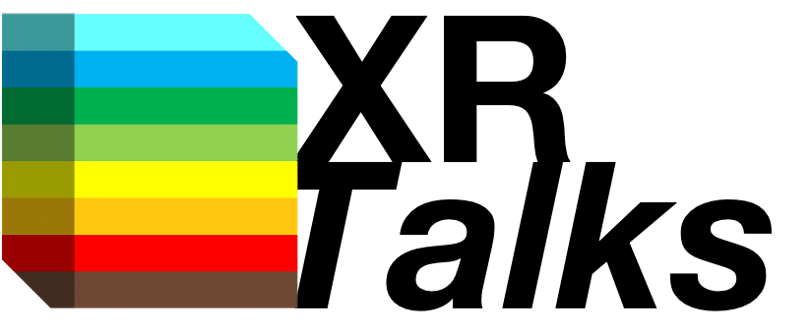

But to get to that stage, we first must tackle three main areas: input/output, machine perception and interaction. Starting at the top, input/output is all about the interface. There are well-known challenges in controlling things in AR and VR, because they depart from familiar inputs like keyboards and mice.

So Facebook Reality Labs is working on a few input mechanisms that will be more native to the experience, including haptics and electromyography (EMG). One of these, born from Facebook’s acquisition of CTRL Labs, is an armband that translates signals from the motor neurons in one’s wrist into digital inputs.

Another big area of input/output research is sound. Given that hearing is one of our primary senses, audio will be an important component of augmenting our realities in holistic ways. As we’ve examined, it also has advantages as a more discreet informational “overlay” than optical AR.

This ties in with Abrash’s third main category: Interaction. Beyond the mechanics of the interface and the content (explored below), the “languages” we use to meaningfully interact with digital objects (and with each other) will be a key puzzle piece in the dream of persistent AR.

Layered Meaning

The second item on Abrash’s list is perhaps the most complex and important component of AR’s future: machine perception. To augment one’s reality, a device has to first understand that reality. And that has to happen on geometric (shapes/depth) and semantic (meaning) levels.

This is the area otherwise known as the AR cloud. The basic idea is that the requisite spatial maps of the world will be so data-intensive that AR devices will need a cloud repository they can tap on demand, rather than reinventing the wheel with each experience.

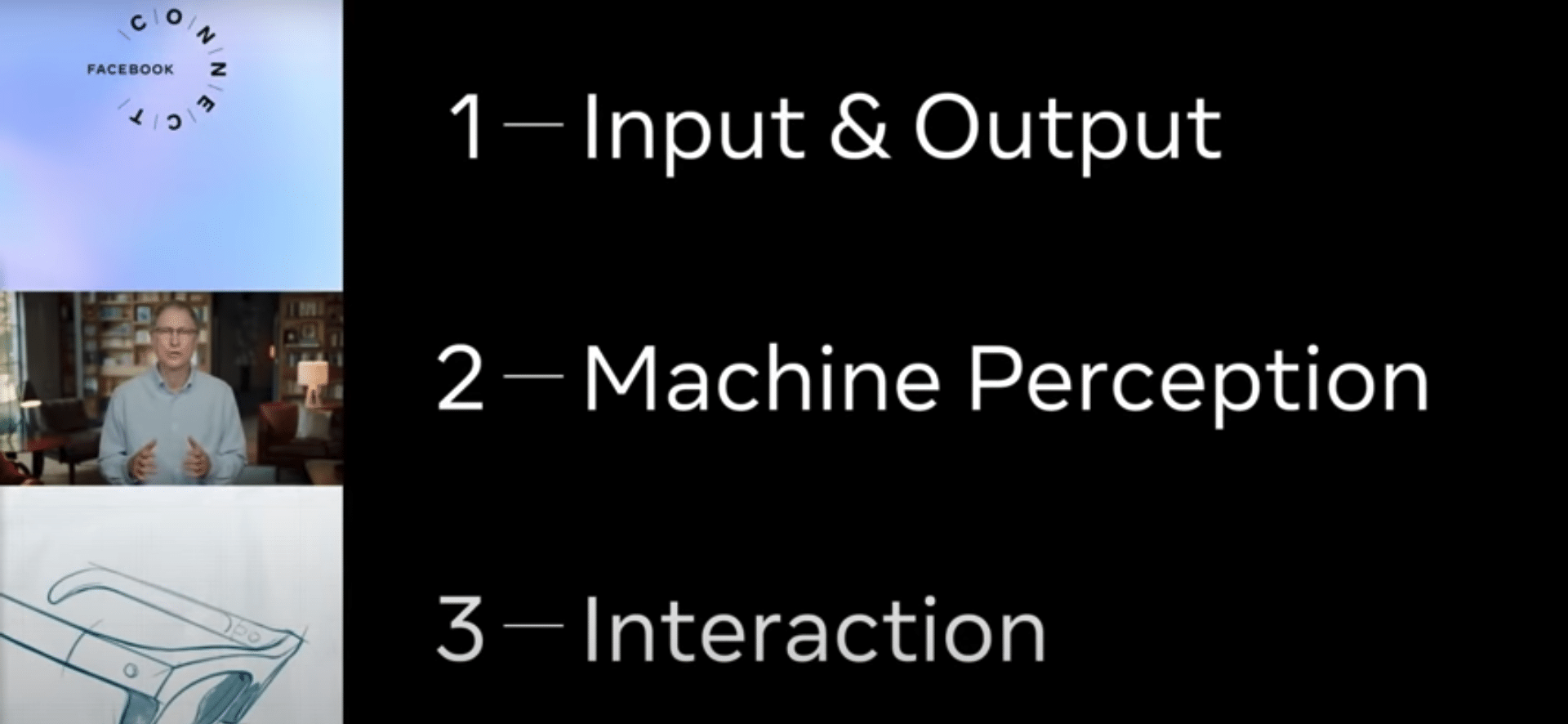

Most tech giants chasing the AR dream have a different flavor of the AR cloud and Facebook’s is Live Maps. Abrash outlines three key layers, starting with something we already have: location data. Some portion of the heavy lifting can be done by knowing where you’re standing.

The second layer is an index layer. Think of this as Google’s search index for the physical world, à la Internet of Places. This involves the geometry of a given room, stadium or hiking path. Like anything else, some of this data will be public and some will be private, and it will be perpetually evolving.

The third layer is content, or what Abrash describes as ontology. These are the more personal and dynamic relationships between objects and their meaning to a given person. This will be the layer that has the most permissioned access, and will do things like help you find your keys.

Fourth Modality

One of the challenges with the AR cloud, not specific to Facebook, is assembling all of that data and keeping it accurate and up to date. Niantic, for example (with the help of 6d.ai’s acquired tech), uses a crowdsourcing approach in which Pokemon Go players scan the world while playing.

Facebook’s approach goes back to the aforementioned Project Aria. Though it will start out as internal research to gain insight into the social dynamics of smart glasses, an eventual version of these glasses could be used to crowdsource the spatial mapping of the inhabited earth.

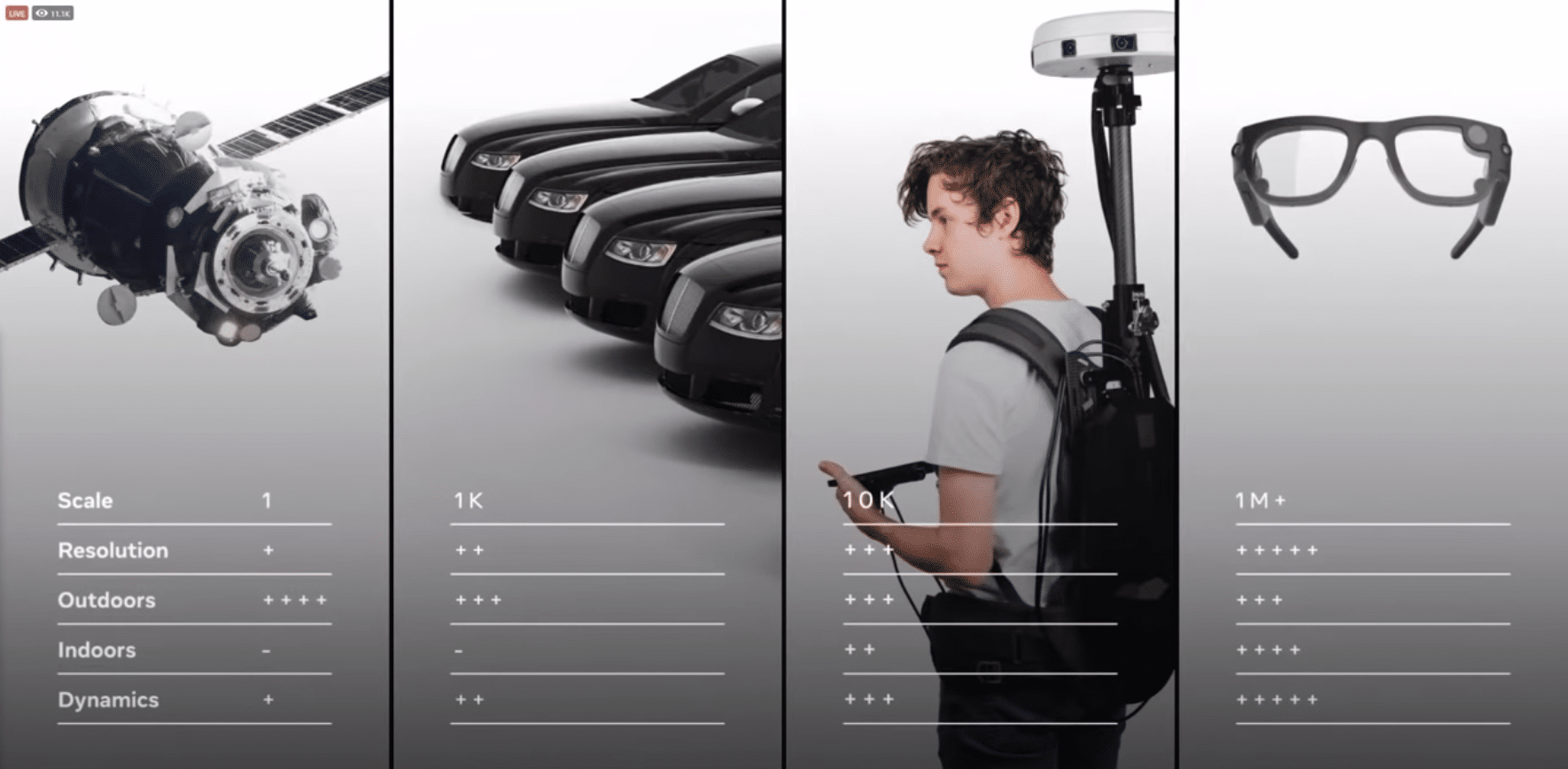

This creates a fourth modality for mapping as we know it. After satellites (GPS), cars (Street View) and backpacks (exploratory Street View), glasses drill down one more level of granularity. This will be a key tool for mapping the “index” layer mentioned above, and for doing so from a first-person perspective.

Think of the crowdsourced approach like Waze, in that users benefit from the data while collecting it and feeding it into the collective cloud. As you can imagine, the key word here is scale, which Facebook has in its sheer user base. Yet how much of that user base will adopt AR glasses remains a question mark.

More from Abrash and the full keynote can be seen in the video below. Abrash’s address starts at 1:13:48.