XR Talks is a series that features the best presentations and educational videos from the XR universe. It includes embedded video, narrative analysis and top takeaways. Speakers’ opinions are their own. For a full library of indexed and educational media, subscribe to ARtillery PRO.

The AR cloud has turned three. This traces back to the principle’s origin in a 2017 landmark editorial by AR veteran and thought leader Ori Inbar. To celebrate its birthday, Inbar gathered and moderated a panel of leading thinkers at a recent AWE Nite NYC (video below).

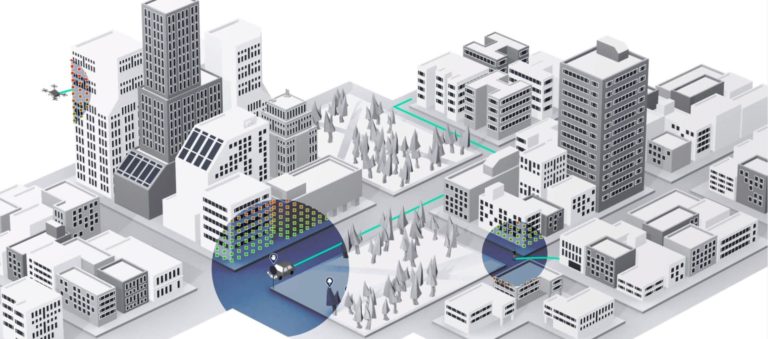

Before diving in, what is the AR cloud? For those unfamiliar, it’s a foundational principle that helps contextualize the future of augmented reality. It’s all about data that’s anchored to places and things, which AR devices can ingest and process into meaningful content.

In order for AR to work in the ways we all envision, it first must understand its surroundings. Before placing graphics in a room, an AR device has to understand that room. And because the world’s spatial mapping data is too extensive to fit on one device, it must tap the cloud.

What’s in a Name

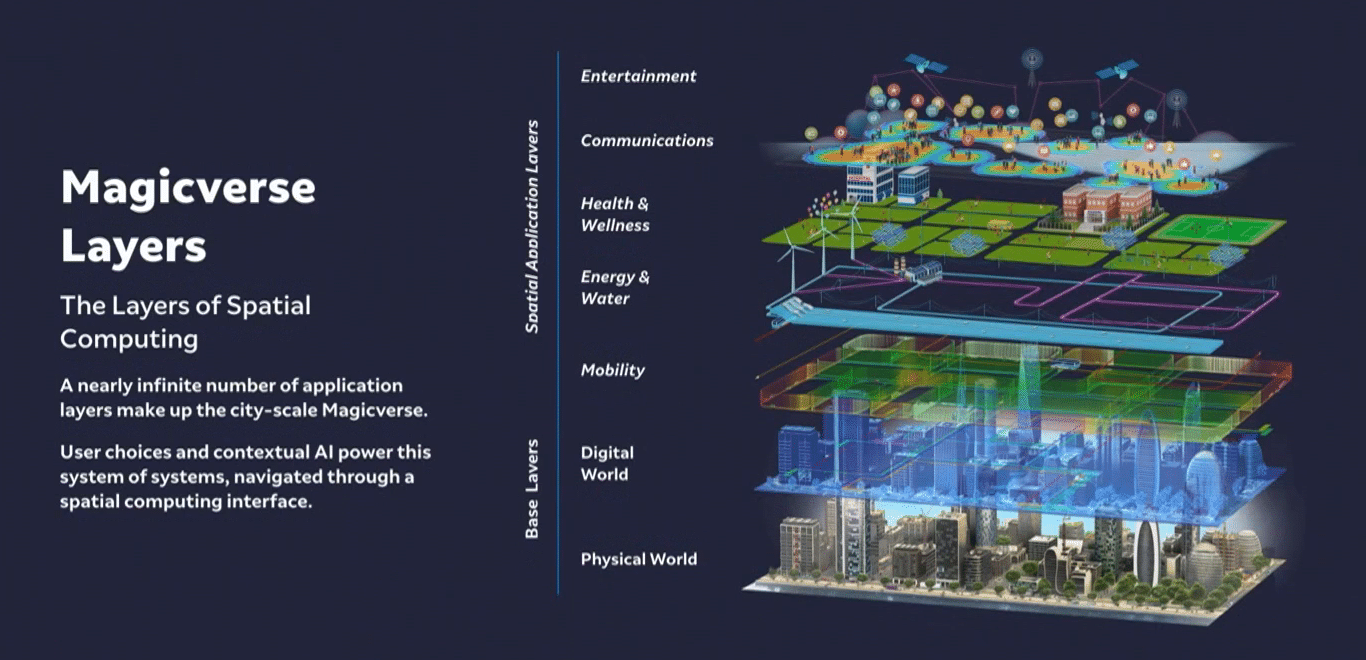

One of the AR cloud’s attributes over the past three years is its many names. Representing varied approaches from tech giants, it’s been called everything from LiveMaps (Facebook) to Magicverse (Magic Leap). And nearly everyone uses the “layers” metaphor (see below).

Though naming isn’t as critical as other fundamental aspects of the AR cloud’s construction, ownership and use, it is important to work with standardized language to advance the idea. The panel mostly agreed that the original term — AR cloud — is the most fitting.

Going deeper into AR cloud strategies, AR cloud pioneer and 6d.ai founder Matt Miesnieks believes AR cloud startups are challenged because building an AR cloud is such an inherently large undertaking. Mapping the physical world requires scale and deep pockets on the order of Google’s or Snap’s.

Therefore startups should aim for best-of-breed technology that builds on or adds to these larger companies’ AR cloud initiatives. If this sounds familiar, it was the route taken by 6d.ai before it was acquired by Niantic… which will now deploy the technology at Pokémon-scale.

Scale Imperative

Speaking of tech giants, Google has AR cloud ambitions, evident in its interlocking spatial computing efforts. These include visual search through Google Lens, and visual navigation through Live View. Both use a combination of computer vision and Google’s existing data.

For example, Google Lens is a strong visual search engine because Google has a rich image database and knowledge graph (its own AR cloud) that it can apply for object recognition. These are the assets acquired from being the world’s search engine for two decades.

Along the same lines, Google Live View has devised a clever hack to localize in GPS-spotty urban areas using the camera to pinpoint a given device’s location. Here again, it uses existing data — in this case Street View imagery — to localize and navigate users to a destination.

But according to Google’s Justin Quimby, these first-party applications from Google are only the beginning. The AR cloud starts with underlying geospatial and object-recognition data, but the front-end magic will be in the apps yet to be developed, which can bring it to the masses.

The Application Layer

This all leads to the question: what will be AR’s killer apps, and when will we see them? One of the challenges, according to the Open AR Cloud’s Jan-Erik Vinje, is that consumers don’t yet know what they want from AR. It’s like the Henry Ford (mis)quote about faster horses.

Miesnieks, now representing one of the few AR killer apps to date in Pokémon Go, believes the answer lies in elevating the activities we already know and love, a la AR training wheels. Pokémon Go did this with mobile gaming, and Snap Lenses did it with social sharing.

For example, Miesnieks cites communications as an inherent human need. If the AR cloud can be applied to enhance communications — which Snapchat has already done to a degree — that could represent the technology’s eventual scale. It’s also a high-frequency use case.

Vinje calls this the “Tesla roadster moment,” when a technology goes from early enthusiasts to a cultural phenomenon. This could happen, says Quimby, in the world’s recovery from a pandemic, when pent-up energy engenders receptiveness to AR-fueled real-world interaction.

We’ll pause there and return in Part II of this series with the panel’s musings on the AR cloud’s privacy, security and interoperability, including insights from XRSI’s Kavya Pearlman. Stay tuned.