Tech giants see different versions of spatial computing’s future. These visions often trace back to their core businesses. Facebook wants to be the social layer to the spatial web, while Amazon wants to be the commerce layer and Apple wants a hardware-centric multi-device play.

Where does Google fit in all of this? It wants to be the knowledge layer of the spatial web. Just like it amassed immense value indexing the web and building a knowledge graph, it wants to index the physical world and be its relevance authority. This is what we call the Internet of places (IoP).

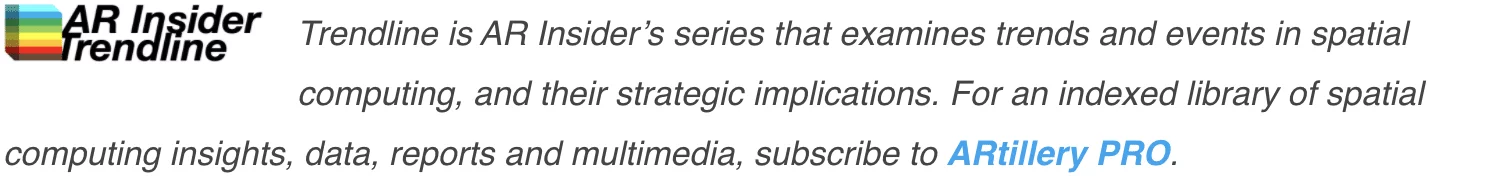

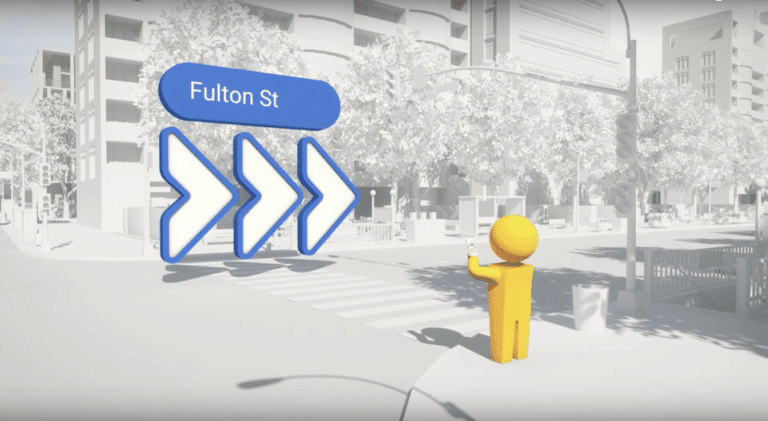

Beyond the financial incentive to future-proof its core search business with next-generation visual interfaces (per our ongoing “follow the money” exercise), Google’s actual moves triangulate an IoP play. These include its “search what you see” Google Lens and its Live View 3D navigation.

Clues we’re tracking continue to validate this path. Following its recently unveiled storefront visual search feature, Google last week announced a new crowdsourcing effort for assembling 3D maps. This could help it scale up the underlying data that it needs to get closer to an IoP reality.

Planet Scale

Going deeper on Google’s latest announcement, a “connected photos” feature in the Android Street View app lets users contribute imagery to the Google Maps database. It will let them walk down a given street or path with an upheld smartphone as the app captures several frames.

Google will take care of the 3D image stitching on the back end. This means that for the first time, a 360-degree camera isn’t needed to capture Street View imagery. Of course, the quality and visual acuity of these images won’t match those captured by its Street View cars, but the approach will scale better.

That brings us to Google’s intentions for this move. Its stated purpose is to let users help improve Street View and to get last-mile imagery where cars can’t travel. But we also think this move will help it continue to assemble 3D image data for its AR cloud and IoP ambitions.

Put another way, motivating users to capture wide swaths of imagery in the above ways feeds into a more comprehensive mesh of 3D image data. Having robust 3D image data gets Google closer to spatially-anchored AR experiences that have location relevance. In other words, an IoP.

The crowdsourced approach is also aligned with common AR-construction approaches. Niantic is doing something similar by capturing real-world spatial maps from Pokemon Go players. To enable “AR everywhere,” spatial mapping needs to happen at planet scale, which requires some help.

World-Immersive Properties

User participation plays out on a few levels. In addition to image capture, there will be social and user-generated content. For example, Earth Cloud Anchors (see above) let users geo-anchor digital content for others to view, which could feed into an IoP index.

Resulting use cases could include reading restaurant reviews by holding up your phone to the menu, or leaving geo-anchored notes for friends who have permissioned access. This “social layer” to the spatial web is something Facebook is also angling towards, as noted.

Collectively, the above use cases, whether images or social messaging, paint a picture of a spatial web that offers activities similar to those of today’s 2D web. That’s good, as familiar activities will serve as “training wheels” to acclimate users and help consumer AR gain cultural acceptance.

Beyond familiar use cases, there will also be “native” activities on the spatial web, given its unique world-immersive properties. Many of those use cases haven’t been imagined yet, and some could be unlocked as underlying technologies like LiDAR evolve and cycle into ubiquity.

Meanwhile, some comfort will already be present given Gen Z’s camera affinity. Snap and others will also continue to stimulate demand for world-immersive digital content. In that way, all these AR-driven companies could help each other reach similar but separate IoP endpoints.