As you likely know, one of AR’s foundational principles is to fuse the digital and physical. The real world is a key part of that formula, and real-world relevance is often defined by location. That same relevance and scarcity are what drive real estate value: location, location, location.

Synthesizing these variables, one of AR’s battlegrounds will be in augmenting the world in location-relevant ways. That could be wayfinding with Google Live View, or visual search with Google Lens. Point your phone (or future glasses) at places and objects to contextualize them.

As you can tell from these examples, Google will have a key stake in this Internet of Places, or what we’ve begun to call the metavearth. It’s highly motivated to future-proof its core business, where the camera will be one of many search inputs.

And it’s well-positioned to do so, given existing assets. For example, it utilizes imagery from Street View as a visual database for object recognition so that AR devices can localize. That forms the basis for its storefront recognition in Google Lens and urban navigation in Live View.

Turf Battle

Google isn’t alone. Apple signals interest in location-relevant AR through its geo-anchors. These evoke AR’s location-based underpinnings by letting users plant and discover spatially-anchored graphics. And Apple’s continued efforts to map the world in 3D will be a key puzzle piece.

Meanwhile, Facebook is similarly building “Live Maps.” As explained by Facebook Reality Labs’ chief scientist Michael Abrash, this involves building indexes (geometry) and ontologies (meaning) of the physical world. This will be the data backbone for Facebook’s AR ambitions.

Then there’s Snapchat, the reigning champion of consumer mobile AR. Though selfie lenses propelled it so far, Snap’s larger AR ambitions will flip the focus to the rear-facing camera to augment the broader canvas of the physical world. This is the thinking behind its Local Lenses.

Speaking of consumer mobile AR champions, Niantic is a close second given the prevalence of Pokémon Go and the geographic augmentation that’s central to its game mechanics. And its bigger play — the Real World Platform — aims to offer robust geolocated-AR as a service.

Beyond tech giants and mid-market players, there are compelling startups positioning themselves at the intersection of AR and geolocation. Most notably, Gowalla’s rebirth brings the company’s location-based social UX chops to a new world of geo-relevant AR.

Cloud Convergence

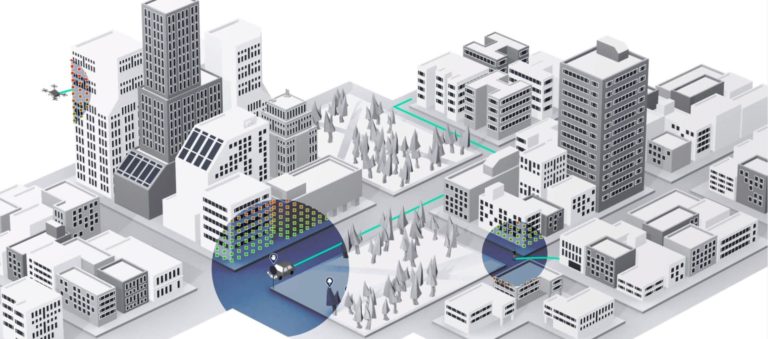

If any of the above sounds familiar, it’s aligned with a guiding principle for AR’s future: The AR Cloud. As AR enthusiasts know, this is a conceptual framework in which invisible data layers coat the inhabitable earth to enable AR devices to trigger the right graphics (or audio content).

As background for those unfamiliar, AR devices must understand a scene and localize themselves before they can integrate AR graphics believably. That happens with a combination of mapping the contours of a scene, and tapping into previously-mapped spatial metadata.
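To make the "previously-mapped spatial metadata" idea concrete, here is a minimal sketch of the coarse first step: given a rough device position, look up which stored anchors are close enough to matter. Everything here is hypothetical and for illustration only (the `Anchor` type, the `nearby_anchors` helper, the sample coordinates); none of it reflects how any company named above implements localization, which also relies on visual matching rather than GPS alone.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass(frozen=True)
class Anchor:
    """Hypothetical record of a previously mapped, geolocated AR anchor."""
    name: str
    lat: float
    lon: float


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def nearby_anchors(anchors, lat, lon, radius_m):
    """Return anchors within radius_m of the device, nearest first."""
    scored = [(haversine_m(lat, lon, a.lat, a.lon), a) for a in anchors]
    scored.sort(key=lambda pair: pair[0])
    return [a for dist, a in scored if dist <= radius_m]


# Made-up sample data: two anchors on the same block, one across town.
ANCHORS = [
    Anchor("mural", 37.7755, -122.4194),
    Anchor("cafe", 37.7750, -122.4194),
    Anchor("far_away", 37.8000, -122.4194),
]

if __name__ == "__main__":
    for anchor in nearby_anchors(ANCHORS, 37.7750, -122.4194, radius_m=100):
        print(anchor.name)
```

In practice a lookup like this would only narrow the candidate set. The device would then refine its pose by matching what the camera sees against the mapped scene.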

But true to all of the above efforts, it won’t just be one cloud as its moniker suggests. All of these geo-located AR ambitions will compete. Much like the web today, the AR cloud will ideally have standards and protocols for interoperability, while allowing for proprietary content and networks.

But instead of websites, these proprietary points of value will be in “layers.” The thought is that AR devices can reveal certain layers based on user intent and authentication. View the social layer for geo-relevant friend recommendations and the commerce layer to find products.
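As a rough illustration of that layer model, the sketch below filters a set of layers by what the user is trying to do and whether they are signed in. The `Layer` type, the intent labels and the `visible_layers` helper are all assumptions made up for this example. No AR cloud standard defines them today.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    """Hypothetical AR content layer tied to user intents."""
    name: str
    intents: frozenset   # intents this layer serves (illustrative labels)
    requires_auth: bool  # whether the user must be signed in to see it


def visible_layers(layers, intent, authenticated):
    """Names of layers that match the intent and the user's auth state."""
    return [
        layer.name
        for layer in layers
        if intent in layer.intents and (authenticated or not layer.requires_auth)
    ]


# Made-up layers mirroring the social and commerce examples in the text.
LAYERS = [
    Layer("social", frozenset({"friends"}), requires_auth=True),
    Layer("commerce", frozenset({"shopping"}), requires_auth=False),
]

if __name__ == "__main__":
    print(visible_layers(LAYERS, "friends", authenticated=True))
    print(visible_layers(LAYERS, "shopping", authenticated=False))
```

The point of the sketch is the separation of concerns: the spatial map says where things are, while each layer decides what to show and to whom.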

There are of course several moving parts. 5G is expected to help sharpen location precision for geolocated AR. And there’s ample spatial mapping required. How will it all converge? This is the theme of our new series, Space Race, where we’ll break down who’s doing what.

Stay tuned in the coming weeks as we profile the geolocation-AR strategies of all of the players mentioned above, and more that continue to develop.