As you likely know, one of AR’s foundational principles is to fuse the digital and physical. The real world is a key part of that formula, and real-world relevance is often defined by location. That same relevance and scarcity are what drive real estate value: location, location, location.

Synthesizing these factors, one of AR’s battlegrounds will be in augmenting the world in location-relevant ways. That could be wayfinding with Google Live View, or visual search with Google Lens. Point your phone (or future glasses) at places and objects to contextualize them.

As you can tell from the above examples, Google will have a key stake in this “Internet of Places.” But it’s not alone. Apple signals interest in location-relevant AR through its geo-anchors and Project Gobi. Facebook is building “Live Maps,” and Snapchat is pushing Local Lenses.

These are a few utilitarian, commerce, and social angles. How else will geospatial AR materialize? What are its active ingredients, including 5G and the AR cloud? This is the theme of our new series, Space Race, where we break down who’s doing what, continuing here with Facebook.

Network Effects

As with the players we’ve profiled so far in this series (Google and Apple), Facebook’s geospatial AR ambitions trace back to its core business. In broad terms, that business’s mission is to connect the world socially and build network effects that can be monetized through advertising.

So how does that translate to AR products and business moves? Facebook’s AR play is at a much earlier stage than its already-commercialized VR activities. But it has also been fairly forthright about its AR ambitions, the research underway, and the billions in spending to make it happen.

These intentions have trickled out through Mark Zuckerberg interviews and public filings. But the most illuminating glimpse of Facebook’s AR vision can be gained from the technical deep dives from its Chief Scientist Michael Abrash each year at the Oculus Connect conference.

At his most recent appearance, Abrash discussed Project Aria. This is Facebook’s field research for AR glasses. Devoid of optical displays, these research frames have cameras and sensors so Facebook can gain insight into social dynamics and behavior around AR glasses.

But more notable — and related to geospatial AR — is Facebook’s work to build Live Maps. This is its vision for data that’s anchored to people and places in order to evoke meaning through AR interfaces. That could be everything from social connections to product details.

Layered Meaning

In order to achieve these lofty goals for Live Maps, Abrash points to a critical building block: machine perception. To augment one’s reality, a device has to first understand that reality. And that has to happen on geometric (shapes/depth) and semantic (meaning) levels.

This is the area otherwise known as the AR cloud. The basics are that the requisite spatial maps of the world will be so data-intensive that there will need to be a cloud repository that AR devices can tap on an on-demand basis, rather than reinvent the wheel with each experience.

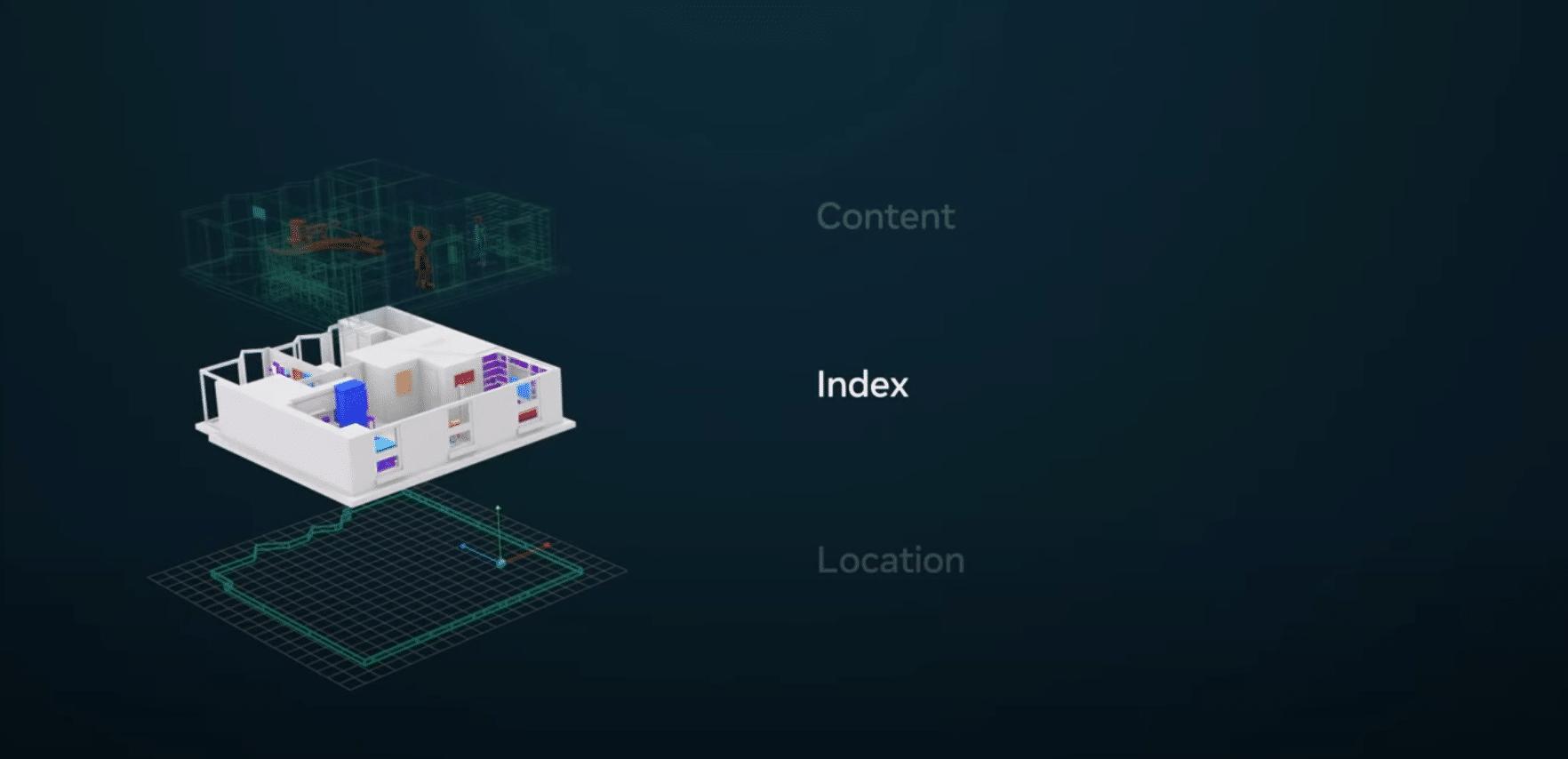

Each tech giant chasing the AR dream has its own flavor of the AR cloud, and Facebook’s is Live Maps. Abrash outlines three key layers, starting with something we already have: location data. Some portion of the heavy lifting can be done by knowing where you’re standing.

The second layer is an index layer. Think of this like Google’s search index for the physical world, à la Internet of Places. This involves the geometry of a given room, stadium, or hiking path. Like anything else, some of it will be public and some will be private, and it will be perpetually evolving.

The third layer is content, or what Abrash describes as ontology. These are the more personal and dynamic relationships between objects and their meaning to a given person. This will be the layer that has the most permissioned access, and will do things like help you find your keys.
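
To make the three layers concrete, here is a minimal Python sketch of how they might stack. Every name in it (`Place`, `IndexEntry`, `ContentNote`, `LiveMap`, `lookup`) is hypothetical and invented for illustration. Facebook has not published an API for Live Maps, so treat this as a toy model of the layering Abrash describes, not as its implementation.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Place:
    """Layer 1: coarse location (hypothetical schema)."""
    lat: float
    lon: float


@dataclass
class IndexEntry:
    """Layer 2: a mapped space in the index, public or private."""
    name: str
    place: Place
    public: bool
    allowed_users: set[str] = field(default_factory=set)


@dataclass
class ContentNote:
    """Layer 3: personal meaning attached to an indexed space."""
    owner: str
    space: str
    text: str


class LiveMap:
    """Toy stand-in for a shared map; not a real service."""

    def __init__(self) -> None:
        self.index: list[IndexEntry] = []
        self.content: list[ContentNote] = []

    def lookup(self, user: str, here: Place, radius_deg: float = 0.001):
        # Layer 1: location narrows the search to nearby spaces.
        nearby = [
            e for e in self.index
            if abs(e.place.lat - here.lat) <= radius_deg
            and abs(e.place.lon - here.lon) <= radius_deg
        ]
        # Layer 2: keep only spaces this user is allowed to see.
        names = {e.name for e in nearby if e.public or user in e.allowed_users}
        # Layer 3: the most permissioned layer; only the user's own notes.
        notes = [
            n.text for n in self.content
            if n.owner == user and n.space in names
        ]
        return sorted(names), notes


# Assumed sample data, purely illustrative.
home = Place(37.4850, -122.1480)
live_map = LiveMap()
live_map.index.append(IndexEntry("city stadium", Place(37.4851, -122.1481), public=True))
live_map.index.append(IndexEntry("living room", home, public=False, allowed_users={"alice"}))
live_map.content.append(ContentNote("alice", "living room", "keys are on the bookshelf"))
```

Calling `live_map.lookup("alice", home)` returns both spaces plus the note about the keys, while the same call for another user returns only the public stadium and no notes. The point of the sketch is the order of operations: location prunes first, the index filters by access, and content is the narrowest and most personal layer.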

Fourth Modality

One of the challenges with the AR cloud (not specific to Facebook) is assembling all of that data and keeping it accurate and updated. Niantic, for example, takes a crowdsourcing approach: with the help of technology from its 6D.ai acquisition, Pokémon Go players can scan the world while playing.

Facebook’s approach goes back to the aforementioned Project Aria. Though it will start out as internal research to gain insight into the social dynamics of smart glasses, an eventual version of these glasses could be used to crowdsource the spatial mapping of the inhabitable earth.

As we’ll explore in a future installment of this Space Race series, this is similar to how Snapchat will piece together its own version of an AR cloud. It hopes to mine data from snaps and Spectacles-captured video to assemble spatial maps that will power its geospatial AR play.

All of these cases represent a fourth modality for mapping. After satellites (GPS), cars (Street View), and backpacks (exploratory Street View), AR drills down one more level of granularity. This will be key for the “index” layer mentioned above, and for gaining first-person perspectives.

Think of the crowdsourced approach like Waze in that users benefit from the data while collecting it and feeding into the collective cloud. But as you can imagine, the key word here is scale, which Facebook has in its sheer user base. That plus billions in spending could give it an edge.

We’ll pause there and return in the next Space Race segment with a different company profile…