As you likely know, one of AR’s foundational principles is to fuse the digital and physical. The real world is a key part of that formula, and real-world relevance is often defined by location. That same relevance, combined with scarcity, is what drives real estate value: location, location, location.

Synthesizing these factors, one of AR’s battlegrounds will be in augmenting the world in location-relevant ways. That could be wayfinding with Google Live View, or visual search with Google Lens. Point your phone (or future glasses) at places and objects to contextualize them.

As you can tell from the above examples, Google will have a key stake in this “Internet of Places.” But it’s not alone. Apple signals interest in location-relevant AR through its geo-anchors and Project Gobi. Facebook is building “Live Maps,” and Snapchat is pushing Local Lenses.

These are just a few utilitarian, commerce, and social angles. How else will geospatial AR materialize? What are its active ingredients, including 5G and the AR cloud? This is the theme of our new series, Space Race, where we’ll break down who’s doing what, starting with Google.

Part I: Google

Tech giants see different versions of spatial computing’s future. These visions often trace back to their core businesses. Facebook wants to be the social layer to the spatial web, while Amazon wants to be the commerce layer and Apple envisions a hardware-centric multi-device play.

Where does Google fit in all of this? It wants to be the knowledge layer of the spatial web. Just as it amassed immense value by indexing the web and building a knowledge graph, it wants to index the physical world and be its relevance authority. This is what we call the Internet of Places (IoP).

Besides the financial incentive to future-proof its core search business with next-generation visual interfaces (per our ongoing “follow the money” exercise), Google’s actual moves triangulate an IoP play. These include its “search what you see” Google Lens and its Live View 3D navigation.

Google’s latest moves include updates to its Live View visual navigation that help users identify and qualify local businesses. These were unveiled at its recent Search On 2020 event and follow soon after its Earth Cloud Anchors, which will let users anchor digital content to physical places.

Level-Setting

Before diving into these latest moves in greater detail, let’s level-set on Google’s broader AR play. The company continues to invest in visual search to future-proof its core search business, as noted. Given Gen-Z’s camera affinity, Google wants to lead the charge in making the camera a search input.

This includes Google Lens, which lets users point their cameras at real-world objects to contextualize them. This starts with general interest searches like pets and flowers, but the real opportunity is a high-intent shopping engine that’s monetized in Google-esque ways.

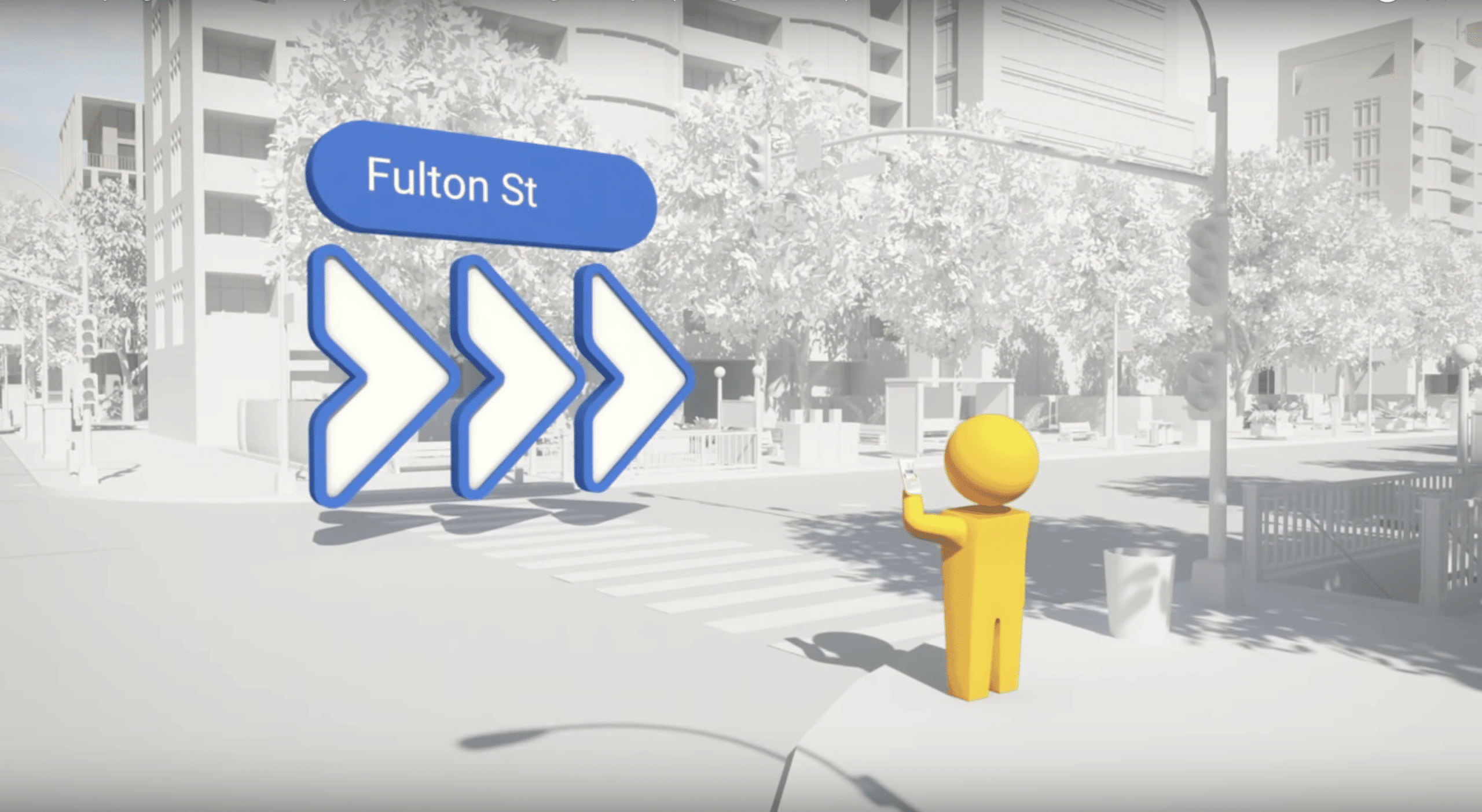

Live View similarly uses the camera to help users navigate with 3D urban walking directions. Rather than doing the “mental mapping” needed to translate a 2D map to 3D space, users hold up their phones to see directional arrows overlaid on the street, which is more intuitive. And like Google Lens, monetization is on the road map.

Google is uniquely positioned for these efforts as they tap into its knowledge graph and the data it’s assembled from being the world’s search engine for 20 years. Lens taps into Google’s vast image database for object recognition, while Live View uses Street View imagery.

Master Plan

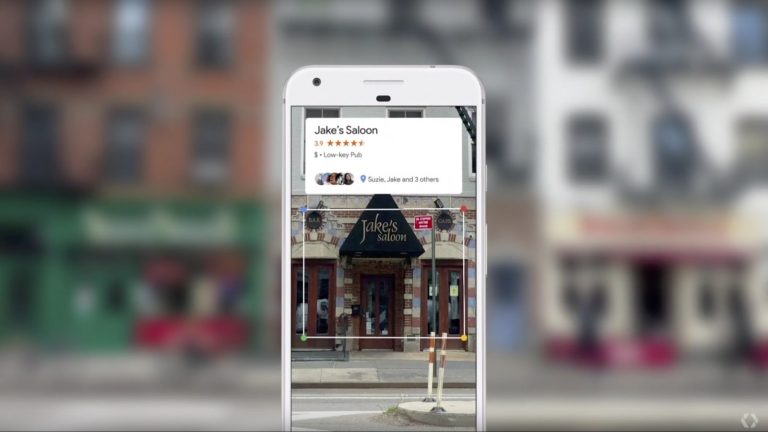

That brings us back to the present. Google’s latest visual search play combines Lens and Live View. While navigating with Live View, Google now offers small click targets on your touchscreen when it recognizes a business storefront. When tapped, expanded business information appears.

This is something Google has been teasing for a few years. As shown above, it includes business details that help users discover and qualify businesses. The data flows from Google My Business (GMB), and the current version offers structured listings content such as hours of operation.

As mentioned, this follows Google’s less-discussed Earth Cloud Anchors. Another vector in Google’s overall visual search and AR master plan, this ARCore feature lets users geo-anchor digital content for others to view. This could feed into Google Lens and Live View.

In other words, Cloud Anchors could engender a user-generated component of visual search. It could have social, educational, and serendipitous use cases such as digital scavenger hunts and hidden notes for friends. But a use case for local business reviews could also develop.

Visual SEO

That last part is most aligned with Google’s DNA, as it’s become a local search powerhouse, with lots of revenue to show for it. This has driven Google My Business, which involves assembling local business data directly from the source and indexing the universe of small businesses.

In fact, we’ve long speculated that an Internet of Places could breed a new visual flavor of SEO. In other words, if a visual front end phases into ubiquity, starting with Gen-Z, it could drive businesses to optimize metadata for that UX, just as they do today for web search in GMB.

But we’re not there yet. Outcomes will hinge on the wild card that is user traction. If the use case falls flat, this won’t be a channel that local businesses need to worry about. Though we think visual search is a potential AR killer app, it remains to be seen whether people will use it en masse.

That’s why Google continues to tread carefully into AR and visual search. It jumped too quickly into VR before unwinding those efforts over the past few years. AR is much closer to search, but Google needs to feel out user behavior and optimal UX. Then, just like search itself, monetization could follow.